Abstract

Dam behaviour is difficult to predict with high accuracy. Numerical models for structural calculation solve the equations of continuum mechanics, but are subject to considerable uncertainty as to the characterisation of materials, especially with regard to the foundation. As a result, these models are often incapable of calculating dam behaviour with sufficient precision. Thus, it is difficult to determine whether a given deviation between model results and monitoring data represents a relevant anomaly or incipient failure.

By contrast, there is a tendency towards automating dam monitoring devices, which allows the reading frequency to be increased and results in a greater amount and variety of available data, such as displacements, leakage and interstitial pressure.

This increasing volume of dam monitoring data makes it interesting to study the ability of advanced tools to extract useful information from observed variables.

In particular, in the field of Machine Learning (ML), powerful algorithms have been developed to tackle problems where the amount of data is much larger or the underlying phenomenon is much less understood.

In this monograph, the possibilities of machine learning techniques are analysed for application to dam structural analysis based on monitoring data. The typical characteristics of the data sets available in dam safety are taken into account, as regards their nature, quality and size.

A critical literature review is performed, from which the key issues to consider for implementation of these algorithms in dam safety are identified.

A comparative study of the accuracy of a set of algorithms for predicting dam behaviour is carried out, considering radial and tangential displacements and leakage flow in a 100-m high dam. The results suggest that the algorithm called “Boosted Regression Trees” (BRT) is the most suitable, being more accurate in general, while flexible and relatively easy to implement.

The possibilities of interpretation of the mentioned algorithm are evaluated, to identify the shape and intensity of the association between external variables and the dam response, as well as the effect of time. The tools are applied to the same test case, and allow more accurate identification of the time effect than the traditional statistical method.

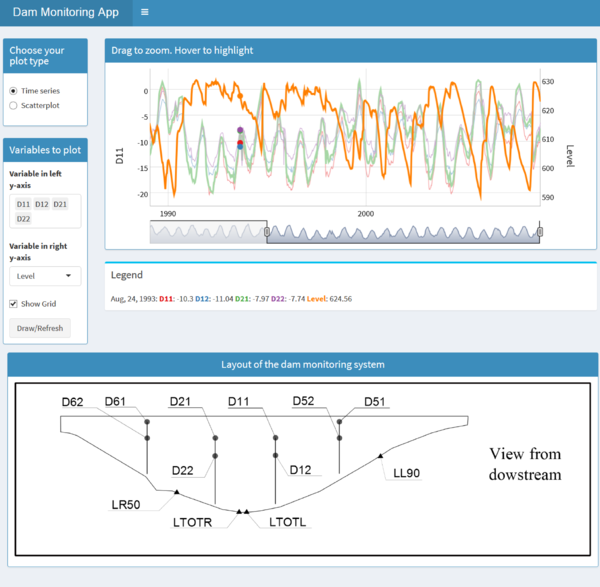

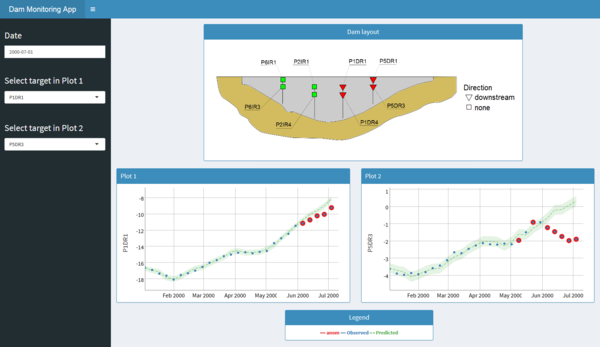

Finally, a methodology for the implementation of predictive models based on BRT for early detection of anomalies is presented, together with its implementation in an interactive tool that provides information on dam behaviour, through a set of selected devices. It allows the user to easily verify whether the actual data for each of these devices are within a pre-defined normal operation interval.

Summary

The structural behaviour of dams is difficult to predict accurately. Numerical models for structural calculation solve the equations of continuum mechanics well, but are subject to great uncertainty regarding the characterisation of materials, especially with respect to the foundation. As a consequence, these models are frequently unable to calculate dam behaviour with sufficient precision. It is therefore difficult to discern whether a state that departs to some extent from normality implies a situation of structural risk.

By contrast, many dams in operation have a large number of monitoring devices, which record the evolution of various indicators such as displacements, leakage flow or pore pressure, among others. Although many dams today have few observed data, there is a clear trend towards installing a larger number of devices that record behaviour more frequently.

As a consequence, a growing volume of data reflecting dam behaviour tends to be available, which makes it interesting to study the ability of tools developed in other fields to extract useful information from observed variables.

In particular, in the field of machine learning, very powerful algorithms have been developed to understand phenomena whose mechanism is poorly known and about which large volumes of data are available.

This monograph analyses the possibilities of the most recent machine learning techniques for application to dam structural analysis based on monitoring data. To this end, the usual characteristics of the data series available for dams are taken into account, as regards their nature, quality and quantity.

A critical review of the existing literature was carried out, from which the key aspects to consider when implementing these algorithms in dam safety were identified.

A comparative study was carried out of the accuracy of a set of algorithms for predicting dam behaviour, considering radial displacements, tangential displacements and leakage. Real data from an arch dam were used. The results suggest that the algorithm called “Boosted Regression Trees” (BRTs) is the most suitable, being more accurate in general, as well as flexible and relatively easy to implement.

Additionally, the possibilities of interpreting this algorithm were identified, in order to extract the shape and intensity of the association between the external variables and the dam response, as well as the effect of time. The tools employed were applied to the same pilot case, and allowed the effect of time to be identified more precisely than with the traditional statistical method.

Finally, a methodology was developed for applying BRT-based prediction models to real-time anomaly detection. This methodology was implemented in an interactive software tool that provides information on dam behaviour through a set of selected devices. It allows the user to check at a glance whether the actual data from each of these devices lie within the normal operating range of the dam.

Nomenclature

Magnitude of the artificial anomaly introduced

Coefficients in the HST formula

Number of days since 1 January

Output of predictive model for

Output of an ensemble model at iteration

Weak learner fitted at iteration

Index of accuracy of anomaly detection models

Reservoir level

Argument of the trigonometrical functions in the HST model.

Version of a BRT model

minimum

Residuals average

Number of records in a period

Regularisation parameter

Number of inputs

Model residuals (prediction-observation)

Variance

Subsample of a training set used to fit

Standard deviation of the residuals

time

Detection time

Input variable

Observed value for input at time

Equally-spaced values of to be used in PDPs

Measured response variable

Predicted response variable

Observed value of the output variable at time

Mean of

ANFIS Adaptive Neuro-Fuzzy System

ARX Auto-Regressive Exogenous

BRT Boosted Regression Trees

FEM Finite Element Method

GA Genetic Algorithms

HST Hydrostatic Season Time

HSTT Hydrostatic Season Temperature Time

KNN K-Nearest Neighbours

MARS Multivariate Adaptive Regression Splines

ML Machine Learning

MLP Multi Layer Perceptron

MLR Multiple Linear Regression

NN Neural Network

PCA Principal Component Analysis

RF Random Forest

SVM Support Vector Machine

ACA Agencia Catalana de l'Aigua

ARV Average Relative Variance

KDE Kernel Density Estimation

MAE Mean Absolute Error

MINECO Ministerio de Economía y Competitividad

MSE Mean Squared Error

OOR Out of range

PDP Partial dependence plot

RI Relative Influence

1 Introduction and Objectives

1.1 Introduction

Dams play a key role in our society, since they provide services that are essential to our way of living, such as flood defence, water storage and power generation. Moreover, a potential failure might have catastrophic consequences in terms of casualties and of economic and environmental losses, as past events have unfortunately shown [1].

As a consequence, safe dam operation needs to be ensured, and potentially anomalous performance must be detected as early as possible, to avoid serious malfunctioning or failure. While the first objective is achieved by means of an appropriate maintenance program both for the structure and the hydro-electromechanical devices, failure prevention by early detection of anomalies is primarily based on surveillance tasks [2], [3].

In turn, surveillance is based on two main pillars [2]: a) visual inspection and b) monitoring of dam and foundation. Its main objective is to reduce the probability of failure [3].

Lombardi [4] formulated the objectives of dam and foundation monitoring in a concise way, by posing four questions to be answered:

- Does the dam behave as expected/predicted?

- Does the dam behave as in the past?

- Does any trend exist which could impair its safety in the future?

- Was any anomaly in the behaviour of the dam detected?

The answer to these questions requires the analysis of dam monitoring data in two ways:

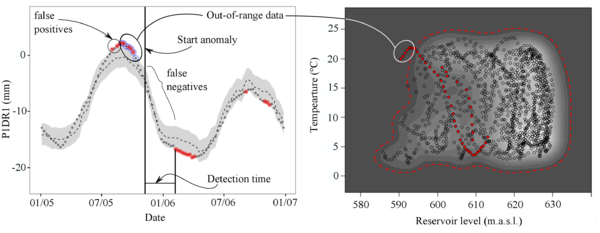

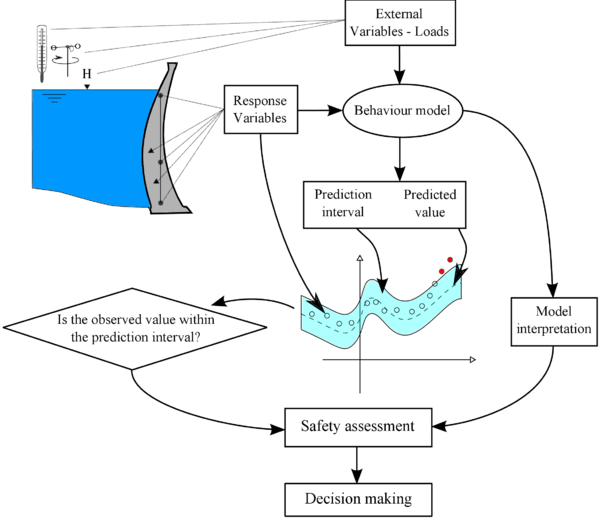

- In the short term (sometimes “on-line”), the measurements of some devices are compared to reference values, which correspond to the dam response to the concurrent loads in “normal” or “safe” condition. These reference values, and the associated prediction intervals above and below them, are obtained from some behaviour model, which accounts for the actual value of the acting loads. Measurements outside this interval are considered potential symptoms of anomalous behaviour and are therefore verified further.

- In the medium to long term, behaviour models and observed data are analysed to draw conclusions on the overall dam performance. In particular, the association between each load and output is observed, and the evolution over time is evaluated.

The result of this analysis is essential in dam safety assessment and decision making, together with the rest of available information about dam construction and operation, including visual inspection. Figure 1 shows schematically the monitoring data analysis process.

Figure 1: Flow diagram of dam monitoring data analysis.
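The short-term check described above can be sketched in a few lines. This is only a minimal illustration, assuming that the prediction interval is symmetric and equal to the model prediction plus or minus a multiple `k` of the standard deviation of past residuals; the function name `flag_out_of_range`, the value `k = 2` and the sample readings are hypothetical and are not taken from the monograph.

```python
import numpy as np

def flag_out_of_range(observed, predicted, residual_sd, k=2.0):
    """Return True where |observation - prediction| exceeds k * residual_sd.

    Assumption: a symmetric interval of half-width k * residual_sd
    around the model prediction defines "normal" behaviour.
    """
    observed = np.asarray(observed, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return np.abs(observed - predicted) > k * residual_sd

# Hypothetical radial displacements (mm): model predictions and readings
predicted = np.array([10.0, 10.5, 11.0, 11.2])
observed = np.array([10.2, 10.4, 12.9, 11.1])

# Hypothetical residual standard deviation estimated on past data (mm)
flags = flag_out_of_range(observed, predicted, residual_sd=0.5, k=2.0)
print(flags)
```

Only the third reading deviates from its prediction by more than the assumed half-width of 1 mm, so it is the only one that would be passed on for further verification.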

1.2 Motivation

Dam monitoring data analysis, and the answer to the above-mentioned questions, require a behaviour model that provides an estimate of the response of the structure at a given time, taking into account the acting loads.

Existing models can be classified as follows [5]:

- Deterministic: typically based on the finite element method (FEM), these methods calculate the dam response on the basis of the physical governing laws.

- Statistical: exclusively based on dam monitoring data.

- Hybrid: deterministic models whose parameters have been adjusted to fit the observed data.

- Mixed: comprising a deterministic model to predict the dam response to hydrostatic pressure, and a statistical one to consider deformation due to thermal effects.

Numerical models based on the FEM provide useful estimates of dam displacements and stresses, but are subject to a significant degree of uncertainty in the characterisation of the materials, especially with respect to the structural behaviour of the foundation and the thermal evolution of the dam body in concrete (particularly arch) dams. Other assumptions and simplifications have to be made regarding geometry and boundary conditions. These tools are essential during the initial stages of the life cycle of the structure, since not enough data are then available to build data-based predictive models. However, their results are often not accurate enough for a precise assessment of dam safety.

This is more acute when dealing with certain variables such as leakage in concrete dams and their foundations, due to the intrinsic features of the physical process, which is often non-linear [6] and responds to threshold and delayed effects [7], [8]. Numerical analysis cannot deal with such a phenomenon, because comprehensive information about the location, geometry and permeability of each fracture would be needed. Other phenomena are also difficult to reproduce with numerical models, such as the onset of failure by concrete plasticising or cracking, although tools have been developed for this purpose [9].

These drawbacks are shared by all approaches that make use of a FEM model: deterministic, hybrid and mixed.

Many of the dams in operation have a number of monitoring devices that record the evolution of various indicators such as displacements, leakage flow or pore water pressure, among others. Although there are still many dams with few observed data, there is a clear trend towards the installation of a larger number of devices with higher data acquisition frequency [3]. As a result, there is an increasing amount of information on dam performance.

Statistical tools employed in regular engineering practice for dam monitoring data analysis are relatively simple. They are frequently limited to graphical exploration of the time series of data [10], along with simple statistical models [3], [11]. The hydrostatic-season-time (HST) model [12] is the most widely applied, and the only generally accepted by practitioners.

HST is based on multiple linear regression considering the three most influential external variables: hydrostatic load, air temperature and time. It often provides useful estimations of displacements in concrete dams [13], and does not require air temperature time series data (it is assumed to follow a constant yearly cycle). Moreover, the resulting model is easily interpretable, since the contribution of each input is assumed to be cumulative.

Nonetheless, HST also features conceptual limitations that reduce the prediction accuracy [13] and may lead to misinterpretation of the results [14]. For example, it is based on the assumption that the hydrostatic load and the temperature are independent, whereas it is well known that they are coupled, since the thermal field is influenced by the water temperature at the upstream face [15]. In addition, it lacks flexibility, since the functions have to be defined beforehand, and thus may not represent the true behaviour of the structure [7]. Also, it is not well suited to modelling non-linear interactions between input variables [6].

In recent years, non-parametric techniques have emerged as an alternative to HST for building data-based behaviour models [16], e.g. support vector machines (SVM) [17], neural networks (NN) [18] and adaptive neuro-fuzzy systems (ANFIS) [19], among others [16]. In general, these tools are better suited to modelling non-linear cause-effect relations, as well as interactions among external variables, such as that previously mentioned between hydrostatic load and temperature. On the other hand, they are typically more difficult to interpret, which has led them to be termed “black box” models (e.g. [20]). As a consequence, the vast majority of related works are limited to verifying their prediction accuracy when estimating specific output variables (e.g. [21], [22], [23]).

Therefore, dam engineers face a dilemma: the HST model is widely known and used and easily interpretable. However, it is based on some incorrect assumptions, and its accuracy can be increased. On the other hand, more flexible and accurate models are available, but they are more difficult to implement and analyse.

The research aims at solving this issue by exploring the possibilities of machine learning algorithms to improve dam monitoring data analysis and safety assessment.

1.3 Objectives

The main objective is the development of a methodology for dam behaviour analysis based on machine learning, efficient in early detection of anomalies. To achieve that goal, the following specific objectives need to be fulfilled:

1. Literature review on data-based models for dam monitoring data analysis, with focus on the following topics:

- Critical analysis of relevant articles and conference proceedings.

- Identification of areas to improve in the field of dam monitoring data interpretation.

- Revision of the statistical and machine learning tools with potential for application to the problem to be solved.

- Verification of the applicability of each tool to predict output variables of different nature.

- Analysis of the key methodological issues as regards the implementation of predictive models in day-to-day practice.

- Selection of a group of algorithms for a more detailed analysis.

2. Algorithm selection, in terms of accuracy, flexibility, robustness and ease of implementation.

3. Analysis of the effect of the training set size, to have an estimate on the time period required from the first filling before having the possibility of employing some data-based behaviour model.

4. Identification of tools for interpretation of ML models, i.e., analysis of the influence of each input on dam response and retrospective assessment of dam performance to detect potential changes in time.

5. Implementation of the methodology in a software tool for anomaly detection, with the following functionalities:

- Accuracy: the better the prediction of the model fits the actual response of the dam, the more reliable the conclusions drawn from its interpretation [24]. Moreover, a more accurate model will result in a narrower prediction interval which in turn would allow earlier anomaly detection.

- Flexibility: each dam typology presents different characteristics in terms of the most influential loads, the strength and nature of their association with dam response, the most representative output variables and the potential failure modes, among other aspects. The behaviour model should ideally be able to adapt to highly different situations.

- Interpretability: model analysis should yield information on the nature and intensity of the association between each input and the response, and in particular on the time effect, i.e., whether dam performance changed over time, and in which way.

- Ability to detect anomalies: a criterion to determine a prediction interval around the model prediction is required, to classify upcoming observations of different output variables as “normal” or “potentially anomalous”.

- Ability to identify extraordinary situations due to load combination.

- A graphical user interface for its practical application, including tools for data exploration, model fitting and anomaly detection.

1.4 Publications

This monograph is presented as a compendium of articles previously published in indexed scientific journals. The articles, and their association with the chapters of this document, are listed below:

Chapter 2 contains a summary of the articles related to the literature review:

- Salazar, F., Morán, R., Toledo, M.Á., Oñate, E. Data-Based Models for the Prediction of Dam Behaviour: A Review and Some Methodological Considerations. Archives of Computational Methods in Engineering (2015). doi:10.1007/s11831-015-9157-9

- Salazar, F., Toledo, M.Á., Discussion on “Thermal displacements of concrete dams: Accounting for water temperature in statistical models”, Engineering Structures, Available online 13 August 2015, ISSN 0141-0296,

Chapter 3 is a summary of the article dealing with algorithm selection, based on a comparison of candidate techniques:

- Salazar, F., Toledo, M.Á., Oñate, E., Morán, R. An empirical comparison of machine learning techniques for dam behaviour modelling, Structural Safety, Volume 56, September 2015, Pages 9-17, ISSN 0167-4730,

Chapter 4 focuses on model interpretation, and is associated with the fourth paper in the compendium:

- Salazar, F., Toledo, M.Á., Oñate, E., Suárez, B. Interpretation of dam deformation and leakage with boosted regression trees, Engineering Structures, Volume 119, 15 July 2016, Pages 230-251, ISSN 0141-0296,

The overall methodology for anomaly detection is described in Chapter 5. It takes into account the conclusions of the precedent works, and is the subject of another article currently under review.

Finally, part of the work was presented in the following conferences:

- Salazar, F., Oñate, E., Toledo, M.Á. Posibilidades de la inteligencia artificial en el análisis de auscultación de presas. III Jornadas de Ingeniería del Agua, Valencia (Spain), October 2013 (in Spanish).

- Salazar, F., Morera, L., Toledo, M.Á., Morán, R., Oñate, E. Avances en el tratamiento y análisis de datos de auscultación de presas. X Jornadas Españolas de Presas, Spancold, Sevilla (Spain), February 2015 (1) (in Spanish).

- Salazar, F., Oñate, E., Toledo, M.Á. Nuevas técnicas para el análisis de datos de auscultación de presas y la definición de indicadores cuantitativos de su comportamiento. IV Jornadas de Ingeniería del Agua, Córdoba (Spain), October 2015.

- Salazar, F., González, J.M., Toledo, M.Á., Oñate, E. A methodology for dam safety evaluation and anomaly detection based on boosted regression trees. 8th European Workshop on Structural Health Monitoring, Bilbao (Spain), July 2016.

A copy of the post-print version of the articles is included in Appendix 7, while the works presented in conferences form Appendix 8.

Therefore, Chapters 2, 3 and 4 include a summary of the methods and results of the corresponding articles, while Chapter 5 contains the final part of the research, in which the previous results were taken into account.

(1) Section 3 of this paper was carried out by León Morera

2 State of the art review

2.1 Introduction

A literature review was performed on a selection of articles and conference proceedings featuring examples of application of data-based models in dam behaviour modelling. This chapter includes a summary of this analysis.

In what follows, $Y$ stands for some response variable (e.g. displacement, leakage flow, crack opening, etc.), which is estimated in terms of a set of inputs $X$: $\hat{Y} = \hat{F}(X)$. The observed values are denoted as $(x_i^j, y_i)$, $i = 1, \dots, N$, $j = 1, \dots, p$, where $N$ is the number of observations and $j$ refers to the dimensions of the input space.

2.2 Statistical and machine learning techniques used in dam monitoring analysis

2.2.1 Models based on linear regression

2.2.1.1 The Hydrostatic-Season-Time model (HST)

Linear regression is the simplest statistical technique, appropriate to reproduce certain phenomena. It is also the basis of the most popular data-based behaviour model in dam engineering: the Hydrostatic-Season-Time (HST). It was first proposed by Willm and Beaujoint in 1967 [12].

It is based on the assumption that the dam response is a linear combination of three effects:

$$\hat{Y} = F_1(h) + F_2(s) + F_3(t) \qquad (2.1)$$

- A reversible effect of the hydrostatic load, which is commonly considered in the form of a fourth-order polynomial of the reservoir level ($h$) ([5], [25], [7]):

$$F_1(h) = a_0 + a_1 h + a_2 h^2 + a_3 h^3 + a_4 h^4 \qquad (2.2)$$

- A reversible influence of the air temperature, which is assumed to follow an annual cycle. Its effect is approximated by the first terms of the Fourier series:

$$F_2(s) = a_5 \cos(s) + a_6 \sin(s) + a_7 \sin^2(s) + a_8 \sin(s)\cos(s) \qquad (2.3)$$

where $s = 2\pi d / 365.25$ and $d$ is the number of days since 1 January.

- An irreversible term due to the evolution of the dam response over time. A combination of monotonic time-dependent functions is frequently considered. The original form is [12]:

$$F_3(t) = a_9 \log(t) + a_{10} e^{t} \qquad (2.4)$$

The model parameters are adjusted by the least squares method: the final model is based on the values which minimise the sum of the squared deviations between the model predictions and the observations.
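The least squares fit can be illustrated with a short sketch. It assumes the commonly used HST terms (an intercept, a fourth-order polynomial in a normalised reservoir level, four seasonal terms in $s = 2\pi d/365.25$, and logarithmic and exponential time terms); the synthetic data, the coefficient values and the function name `hst_design_matrix` are hypothetical and serve only to make the example self-contained.

```python
import numpy as np

def hst_design_matrix(h, d, t):
    """Build the regressor matrix of an HST-type model (assumed functional forms).

    h: reservoir level normalised to [0, 1]
    d: number of days since 1 January
    t: time since the start of the series, normalised to (0, 1]
    """
    s = 2.0 * np.pi * d / 365.25
    return np.column_stack([
        np.ones_like(h), h, h**2, h**3, h**4,                      # hydrostatic term
        np.cos(s), np.sin(s), np.sin(s)**2, np.sin(s) * np.cos(s),  # seasonal term
        np.log(t), np.exp(t),                                      # time term
    ])

# Synthetic daily records (hypothetical, for illustration only)
rng = np.random.default_rng(0)
n = 2000
day_index = np.arange(n)
d = day_index % 365.25
t = (day_index + 1) / n
h = np.clip(0.5 + 0.4 * np.sin(2.0 * np.pi * day_index / 500.0)
            + 0.05 * rng.standard_normal(n), 0.0, 1.0)

X = hst_design_matrix(h, d, t)
true_coef = np.array([20.0, 5.0, -3.0, 2.0, 1.0, 1.5, -2.0, 0.5, 0.3, 0.8, 0.2])
y = X @ true_coef + 0.1 * rng.standard_normal(n)   # "observed" displacement (mm)

# Least squares: coefficients minimising the sum of squared residuals
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = X @ coef - y                            # prediction minus observation
rmse = np.sqrt(np.mean(residuals**2))
print(f"RMSE of the fitted model: {rmse:.3f} mm")
```

Because the model is linear in its coefficients, each of the three effects can afterwards be evaluated separately by multiplying the corresponding columns of the matrix by their fitted coefficients.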

The main advantages are:

- It frequently provides useful estimations of displacements in concrete dams [13].

- It is simple and thus easily interpretable: the effect of each external variable can be isolated in a straightforward manner, since they are assumed to be cumulative.

- Since the thermal effect is considered as a periodic function, the time series of air temperature are not required. This widens the possibilities of application, as only the reservoir level variation needs to be available to build an HST model.

- It is well known by practitioners and frequently applied in several countries [13].

It also features relevant limitations:

- The functions have to be defined beforehand, and thus may not represent the true behaviour of the structure [7].

- The governing variables are supposed to be independent, although some of them have been proven to be correlated [26].

- It is not well suited to modelling non-linear interactions between input variables [6].

Several alternatives have been proposed to overcome these shortcomings. Penot et al. [27] introduced the HSTT method, in which the thermal periodic effect is corrected according to the actual air temperature.

Related approaches also based on linear regression have been applied in dam safety, often by adding further input variables following some heuristic or after a trial-and-error process [18], [6], [7], [11], [28]. In all cases, the need to make a priori assumptions about the model remains, although variable selection procedures have also been proposed: for example, Stojanovic et al. [29] combined greedy MLR with variable selection by means of genetic algorithms (GA).

2.2.1.2 Consideration of delayed effects

It is well known that dams respond to certain loads with some delay [8]. The most typical examples are the change in pore pressure in an earth-fill dam due to reservoir level variation [30] and the influence of the air temperature in the thermal field in a concrete dam body [7].

Several alternatives have been proposed to account for these effects. The most popular is based on an enrichment of the linear regression by including moving averages or gradients of some explanatory variables in the set of predictors. Guedes and Coelho [31] predicted the leakage flow on the basis of the mean reservoir level over a five-day period. Sánchez Caro [32] included the 30-day and 60-day moving averages of the reservoir level in the conventional HST formulation to predict the radial displacements of El Atazar Dam. Further examples are due to Popovici et al. [33] and Crépon and Lino [34].
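As an illustration of this enrichment, the following sketch computes a trailing moving average of the reservoir level and appends it to the set of predictors. It assumes equally spaced (e.g. daily) records; the helper `trailing_mean`, the window of 3 records and the sample values are hypothetical.

```python
import numpy as np

def trailing_mean(x, window):
    """Mean of the current and the previous (window - 1) records.

    The first (window - 1) positions are NaN, since not enough
    past records exist to compute the average.
    """
    x = np.asarray(x, dtype=float)
    out = np.full(x.shape, np.nan)
    csum = np.cumsum(np.insert(x, 0, 0.0))
    out[window - 1:] = (csum[window:] - csum[:-window]) / window
    return out

# Hypothetical reservoir levels at consecutive records
level = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
ma3 = trailing_mean(level, 3)

# Predictors: current level plus its moving average, dropping incomplete rows
valid = ~np.isnan(ma3)
predictors = np.column_stack([level, ma3])[valid]
print(predictors)
```

The resulting columns can be added to the regressors of a linear model, at the cost of discarding the first records of the series, for which the average is not defined.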

A more formal alternative to conventional HST to account for delayed effects was proposed by Bonelli [35], [25]. It was intended to account for the delayed response of an arch dam in terms of the temperature field, with the final aim of predicting radial displacements. Lombardi et al. [4] suggested an equivalent formulation, also to compute the thermal response of the dam to changes in air temperature. Although the formulation differs from a multiple linear regression, its numerical integration leads to a predictive model which is a linear combination of:

- the value of the predictors at $t$ and $t - \Delta t$.

- the value of the output variable at $t - \Delta t$.

which is the conventional form of a first-order auto-regressive exogenous (ARX) model.
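A first-order ARX model of this kind can be fitted by ordinary least squares, as in the sketch below. It assumes a single predictor `x`, a unit time step and noise-free synthetic data generated with hypothetical "true" parameters, so that the fit can be checked against them; none of these values come from the cited works.

```python
import numpy as np

# Synthetic series from y[k] = c + a*y[k-1] + b0*x[k] + b1*x[k-1]
rng = np.random.default_rng(1)
n = 300
x = rng.standard_normal(n)               # hypothetical external load
c, a, b0, b1 = 0.5, 0.8, 1.0, -0.4       # hypothetical "true" parameters
y = np.zeros(n)
for k in range(1, n):
    y[k] = c + a * y[k - 1] + b0 * x[k] + b1 * x[k - 1]

# Regressors: intercept, lagged output, current and lagged predictor
A = np.column_stack([np.ones(n - 1), y[:-1], x[1:], x[:-1]])
theta, *_ = np.linalg.lstsq(A, y[1:], rcond=None)

# One-step-ahead predictions, using the OBSERVED previous output
one_step = A @ theta
print(np.round(theta, 3))
```

Here the one-step-ahead prediction relies on the observed previous value of the output. Feeding back the previous prediction instead is an alternative that is not shown in this sketch.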

This is the most enriched version of multiple linear regression, where predictors of different types are combined. This gives greater flexibility to the algorithm to adapt to different situations or response variables. By contrast, the number of potential inputs can become very large, which generally leads to the need for some variable selection procedure. For example, Piroddi and Spinelli [36] applied a specific algorithm for selecting 11 out of 40 predictors considered. Principal component analysis (PCA) was also employed for variable selection (e.g. [37], [38], [6]).

A further drawback of linear regression with many input variables is that model interpretation becomes difficult, since the contribution of each predictor is harder to isolate.

Moreover, using the previous (lagged) value of the output to compute a prediction for the current record raises two questions: a) whether the observed previous value or the preceding prediction should be used, and b) whether the model parameters should be readjusted at every time step.

In addition, current and previous values of response variables different from the target variable (e.g. radial displacements or leakage) can be considered as inputs. They implicitly encompass information from unrecorded or unknown phenomena, so the resulting model will probably be more accurate. However, it can also “learn” the anomalous behaviour and consider it as normal, in which case it would be inappropriate to detect anomalies.

The higher accuracy obtained by increasing the information given to the model invites exploring the utility of this approach, keeping its limitations in mind.

2.2.2 Machine learning based models

Among the non-conventional data-based algorithms, neural networks (NNs) are by far the most popular in the field of dam monitoring data analysis. NN models are flexible, and allow modelling complex and highly non-linear phenomena. Most of the published works employ the conventional multi-layer perceptron (MLP) and some sigmoid as the activation function.

These models often result in greater accuracy than MLR, due to the higher flexibility. However, the results are highly dependent on some issues to be determined by the user:

- The network architecture, i.e., number of layers and perceptrons in each layer, which is not known beforehand. Some authors focus on the definition of an efficient algorithm for determining an appropriate network architecture [39], whereas others use conventional cross-validation [18] or a simple trial and error procedure [40].

- The training process, which may reach a local minimum of the error function. The probability of occurrence of this event can be reduced by introducing a learning rate parameter [40].

- The stopping criterion, to avoid over-fitting. Various alternatives are suitable for solving this issue, such as early stopping and regularisation [41].

The fitting procedures greatly differ among authors. While Simon et al. [7] trained an MLP with three perceptrons in one hidden layer for 200,000 iterations, Tayfur et al. [40] used regularisation with 5 hidden neurons and 10,000 iterations. Neither of them followed any specific criterion to set the number of neurons. For his part, Mata [18] tested NN architectures with one hidden layer having 3 to 30 neurons on an independent test data set. He repeated the training of each NN model 5 times with different initialisation of the weights.

It can be concluded that NNs share some of the target features (flexibility, accuracy), but lack ease of implementation and robustness. Model interpretation is not straightforward, and the results depend on the initialisation, so several models need to be trained and their results averaged to increase robustness. Moreover, only numerical inputs can be considered, which need to be normalised for model fitting (and de-normalised afterwards).
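The normalisation and averaging steps mentioned above can be sketched as follows. This is a minimal illustration with a hand-written one-hidden-layer MLP trained by batch gradient descent; the function `fit_mlp`, the network size, the learning rate, the number of iterations and the synthetic data are all hypothetical choices, not those of the cited authors.

```python
import numpy as np

def fit_mlp(X, y, n_hidden=5, n_iter=2000, lr=0.05, seed=0):
    """Train a one-hidden-layer MLP (tanh units, linear output) by gradient descent."""
    rng = np.random.default_rng(seed)          # the seed sets the initial weights
    n, p = X.shape
    W1 = rng.normal(0.0, 0.5, (p, n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(0.0, 0.5, n_hidden)
    b2 = 0.0
    for _ in range(n_iter):
        H = np.tanh(X @ W1 + b1)               # hidden activations
        err = H @ W2 + b2 - y                  # prediction minus target
        gH = np.outer(err, W2) * (1.0 - H**2)  # back-propagated error signal
        W2 -= lr * H.T @ err / n
        b2 -= lr * err.mean()
        W1 -= lr * X.T @ gH / n
        b1 -= lr * gH.mean(axis=0)
    return lambda Xn: np.tanh(Xn @ W1 + b1) @ W2 + b2

# Hypothetical inputs and response
rng = np.random.default_rng(0)
n = 200
level = rng.uniform(600.0, 640.0, n)           # reservoir level (m)
temp = rng.uniform(0.0, 25.0, n)               # air temperature (deg C)
y = 0.002 * (level - 600.0)**2 - 0.1 * temp + 0.05 * rng.standard_normal(n)

# Normalise inputs and output before fitting
X = np.column_stack([level, temp])
x_mean, x_std = X.mean(axis=0), X.std(axis=0)
y_mean, y_std = y.mean(), y.std()
Xn, yn = (X - x_mean) / x_std, (y - y_mean) / y_std

# Train 5 networks with different initial weights, then de-normalise and average
models = [fit_mlp(Xn, yn, seed=s) for s in range(5)]
preds = np.array([m(Xn) * y_std + y_mean for m in models])
mse_each = ((preds - y)**2).mean(axis=1)
mse_avg = ((preds.mean(axis=0) - y)**2).mean()
print(mse_each, mse_avg)
```

The mean squared error of the averaged prediction cannot exceed the average of the individual errors, which is one reason why averaging over initialisations is a common way to reduce the dependence on any single training run.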

Other ML approaches were also applied in dam safety, such as Adaptive neuro-fuzzy systems (ANFIS) ([21], [42]), Support Vector Machines (SVM) ([43], [17]), or K-nearest neighbours (KNN) ([44]). They mostly share the mentioned properties of NNs: greater flexibility and accuracy, more difficult interpretation and potential over-fitting.

2.3 Methodological issues

Although each algorithm has its peculiarities, they all need to face intrinsic aspects of the problem to be solved, which can be analysed independently of the selected technique. Some of them, such as variable selection, have been mentioned before. Others are specific to data-based prediction tasks, and in particular to the dam behaviour problem.

2.3.1 Input selection

The vast majority of statistical and ML algorithms are highly dependent on the inputs considered, which results in a need for input variable selection. The issue has arisen in combination with the use of NN [45], [46], [47], [48], [49], ARX [36], MLR [29] and ANFIS models [21].

The selection of predictors can be useful to reduce the dimensionality of the problem (essential for ARX models), as well as to facilitate the interpretation of the results.

The criterion to be used depends on the type of data available, the main objective of the study (prediction or interpretation), and the characteristics of the phenomenon to be modelled. Engineering judgement is thus essential to make these decisions.

By contrast, some ML algorithms are insensitive to the presence of highly-correlated or uninformative predictors, such as those based on decision trees. Boosted regression trees (BRTs) and random forests (RFs) stand out among those included in this category, though they are relatively new and unknown for most dam engineers.

2.3.2 Model interpretation

There is an obvious interest in model interpretation to analyse the effect of each input on dam response, once the parameters have been fitted. This contributes to answering the first question posed by Lombardi [4]: does the dam behave as expected/predicted? For example, an arch dam is expected to move in the downstream direction under a combination of high hydrostatic load and low temperature.

The evolution over time is particularly relevant, since it is related to the second and third questions [4]:

- Does the dam behave as in the past?

- Does any trend exist which could impair its safety in the future?

The effect of time, hydrostatic load and temperature can be easily obtained from an HST model, since it is based on the assumption that they are additive. However, it was already mentioned that they are actually correlated. Paraphrasing Breiman [24], when a pre-defined model is fit to data, “the conclusions drawn are about the model's mechanism, not about nature's mechanism” (1). Moreover, “if the model is a poor emulation of nature, the conclusions may be wrong”.

Therefore, the interpretation of a more accurate predictive model will offer more reliable conclusions. The price to be paid for the greater flexibility and accuracy is the more difficult interpretation.

The vast majority of published studies are limited to the analysis of model accuracy for the output variable under consideration, as compared to HST. Only a few deal with model interpretation, that is, with the strength and nature of the contribution of each action to the dam response. They are often limited to cases where a low number of inputs are considered (e.g. [18], [50], [7], [33]).

(1) Breiman employs “nature” to denote any partially understood phenomenon which associates the predictor variables to the outcome. In this research, “nature's mechanism” is homologous to “dam behaviour”.

2.3.3 Training and validation sets

Accuracy is the main (and most obvious) measure of model performance, i.e. how well the model predictions fit the observed data. However, it is well known that an increase in the number of parameters results in models more susceptible to over-fitting. The higher complexity of ML algorithms has a similar effect as regards over-fitting. Hence, model accuracy must be computed properly.

It has been proven that the prediction accuracy of a data-based model, measured on the training data, is an overestimation of its overall performance [51]. Therefore, part of the available data needs to be reserved for model accuracy estimation (validation set). In principle, any sub-setting of the available data into training and validation sets is acceptable, provided the data are independent and identically distributed (i.i.d.).

This is not the case in dam monitoring series, which are time-dependent in general. Moreover, the amount of available data is limited, which in turn limits the size of the training and validation sets. Ideally, both should cover the whole range of variation of the most influential variables.
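Because the records are time-dependent, one common option is to split them chronologically rather than at random. The following is a minimal sketch of such a split; the function name `chronological_split`, the `(year, value)` record layout and the toy series are assumptions made for illustration only.

```python
def chronological_split(records, split_year):
    """Split time-stamped records into training and validation sets.

    records: iterable of (year, value) tuples (hypothetical layout).
    Records dated before split_year go to training and the rest to
    validation, so no validation record precedes a training record.
    """
    train = [r for r in records if r[0] < split_year]
    validation = [r for r in records if r[0] >= split_year]
    return train, validation


# Toy series: one reading per year from 1990 to 1999.
records = [(year, 0.1 * (year - 1990)) for year in range(1990, 2000)]
train, validation = chronological_split(records, split_year=1996)
```

A split of this kind does not guarantee that both sets cover the full range of the most influential variables, so that condition still needs to be checked separately.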

On another note, a minimum amount of data is necessary to build a predictive model with appropriate generalisation ability. Some authors estimate the minimum period to be 5 [5] to 10 years [52], though it is case-dependent.

A further problem for the application of data-based models is that transient phenomena take place during the first years of operation [4]. Therefore, data from that period should be analysed in detail, since it might not be representative of subsequent dam performance.

In spite of these issues, many authors use the training set for computing model generalisation capability, or use a small sample for validation. This raises doubts about the actual accuracy of these models, in particular of those more strictly data-based, such as NN or SVM.

The deviation between predictions and observations is essential for dam behaviour assessment [4]. Moreover, the prediction intervals are typically based on some multiple of the standard deviation of the residuals. Hence, the proper estimation of model accuracy, over an adequate validation set, is fundamental from a practical viewpoint.
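As an illustration of how such prediction intervals might be derived, the sketch below estimates the standard deviation of the residuals on a validation set and flags readings that fall outside a band of `k` standard deviations around the prediction. The multiple `k = 2`, the function names and the data are assumptions, not values taken from the studies reviewed.

```python
import statistics


def residual_std(observed, predicted):
    """Sample standard deviation of the residuals (observed - predicted)."""
    residuals = [o - p for o, p in zip(observed, predicted)]
    return statistics.stdev(residuals)


def is_outside_interval(observation, prediction, sigma, k=2.0):
    """True if the reading deviates from the prediction by more than k * sigma."""
    return abs(observation - prediction) > k * sigma


# Hypothetical validation data, used only to estimate sigma.
obs_val = [10.0, 10.4, 9.8, 10.1, 9.7]
pred_val = [10.1, 10.2, 10.0, 10.0, 9.9]
sigma = residual_std(obs_val, pred_val)

anomalous = is_outside_interval(11.0, 10.0, sigma)   # large deviation
normal = is_outside_interval(10.1, 10.0, sigma)      # small deviation
```

If `sigma` were computed on the training set instead, the band would tend to be too narrow, which is the practical consequence of the overestimated accuracy discussed above.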

This topic is covered in depth in Chapter 5.

2.3.4 Practical implementation

Despite the increasing amount of literature on the use of advanced data-based tools, very few examples described their practical integration in dam safety analysis. The vast majority were limited to the model accuracy assessment, by quantifying the model error with respect to the actual measured data.

The information provided by reliable automated systems, based on highly accurate models, can be a great support for decision making regarding dam safety [3], [2].

To achieve that goal, the outcome of the predictive model must be transformed into a set of rules that determine whether the system should issue a warning. The actions to be taken need to be defined on a case-by-case basis, taking into consideration the relevance of each device as regards the overall dam safety [4].

Actually, an overall analysis of the most representative instruments is recommended, to identify (and discard) any isolated reading error. Cheng and Zheng [43] proposed a procedure for calculating normal operating thresholds (“control limits”), and a qualitative classification of potential anomalies: a) extreme environmental variable values, b) global structure damage, c) instrument malfunctions and d) local structure damage.

A more accurate analysis could be based on the consideration of the major potential modes of failure to obtain the corresponding behaviour patterns and an estimate of how they would be reflected in the monitoring data. Mata et al. [53] employed this idea to develop a system that takes the measurements of several devices and classifies them as corresponding to a normal or an accidental situation. This scheme can be easily implemented in an automatic system, though it requires a detailed analysis of the possible failure modes, and their numerical simulation to provide data with which to train the classifier.

2.4 Conclusions

There is a growing interest in the application of innovative tools in dam monitoring data analysis. Although only HST is fully implemented in engineering practice, the number of publications on the application of other methods has increased considerably in recent years, especially NNs.

It seems clear that the models based on ML algorithms can offer more accurate estimates of the dam behaviour than the HST method in many cases. In general, they are more suitable to reproduce non-linear effects and complex interactions between input variables and dam response.

However, most of the published works refer to specific case studies, certain dam typologies or particular outputs. Many focus on radial displacements in arch dams, although this typology represents roughly 5% of dams in operation worldwide.

A useful data-based algorithm should be versatile enough to cope with the variety of situations that arise in dam safety: different typologies, outputs, quality and volume of data available, among others. Data-based techniques should be capable of dealing with missing values and be robust to reading errors.

These tools must be employed rigorously, given their relatively high number of parameters and flexibility, which make them susceptible to over-fitting the training data. It is thus essential to check their generalisation capability on an adequate validation data set, not used for fitting the model parameters.

The main limitation of these methods is their inability to extrapolate, i.e., to generate accurate predictions outside the range of variation of the training data. Therefore, before applying these models for predicting the dam response in a given situation, it should be checked whether the load combination under consideration lies within the values of the input variables in the training data set.
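The check described above can be sketched as a per-variable range test. The helper names and the numerical values below are hypothetical; the variable codes merely follow the style used for the predictors in this work.

```python
def training_ranges(training_inputs):
    """Return {variable: (min, max)} over a list of input dictionaries."""
    names = training_inputs[0].keys()
    return {
        n: (min(r[n] for r in training_inputs), max(r[n] for r in training_inputs))
        for n in names
    }


def out_of_range_variables(load_case, ranges):
    """List the variables of a load case that fall outside the training range."""
    return [n for n, (lo, hi) in ranges.items() if not lo <= load_case[n] <= hi]


# Hypothetical training inputs (reservoir level in m.a.s.l., temperature in degrees C).
training_inputs = [
    {"Level": 600.0, "Tair": 5.0},
    {"Level": 625.0, "Tair": 22.0},
    {"Level": 612.0, "Tair": 14.0},
]
ranges = training_ranges(training_inputs)
flagged = out_of_range_variables({"Level": 631.0, "Tair": 10.0}, ranges)
```

A test of this kind only detects values outside the range of each variable taken separately. A load combination may lie within every individual range and still be unlike anything in the training data (e.g. a high reservoir level together with a very low temperature), so the check is necessary but not sufficient.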

From a practical viewpoint, data-based models should also be user-friendly and easily understood by civil engineering practitioners, typically unfamiliar with computer science, who have the responsibility for decision making.

Finally, two overall conclusions can be drawn from the review:

- ML techniques can be highly valuable for dam safety analysis, though some issues remain unsolved.

- Regardless of the technique used, engineering judgement based on experience is critical for building the model, for interpreting the results, and for decision making with regard to dam safety.

3 Algorithm Selection

3.1 Introduction

In view of the conclusions of the literature review, a set of ML algorithms were selected for a detailed comparative analysis. The main features were already known, but there was a need for testing their appropriateness to build dam behaviour models.

A selection of algorithms was applied to a practical case study, and the results were compared. Specifically, the following techniques were considered: random forests (RF), boosted regression trees (BRT), support vector machines (SVM) and multivariate adaptive regression splines (MARS). Both HST and NN were also used for comparison purposes. Similar analyses had been previously performed in other fields of engineering, such as the prediction of urban water demand [54].

3.2 Case study

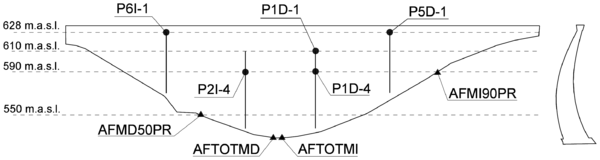

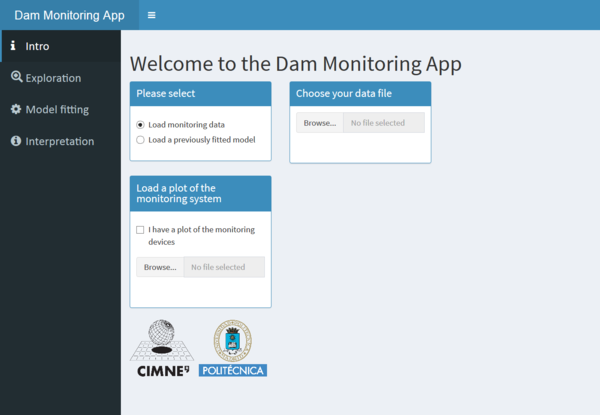

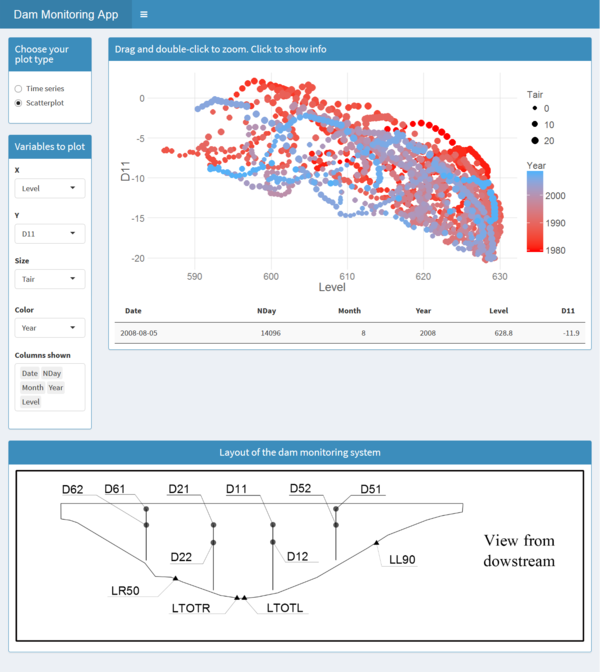

The data used for the study correspond to La Baells dam. It is a double curvature arch dam, with a height of 102 m, which entered into service in 1976. The monitoring system records the main indicators of the dam performance: displacement, temperature, stress, strain and leakage. The data were provided by the Catalan Water Agency (Agencia Catalana de l'Aigua, ACA), the dam owner, for research purposes. Among the available records, the study focused on 14 variables: 10 correspond to displacements measured by pendulums (five radial and five tangential), and four to leakage flow. Several variables of different types were considered in order to obtain more reliable conclusions. The details of the available data are included in the article, whereas the location of each monitoring device is depicted in Figure 2.

Figure 2: Location of the monitoring devices.

The specific features of dam monitoring data analysis were taken into account to design the experiment. In all cases, approximately 40% of the records (from 1998 to 2008) were left out as the testing set. This is a large proportion compared with previous studies, which typically leave 10-20 % of the available data for testing [21], [18], [44]. A larger test set was selected in order to increase the reliability of the results.

On another note, it is well known that the early years of operation often correspond to a transient state, non-representative of the quasi-stationary response afterwards [4]. In such a scenario, using those years for training a predictive model would be inadvisable. This raises the question of which training set size achieves the best accuracy ([52], [6]). The available time series for La Baells dam span from 1979 to 2008. To analyse this issue, four different training sets were chosen to fit each model, spanning five, 10, 15 and 18 years of records. In all cases, the training data used correspond to the closest time period to the test set (e.g. periods 1993-1997, 1988-1997, 1983-1997, and 1979-1997, respectively).

The predictor set included inputs related to the environmental actions: air temperature and hydrostatic load. A time-dependent term was also added, to account for possible variations in dam behaviour over the period of analysis. Several variables derived from those actually measured at the dam site (reservoir level and the average daily temperature) were also included. They are listed in Table 1.

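| Code | Group | Type | Period (days) |
|---|---|---|---|
| Level | Hydrostatic load | Original | - |
| Lev007 | Hydrostatic load | Moving average | 7 |
| Lev014 | Hydrostatic load | Moving average | 14 |
| Lev030 | Hydrostatic load | Moving average | 30 |
| Lev060 | Hydrostatic load | Moving average | 60 |
| Lev090 | Hydrostatic load | Moving average | 90 |
| Lev180 | Hydrostatic load | Moving average | 180 |
| Tair | Air temperature | Moving average | 1 |
| Tair007 | Air temperature | Moving average | 7 |
| Tair014 | Air temperature | Moving average | 14 |
| Tair030 | Air temperature | Moving average | 30 |
| Tair060 | Air temperature | Moving average | 60 |
| Tair090 | Air temperature | Moving average | 90 |
| Tair180 | Air temperature | Moving average | 180 |
| Rain | Rainfall | Accumulated | 1 |
| Rain030 | Rainfall | Accumulated | 30 |
| Rain060 | Rainfall | Accumulated | 60 |
| Rain090 | Rainfall | Accumulated | 90 |
| Rain180 | Rainfall | Accumulated | 180 |
| NDay | Time | Original | - |
| Year | Time | Original | - |
| Month | Season | Original | - |
| n010 | Hydrostatic load | Rate of variation | 10 |
| n020 | Hydrostatic load | Rate of variation | 20 |
| n030 | Hydrostatic load | Rate of variation | 30 |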
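The derived predictors of Table 1 can be illustrated with a short sketch. It assumes a daily series, a trailing moving average that includes the current day, and a rate of variation defined as the change over the period divided by its length; the exact definitions used in the study are not stated here, so these are assumptions, as are the function names and the toy data.

```python
def moving_average(series, period):
    """Trailing mean over the last `period` values (None until enough data)."""
    out = []
    for i in range(len(series)):
        if i + 1 < period:
            out.append(None)
        else:
            window = series[i + 1 - period : i + 1]
            out.append(sum(window) / period)
    return out


def rate_of_variation(series, period):
    """Change with respect to the value `period` steps earlier, per step."""
    out = []
    for i in range(len(series)):
        if i < period:
            out.append(None)
        else:
            out.append((series[i] - series[i - period]) / period)
    return out


# Toy daily reservoir levels (m.a.s.l.); short periods keep the example small.
level = [600.0, 601.0, 603.0, 606.0, 610.0]
lev003 = moving_average(level, 3)
n002 = rate_of_variation(level, 2)
```

Accumulated rainfall over a period could be obtained in the same way by replacing the mean of the window with its sum.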

The variable selection was performed according to dam engineering practice. Both displacements and leakage are strongly dependent on hydrostatic load. Air temperature is well known to affect displacements, in the form of a delayed action. It may also influence leakage flow (as Seifart et al. reported for Itaipú Dam [55]), although this is uncertain (Simon et al. observed no dependency [7]). Both the air temperature and some moving averages were included in the analysis.

A relatively large set of predictors was used to capture every potential effect, overlooking the high correlation among some of them. The comparison sought to be as unbiased as possible, thus all the models were built using the same inputs (1) and data pre-processing (only normalisation was performed when necessary). While it is acknowledged that this procedure may favour the techniques that better handle noisy or scarcely important variables, theoretically all learning algorithms should discard them automatically during the model fitting.

(1) with the exceptions of MARS and HST, as explained in the article

3.3 Results and discussion

Table 2 contains the mean absolute error (MAE) for each target and model, computed as:

$$\mathrm{MAE} = \frac{1}{N} \sum_{i=1}^{N} \left| y_i - F(x_i) \right| \qquad (3.1)$$

where $N$ is the size of the training (or test) set, $y_i$ are the observed outputs and $F(x_i)$ the predicted values.
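For reference, the MAE can be computed with a few lines of code. The function name and the numbers are illustrative only.

```python
def mean_absolute_error(observed, predicted):
    """Mean of the absolute differences between observations and predictions."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(y - f) for y, f in zip(observed, predicted)) / len(observed)


# Absolute errors are 0.5, 0.0 and 1.0, so the mean is 0.5.
mae = mean_absolute_error([1.0, 2.0, 4.0], [1.5, 2.0, 3.0])
```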

| Type | Target | RF | BRT | NN | SVM | MARS | HST |
|---|---|---|---|---|---|---|---|
| Radial (mm) | P1DR1 | 1.70 | 0.93 | 0.58 | 0.75 | 2.32 | 1.35 |
| Radial (mm) | P1DR4 | 1.05 | 0.71 | 0.68 | 0.76 | 1.50 | 1.37 |
| Radial (mm) | P2IR4 | 0.94 | 0.97 | 1.02 | 1.05 | 0.85 | 1.12 |
| Radial (mm) | P5DR1 | 0.86 | 0.70 | 0.64 | 1.35 | 0.89 | 0.88 |
| Radial (mm) | P6IR1 | 1.47 | 0.69 | 0.72 | 0.60 | 1.67 | 0.91 |
| Tangential (mm) | P1DT1 | 0.24 | 0.25 | 0.52 | 0.35 | 0.55 | 0.47 |
| Tangential (mm) | P1DT4 | 0.15 | 0.15 | 0.18 | 0.19 | 0.22 | 0.20 |
| Tangential (mm) | P2IT4 | 0.13 | 0.11 | 0.13 | 0.12 | 0.14 | 0.10 |
| Tangential (mm) | P5DT1 | 0.40 | 0.22 | 0.19 | 0.38 | 0.47 | 0.18 |
| Tangential (mm) | P6IT1 | 0.28 | 0.27 | 0.39 | 0.94 | 0.39 | 0.51 |
| Leakage (l/min) | AFMD50PR | 1.24 | 0.90 | 2.11 | 4.25 | 1.74 | 2.24 |
| Leakage (l/min) | AFMI90PR | 0.18 | 0.15 | 0.07 | 0.33 | 0.25 | 0.28 |
| Leakage (l/min) | AFTOTMD | 1.82 | 1.60 | 3.04 | 5.38 | 1.85 | 2.60 |
| Leakage (l/min) | AFTOTMI | 0.91 | 0.42 | 0.83 | 1.49 | 1.49 | 1.11 |

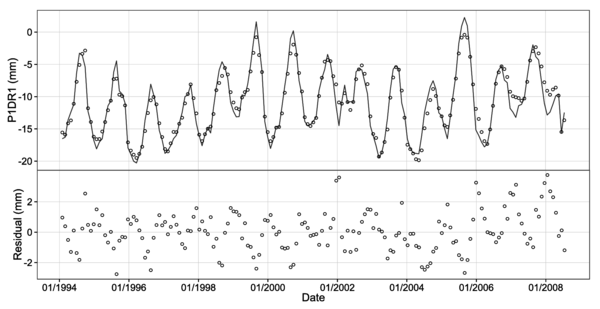

It can be seen that models based on ML techniques mostly outperform the reference HST method. NN models yield the highest accuracy for radial displacements, whereas BRT models are better on average both for tangential displacements and leakage flow. It should be noted that the MAE for some tangential displacements is close to the measurement error of the device.

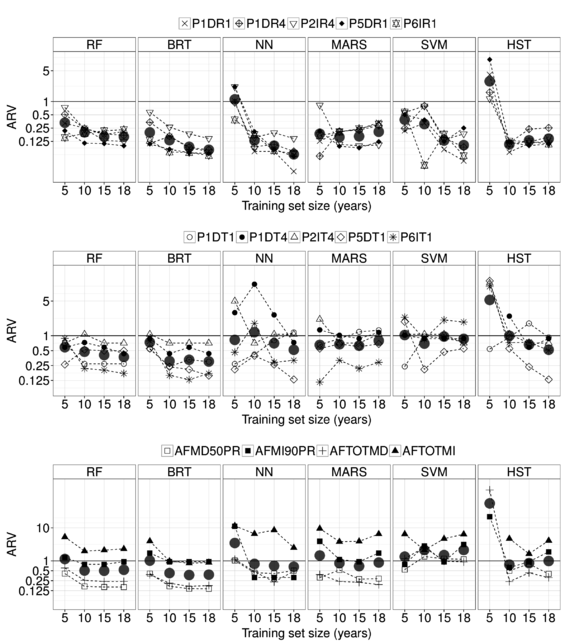

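The effect of the training set size is depicted in Figure 3, where the model accuracy is measured in terms of the average relative variance (ARV) [56]:

$$\mathrm{ARV} = \frac{\sum_{i=1}^{N} \left( y_i - F(x_i) \right)^2}{\sum_{i=1}^{N} \left( y_i - \bar{y} \right)^2} = \frac{\mathrm{MSE}}{\sigma^2} \qquad (3.2)$$

where $\bar{y}$ is the mean of the $N$ observed outputs $y_i$, and $F(x_i)$ are the predicted values. Given that ARV denotes the ratio between the mean squared error (MSE) and the variance ($\sigma^2$), it accounts both for the magnitude and the deviation of the target variable. Furthermore, a model with ARV=1 is as accurate a prediction as the mean of the observed outputs.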
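The ARV can be illustrated in the same way as the MAE. The sketch below also verifies the property stated above: predicting the mean of the observations yields ARV = 1. Names and data are illustrative assumptions.

```python
def average_relative_variance(observed, predicted):
    """Ratio of the squared prediction error to the squared deviation from the mean."""
    mean_obs = sum(observed) / len(observed)
    sse = sum((y - f) ** 2 for y, f in zip(observed, predicted))
    sst = sum((y - mean_obs) ** 2 for y in observed)
    return sse / sst


observed = [1.0, 2.0, 3.0, 4.0]
arv_mean = average_relative_variance(observed, [2.5] * 4)            # mean predictor
arv_good = average_relative_variance(observed, [1.1, 1.9, 3.2, 3.8])  # close fit
```

Because the numerator and denominator share the same number of observations, the factor $1/N$ cancels and the sums can be used directly.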

Although the use of the whole training set is optimal for six out of 14 targets, significant improvements are reported in some cases by eliminating some of the early years. Surprisingly, for two of the outputs, the lowest MAE corresponds to a model trained over five years, which in principle was assumed to be too small a training set. MARS is especially sensitive to the size of the training data. The MARS models trained on five years improve the accuracy for P1DR4 and P6IT1 by 13.3 % and 14.8 % respectively.

These results strongly suggest that it is advisable to select carefully the most appropriate training set size. This should be done by leaving an independent test set.

3.4 Conclusions

It was found that the accuracy of currently applied methods for predicting dam behaviour can be substantially improved by using ML techniques.

The sensitivity analysis with respect to the training set size shows that removing the early years of the dam life cycle can be beneficial. In this work, it has resulted in a decrease in MAE in some cases (up to 14.8 %). Hence, the size of the training set should be considered as an extra parameter to be optimised during training.

Some of the techniques analysed (MARS, SVM, NN) are more susceptible to further tuning than others (RF, BRT), given that they have more hyper-parameters and are more sensitive to the presence of correlated or uninformative inputs. As a consequence, the former might have a larger margin for improvement than the latter.

However, both detailed tuning and careful variable selection increase the computational cost and complicate the analysis. Since the objective is the extension of these techniques for the prediction of a large number of variables of many dams, the simplicity of implementation is an aspect to be considered in model selection.

In this sense, BRT proved to be the best choice: it was the most accurate for five of the 14 targets; easy to implement; robust with respect to the training set size; able to consider any kind of input (numeric, categorical or discrete), and not sensitive to noisy and low-relevance predictors.

4 Model Interpretation

4.1 Introduction

As a result of the comparative analysis, BRT was selected as the most appropriate tool to achieve the research objectives. In this stage, the possibilities of interpretation were investigated to:

- Identify the effect of each external variable on the dam behaviour

- Detect the temporal evolution of the dam response

- Provide meaningful information to draw conclusions about dam safety

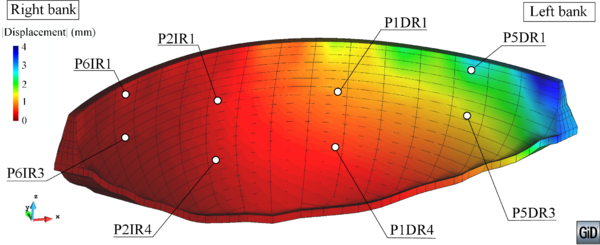

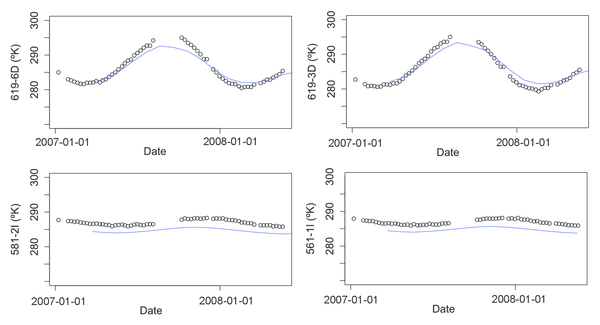

For this purpose, the same data from La Baells Dam were employed, though the analysis focused on 12 variables: 8 corresponded to radial displacements measured by pendulums (along the upstream-downstream direction), and four to leakage flow. The location of each monitoring device is depicted in Figure 4.1.

Geometry and location of the targets considered for model interpretation. Left: view from downstream. Right: highest cross-section.

Since BRT models automatically discard those predictors not associated with the output [57], the initial model considered the same inputs as described in section 3. All the calculations were performed on a training set covering the period 1980-1997, and the model accuracy was assessed for a validation set corresponding to the years 1998-2008.

4.2 Methods

4.2.1 Boosted regression trees

BRT models are built by combining two algorithms: a set of single models is fitted by means of decision trees [58], and their outputs are combined to compute the overall prediction using boosting [59]. For the sake of completeness, a short description of both techniques follows, although excellent introductions can be found in [60], [61], [62], [20].

4.2.1.1 Regression trees

Regression trees were first proposed as statistical models by Breiman et al. [58]. They are based on the recursive division of the training data in groups of “similar” cases. The output of a regression tree is the mean of the output variable for the observations within each group.

When more than one predictor is considered (as usual), the best split point for each is computed, and the one which results in the greatest error reduction is selected. As a consequence, non-relevant predictors are automatically discarded by the algorithm, as the error reduction for a split in a low-relevance predictor will generally be lower than that in an informative one.
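The split selection can be sketched for a single predictor as follows. This is a simplified illustration under stated assumptions: candidate thresholds are taken at the observed values (practical implementations typically use midpoints), the error is the sum of squared deviations from the group mean, and all names and data are hypothetical.

```python
def sse(values):
    """Sum of squared deviations from the mean (0 for an empty group)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def best_split(x, y):
    """Return (threshold, error_reduction) of the best binary split on x."""
    parent_error = sse(y)
    best = (None, 0.0)
    for threshold in sorted(set(x))[:-1]:  # the largest value cannot split
        left = [yi for xi, yi in zip(x, y) if xi <= threshold]
        right = [yi for xi, yi in zip(x, y) if xi > threshold]
        reduction = parent_error - sse(left) - sse(right)
        if reduction > best[1]:
            best = (threshold, reduction)
    return best


# The output jumps when `informative` exceeds 3; `noisy` is unrelated to it.
y = [1.0, 1.2, 0.9, 3.1, 3.0, 2.9]
informative = [1, 2, 3, 4, 5, 6]
noisy = [3, 6, 1, 5, 2, 4]
split_inf = best_split(informative, y)
split_noise = best_split(noisy, y)
```

In this toy case the informative predictor achieves a much larger error reduction than the noisy one, so a tree comparing both would split on the former, which is the mechanism by which uninformative predictors are left out.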

Other interesting properties of regression trees are:

- They are robust against outliers.

- They require little data pre-processing.

- They can handle numerical and categorical predictors.

- They are appropriate to model non-linear relations, as well as interaction among predictors.

By contrast, regression trees are unstable, i.e., small variations in the training data lead to notably different results. Also, they are not appropriate for certain input-output relations, such as a straight line [62].

4.2.1.2 Boosting

Boosting is a general scheme to build ensemble prediction models [59]. It is based on the generation of a (frequently high) number of simple models (also referred to as “weak learners”) on altered versions of the training data. The overall prediction is computed as a weighted sum of the output of each model in the ensemble. The rationale behind the method is that the average of the prediction of many simple learners can outperform that from a complex one [63].

The idea is to fit each learner to the residual of the previous ensemble. The main steps of the original boosting algorithm when using regression trees and the squared-error loss function can be summarised as follows [64]:

1. Start predicting with the average of the observations (constant): $F_0(x) = \bar{y}$

2. For $m = 1$ to $M$:

   - (a) Compute the prediction error on the training set: $\tilde{y}_i = y_i - F_{m-1}(x_i)$

   - (b) Draw a random sub-sample of the training set ($S_m$)

   - (c) Consider $S_m$ and fit a new regression tree $f_m(x)$ to the residuals of the previous ensemble: $f_m(x_i) \approx \tilde{y}_i,\ i \in S_m$

   - (d) Update the ensemble: $F_m(x) = F_{m-1}(x) + f_m(x)$

It is generally accepted that this procedure is prone to over-fitting, because the training error decreases with each iteration [64]. To overcome this problem, it is convenient to add a regularisation parameter $\nu$, so that step (d) turns into:

$$F_m(x) = F_{m-1}(x) + \nu \, f_m(x)$$

Some empirical analyses showed that relatively low values of $\nu$ (below 0.1) greatly improve generalisation capability [59]. In practice, it is common to set the regularisation parameter and consider a number of trees such that the training error stabilises [60]. Subsequently, a certain number of terms are pruned using, for example, cross-validation. This is the approach employed in this work, with a fixed $\nu$ and a maximum of 1,000 trees. It was verified that the training error reached the minimum before adding the maximum number of trees.
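The boosting scheme described above, including the regularisation parameter, can be sketched in a few lines. This is not the implementation used in this work (which relied on R); it is a minimal illustration restricted to a single predictor and to trees of depth 1, and the parameter values (`n_trees`, `shrinkage`, `subsample`) and the toy data are assumptions.

```python
import random


def fit_stump(x, residuals):
    """Fit a depth-1 regression tree to the residuals on one predictor."""
    best = None
    for threshold in sorted(set(x))[:-1]:
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    if best is None:  # no valid split: predict the mean residual
        mean = sum(residuals) / len(residuals)
        return lambda xi: mean
    _, threshold, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= threshold else right_mean


def boost(x, y, n_trees=200, shrinkage=0.1, subsample=0.5, seed=0):
    """Return a prediction function built by boosting stumps."""
    rng = random.Random(seed)
    f0 = sum(y) / len(y)  # initial constant prediction
    trees = []

    def predict(xi):
        return f0 + shrinkage * sum(tree(xi) for tree in trees)

    n_sub = max(2, int(subsample * len(x)))
    for _ in range(n_trees):
        # (a) residuals of the current ensemble on the training set
        residuals = [yi - predict(xi) for xi, yi in zip(x, y)]
        # (b) random sub-sample of the training set
        idx = rng.sample(range(len(x)), n_sub)
        # (c) new tree fitted to the residuals of the sub-sample
        tree = fit_stump([x[i] for i in idx], [residuals[i] for i in idx])
        # (d) update of the ensemble (shrinkage is applied in predict)
        trees.append(tree)
    return predict


# Toy non-linear relation.
x = [i / 10 for i in range(30)]
y = [xi ** 2 for xi in x]
model = boost(x, y)
train_mae = sum(abs(yi - model(xi)) for xi, yi in zip(x, y)) / len(x)
baseline_mae = sum(abs(yi - sum(y) / len(y)) for yi in y) / len(y)
```

With several predictors, step (c) would compare the best split of each one and keep the one with the greatest error reduction. The training error obtained here says nothing about generalisation, which is why the number of trees is chosen on data not used for fitting.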

Five-fold cross-validation was applied to determine the number of trees in the final ensemble. The process was repeated using trees of depth 1 and 2 (interaction.depth), and the most accurate for each target was selected. The rest of the parameters were set to their default values [65].

All the calculations were performed in the R environment [66].

Several procedures to interpret ML models, often termed “black box” models, can be found in the literature. In this work, the relative influence (RI) of each predictor and the partial dependence plots (PDP) were employed.

4.2.2 Relative influence (RI)

BRT models are robust against the presence of uninformative predictors, as they are discarded during the selection of the best split. Moreover, it seems reasonable to think that the most relevant predictors are more frequently selected during training. In other words, the relative influence (RI) of each input is proportional to the frequency with which it appears in the ensemble. Friedman [59] proposed a formulation to compute a measure of RI for BRT models based on this intuition. Both the relative presence and the error reduction achieved are considered in the computation. The results are normalised so that they add up to 100.
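The intuition behind the RI can be illustrated with a simplified computation: the error reductions of all the splits in the ensemble are accumulated per predictor and normalised to add up to 100. This sketch is an assumption-laden simplification and not necessarily identical to Friedman's formulation; the function name and the numbers are hypothetical.

```python
from collections import defaultdict


def relative_influence(splits):
    """Accumulate error reductions per predictor and normalise to 100.

    splits: iterable of (predictor_name, error_reduction) pairs, one per
    split in the ensemble (hypothetical representation).
    """
    totals = defaultdict(float)
    for name, reduction in splits:
        totals[name] += reduction
    grand_total = sum(totals.values())
    return {name: 100.0 * value / grand_total for name, value in totals.items()}


# Hypothetical splits gathered from a small ensemble.
splits = [("Level", 6.0), ("Tair090", 3.0), ("Level", 2.0), ("NDay", 1.0)]
ri = relative_influence(splits)
```

A predictor that is never selected for a split does not appear in the result, which corresponds to a relative influence of zero.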

Based on this measurement, the most influential variables were identified for each output, and the results were interpreted in relation to dam behaviour. In order to facilitate the analysis, the RI was plotted as word clouds [67]. These plots resemble histograms, with the advantage of being more appropriate to visualise a greater set of variables. The code representing each predictor was displayed with a font size proportional to its relative influence with the library “wordcloud” [68].

Furthermore, two degrees of variable selection were applied, based on the RI of each predictor. First, a BRT model (M1) was trained with all the variables considered (section 5.2.3). Second, the inputs with an RI above a threshold were selected to build a new model (M2). This criterion is heuristic and based on the 1-SE rule proposed by Breiman et al. [58]. Finally, a model with three predictors was generated (M3), featuring the most relevant variable of each group: temperature, time and reservoir level for radial displacements, and rainfall, time and level for leakage flows.

These three versions were generated to analyse the effect of the presence of uninformative variables in the predictor set. Moreover, the simplest model facilitates the analysis, as the effect of each action is concentrated in one single predictor.

In this sense, the temporal evolution is particularly relevant for dam safety evaluation, as it can help to identify a progressive deterioration of the dam or the foundation, which might result in a serious fault if not corrected.

4.2.3 Partial dependence plots

Multi-linear regression models, and HST in particular, are based on the assumption that the input variables are statistically independent, so the prediction is computed as the sum of their contributions. As a result, the effect of each predictor on the response can be easily identified by plotting its contribution against its value.

This method is not appropriate for BRT models, as interactions among predictors are accounted for. While this results in more flexibility, it also implies that the identification of the relation between predictors and response is not straightforward.

Nonetheless, it is possible to examine the predictor-response relationship by means of the partial dependence plots [59]. This tool can be applied to any black box model, as it is based on the marginal effect of each predictor on the output, as learned by the model. Let $x_j$ be the variable of interest. A set of $K$ equally spaced values are defined along its range: $x_{jk},\ k = 1, \dots, K$. For each of those values, the average of the model predictions is computed:

$$\bar{F}(x_{jk}) = \frac{1}{N} \sum_{i=1}^{N} F\left( x_{jk}, x_{\bar{j}i} \right) \qquad (4.1)$$

where $F$ is the fitted model, $N$ is the number of observations and $x_{\bar{j}i}$ is the value for all inputs other than $x_j$ for the observation $i$.

Similar plots can be obtained for interactions among inputs: the average prediction is computed for couples of fixed $x_{jk}$, where $j$ takes two different values. Hence, the results can be plotted as a three-dimensional surface (section 4.3.3). In this work, partial dependence plots were restricted to the simplest model, which considered three predictors. Therefore, three 3D plots allowed investigating the pairwise interactions among all the inputs considered in the simplified model.
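The univariate partial dependence can be sketched for any model exposed as a function. In the example below, the model, the data layout (a list of dictionaries) and all names are hypothetical; a linear toy model is used so that the result can be verified by hand.

```python
def partial_dependence(model, data, variable, n_points=5):
    """Average model prediction for equally spaced values of one input.

    model: callable taking a dict of inputs and returning a number.
    data: list of dicts with the observed inputs.
    Returns (grid, curve), where curve[k] is the mean prediction obtained
    when `variable` is fixed at grid[k] in every observation.
    """
    values = [row[variable] for row in data]
    lo, hi = min(values), max(values)
    step = (hi - lo) / (n_points - 1)
    grid = [lo + k * step for k in range(n_points)]
    curve = []
    for value in grid:
        predictions = [model({**row, variable: value}) for row in data]
        curve.append(sum(predictions) / len(predictions))
    return grid, curve


def toy_model(row):
    """Hypothetical fitted model: linear in both inputs."""
    return 2.0 * row["Level"] - 0.5 * row["Tair"]


data = [
    {"Level": 0.0, "Tair": 10.0},
    {"Level": 1.0, "Tair": 20.0},
    {"Level": 2.0, "Tair": 30.0},
]
grid, curve = partial_dependence(toy_model, data, "Level", n_points=3)
```

For this additive toy model the curve is a straight line with slope 2, shifted by the average contribution of the temperature. The bivariate case follows the same pattern, fixing two inputs at a time over a two-dimensional grid.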

4.2.4 Overall procedure

The complete process comprised the following steps:

- Fit a BRT model on the training data with the variables in Table 1 (M1).

- Compute the RI and generate the word cloud.

- Select the most relevant predictors with the 1-SE rule [58] and fit a new BRT model (M2).

- Build a simple BRT model (M3) with the most influential variable of each group (temperature, level and time for displacements, and rainfall, level and time for leakage).

- Generate the univariate and bivariate partial dependence plots for the simplest model.

- Compute the goodness of fit for each model in both the training and the validation sets.

4.3 Results

4.3.1 Effect of input selection

Table 3 contains the error indices for each target. For those models with variable selection, the predictors are also listed. The results show that BRT efficiently discarded irrelevant inputs, since the fitting accuracy was similar for each version in most cases (i.e., the presence of uninformative predictors did not damage the fitting accuracy).

| Target | Train MAE | Train ARV | Validation MAE | Validation ARV | Inputs |
|---|---|---|---|---|---|
| P1DR1 | 0.64 | 0.03 | 0.91 | 0.08 | All |
| P1DR1 | 0.68 | 0.03 | 0.81 | 0.06 | Tair090,Level,NDay,Lev007,Lev014 |
| P1DR1 | 0.69 | 0.03 | 0.78 | 0.06 | NDay,Tair090,Level |
| P1DR4 | 0.46 | 0.03 | 0.65 | 0.08 | All |
| P1DR4 | 0.50 | 0.03 | 0.66 | 0.08 | Level,Tair090,NDay,Lev007,Lev014,Lev030 |
| P1DR4 | 0.51 | 0.03 | 0.67 | 0.08 | NDay,Tair090,Level |
| P2IR1 | 0.66 | 0.03 | 1.03 | 0.09 | All |
| P2IR1 | 0.85 | 0.05 | 1.09 | 0.09 | Tair090,Level,Lev007,Lev014 |
| P2IR1 | 0.71 | 0.04 | 0.98 | 0.08 | NDay,Tair090,Level |
| P2IR4 | 0.48 | 0.05 | 0.90 | 0.14 | All |
| P2IR4 | 0.61 | 0.06 | 0.93 | 0.14 | Level,Tair090,Lev007,Lev014,Lev030 |
| P2IR4 | 0.53 | 0.06 | 0.94 | 0.16 | NDay,Tair090,Level |
| P5DR1 | 0.66 | 0.05 | 0.82 | 0.08 | All |
| P5DR1 | 0.64 | 0.05 | 0.87 | 0.10 | Tair060,Level,Tair030 |
| P5DR1 | 0.83 | 0.08 | 0.93 | 0.11 | NDay,Tair060,Level |
| P5DR3 | 0.25 | 0.03 | 0.47 | 0.21 | All |
| P5DR3 | 0.33 | 0.05 | 0.55 | 0.22 | Tair060,Level,Tair030 |
| P5DR3 | 0.31 | 0.04 | 0.52 | 0.24 | NDay,Tair060,Level |
| P6IR1 | 0.60 | 0.04 | 0.80 | 0.09 | All |
| P6IR1 | 0.65 | 0.05 | 0.78 | 0.08 | Tair060,Tair030,Level,NDay |
| P6IR1 | 0.83 | 0.08 | 0.85 | 0.1 | NDay,Tair060,Level |
| P6IR3 | 0.23 | 0.02 | 0.40 | 0.08 | All |
| P6IR3 | 0.37 | 0.05 | 0.67 | 0.17 | Tair060,Level,Tair030 |
| P6IR3 | 0.29 | 0.03 | 0.43 | 0.09 | NDay,Tair060,Level |
| AFMD50PR | 1.28 | 0.16 | 0.93 | 0.19 | All |
| AFMD50PR | 1.45 | 0.17 | 1.36 | 0.28 | Level,Lev014,Lev007 |
| AFMD50PR | 1.16 | 0.14 | 1.23 | 0.48 | NDay,Rain090,Level |
| AFMI90PR | 0.08 | 0.09 | 0.15 | 0.51 | All |
| AFMI90PR | 0.08 | 0.10 | 0.12 | 0.45 | Lev007,NDay,Level,Lev014,Lev030 |
| AFMI90PR | 0.08 | 0.10 | 0.12 | 0.46 | NDay,Rain030,Lev007 |
| AFTOTMD | 1.64 | 0.15 | 1.67 | 0.37 | All |
| AFTOTMD | 1.87 | 0.19 | 1.73 | 0.45 | Level,Lev007,Lev014 |
| AFTOTMD | 1.69 | 0.18 | 1.97 | 0.52 | NDay,Rain180,Level |
| AFTOTMI | 0.41 | 0.11 | 0.44 | 0.40 | All |
| AFTOTMI | 0.44 | 0.12 | 0.44 | 0.42 | NDay,Lev060,Lev014,Lev007,Lev030,Lev180,Lev090,Level |
| AFTOTMI | 0.54 | 0.18 | 0.46 | 0.60 | NDay,Rain180,Lev060 |

4.3.2 Relative influence

The analysis of the word clouds of RI allowed identifying some interesting features of La Baells dam behaviour. As for the radial displacements (Figure 4.3.2), the thermal inertia was observed as a higher RI for Tair060 and Tair090 than for Tair (which was in fact negligible). By contrast, the reservoir level at the date of the record was always more influential than all the moving averages, which reveals an immediate response of the dam to this load.

Other conclusions derived from Figure 4.3.2 are:

- The thermal inertia was lower near the abutments.

- The RI of the temperature with respect to that of the hydrostatic load increased from the foundation towards the crown, and from the centre to the abutments.

- The dam behaviour is approximately symmetrical.

Word clouds for the radial displacements analysed.

The same analysis for the leakage flows revealed a clearly different behaviour between the right (AFMD50PR and AFTOTMD) and the left margins (AFMI90PR and AFTOTMI). While the former responded mainly to the hydrostatic load, with little inertia, the latter showed a remarkable dependence on time, as well as a greater relevance of several rolling means of reservoir level. Figure 4.3.2 shows the word clouds for the leakage flows.

Word clouds for the leakage measurement locations analysed.

The low inertia with respect to the hydrostatic load suggests that most of the leakage flow comes from the reservoir, while the effect of rainfall is negligible.

4.3.3 Partial dependence plots (PDPs)

The resulting PDPs allowed verifying that the dam “behaved as expected”, in terms of the first question posed by Lombardi. Figure 4.3.3 contains the univariate PDP for P1DR1, which shows that higher hydrostatic load and lower air temperature are associated with displacement towards downstream and vice-versa.

Partial dependence plot for P1DR1. Movement towards downstream corresponds to lower values in the vertical axis, and vice-versa.

Similar plots can be generated in 3D, which allow investigating the pairwise interactions for all the inputs considered (Figure 4.3.3).

3D PDPs for the main acting loads and P1DR1.
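The univariate partial dependence described above can be sketched in a few lines: one input is forced to each value of a grid in every observed record, the other inputs are left as recorded, and the predictions are averaged. This is a minimal illustration under stated assumptions, not the code used in this work: `partial_dependence` and `toy_model` are hypothetical names, the toy model is a hand-written function standing in for a fitted BRT model, and the records are made-up values.

```python
from statistics import mean


def partial_dependence(predict, rows, feature, grid):
    """Average prediction when `feature` is forced to each grid value.

    predict: callable taking a dict of inputs and returning a number
             (stands in for a fitted BRT model; hypothetical here).
    rows:    list of dicts with the observed input records.
    """
    curve = []
    for value in grid:
        # Overwrite one input in every record, keep the others as observed.
        preds = [predict({**row, feature: value}) for row in rows]
        curve.append(mean(preds))
    return curve


def toy_model(x):
    # Assumed toy response: lower values (towards downstream) for higher
    # reservoir level and lower air temperature.
    return -0.5 * (x["Lev"] - 600.0) + 0.2 * x["Tair"]


# Made-up input records (reservoir level in m.a.s.l., air temperature in degrees C).
records = [
    {"Lev": 610.0, "Tair": 5.0},
    {"Lev": 620.0, "Tair": 15.0},
    {"Lev": 630.0, "Tair": 25.0},
]

pd_level = partial_dependence(toy_model, records, "Lev", [600.0, 615.0, 630.0])
```

A bivariate (3D) version follows the same idea, overwriting two inputs at once over a two-dimensional grid.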

The analysis of the leakage flows (Figure 4.3.3) confirmed that the time effect was irrelevant in the right abutment, except for some erratic behaviour in the first two years and in the last three. By contrast, a sharp decrease in leakage flow was revealed around 1983 for both locations in the left abutment, followed by a smaller decrease in later years.

The shape of the effect of the hydrostatic load is approximately exponential, with low influence for reservoir levels below 610 m.a.s.l.

Partial dependence plot for leakage flows.

The PDPs also provide information to answer the second and third questions, by analysing the partial dependence on time. In the particular case of P1DR1, these plots show a step around 1991-1992 over the whole ranges of level and temperature, which might represent some change in dam response (Figures 4.3.3 and 4.3.3). This issue was subject to further verification.

First, an HST model was fitted and similarly interpreted (Figure 4.3.3). The time effect was a linear trend towards downstream, in contrast with the step suggested by the BRT model.

On another note, the average reservoir level in the period 1991-1997 was significantly higher than before 1991, and might be the cause of the step registered in Figures 4.3.3 and 4.3.3: it represents a greater displacement towards downstream in the most recent period, which is consistent with the higher average hydrostatic load.

Contribution of time, temperature and hydrostatic load on P1DR1, as derived from the interpretation of HST.

To clarify the divergence in the results, a new BRT model was fitted to artificial data generated by plugging actual time series of reservoir level into the HST model, while removing the time-dependent terms:

|

The artificial time series data maintain the original reservoir level variation, and thus the higher load in the 1991-1997 period. Figure 4.3.3 contains the partial dependence plot for this BRT model, which clearly shows that the independence of the artificial data with respect to time was correctly captured. This result confirms that the step in the time dependence captured by BRT is not a consequence of the higher hydrostatic load in 1991-1997.

Partial dependence plot for the artificial time-independent data. P1DR1. It should be noted that time influence is negligible.
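The construction of the artificial data can be illustrated with a short sketch. The functional form below is an assumed, generic HST-type expression (a polynomial in reservoir level plus one annual harmonic), not necessarily the one fitted for P1DR1. The coefficients, the reference level, the function name `hst_without_time` and the records are all hypothetical. The point is only that the date is kept among the inputs while the response does not depend on the year.

```python
import math

# Hypothetical coefficients, for illustration only (not fitted to any dam).
COEF = {"intercept": 1.0, "level": [-0.30, -0.01], "sin": 2.0, "cos": -1.5}


def hst_without_time(level, day_of_year, coef=COEF, ref_level=600.0):
    """HST-type response with the time-dependent terms removed.

    Assumed form: polynomial in (level - ref_level) plus one annual harmonic.
    """
    h = level - ref_level
    hydro = sum(c * h ** (p + 1) for p, c in enumerate(coef["level"]))
    season = 2.0 * math.pi * day_of_year / 365.25
    thermal = coef["sin"] * math.sin(season) + coef["cos"] * math.cos(season)
    return coef["intercept"] + hydro + thermal


# (year, day_of_year, reservoir_level): toy records standing in for the
# actual time series of reservoir level.
records = [(1985, 40, 612.0), (1993, 40, 612.0), (1993, 200, 628.0)]

# Artificial training set: real inputs (including the date), time-free response.
artificial = [
    {"year": y, "day": d, "level": lev, "response": hst_without_time(lev, d)}
    for y, d, lev in records
]
```

A model fitted to such data should show a flat partial dependence on time, since two records with the same level and day of the year share the same response regardless of the year.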

4.4 Conclusions

The interpretation of BRT models resulted in meaningful information on dam behaviour and the effect of each input variable. It allowed verifying that the dam response was in agreement with intuition (e.g. higher hydrostatic load generated displacement towards downstream), and isolating the evolution over time.

The observation of the relative influence of each predictor allowed detecting the thermal inertia of the dam, its symmetrical behaviour, as well as the high variation over time for the leakage flows in the left abutment.

Moreover, the analysis of the time effect suggested that partial dependence plots based on BRT models are more effective at identifying performance changes, as they are not constrained by the shape of the regression functions that need to be defined a priori for HST.

5 Anomaly detection

5.1 Introduction

In the preceding sections, the first three questions posed in Chapter 1 were answered: BRT models made it possible to study the dam response to the main loads, the relevance of each of the potential inputs, and the evolution over time. The high accuracy of BRTs implies that the conclusions drawn from the model interpretation are reliable.

However, the main objective of dam safety is to prevent failures, for which anomalies need to be detected at an early stage. This refers to the fourth question: “was any anomaly in the behaviour of the dam detected?” [4]. The capability of predictive models to identify anomalies has been studied much less frequently than their accuracy. Mata et al. [53] developed a model based on linear discriminant analysis for the early detection of developing failure scenarios. This methodology belongs to Type 2 among those defined by Hodge and Austin [69]: the system is trained with both normal and abnormal behaviour data, and classifies new inputs as belonging to one of those categories. The drawback of this approach is that the failure mode must be defined beforehand and simulated with sufficient accuracy to provide the training data. Hence, the system is specific to the failure mode considered.

Jung et al. [70] used a similar approach: abnormal situations were defined based on the discrepancy between model predictions and observed data. They focused on embankment dam piezometer data, and only the reservoir level was considered as an external variable (although they acknowledge that rainfall can also be influential). It is not clear whether this methodology could be applied to other dam typologies or response variables.

Cheng and Zeng [43] presented a methodology based on the definition of some control limits, which depend on the prediction error of a regression model. In addition, they proposed a classification of anomalies based on the trend of the deviation and on how the overall deviance is distributed among the devices considered. It has the advantage of being simultaneously applied to a set of devices, although the case study presented is simple and the test period considered very short (30 days), as compared to the available data (1,555 days).

Other examples of the application of advanced tools together with prediction intervals have been published by Gamse and Oberguggenberger [71], who employed the procedure of probabilistic quality control; Yu et al. [11], based on principal component analysis (PCA); Kao and Loh [47], who used PCA together with neural networks (NN); Li et al. [23], who considered the autocorrelation of the residuals; and Loh et al. [48], who presented models for short and long term prediction.

Most of these works follow a conceptually similar methodology: a prediction model is built, the density function of the residuals is calculated and used to define the prediction intervals, which are applied to detect anomalies. In most cases, the effectiveness is verified by applying the method to a short period of records. As an exception, Jung et al. [70] and Mata et al. [53] used abnormal data obtained from finite element models (FEM).

In this Chapter, the results of the previous stages are implemented in a methodology for early detection of anomalies, with the following innovative features:

- The prediction model is based on boosted regression trees (BRTs), which proved more accurate than other machine learning and statistical tools in previous works [22].

- Causal, non-causal and auto-regressive models are considered and jointly analysed.

- Artificially generated data are taken as reference. They were obtained from a FEM model considering the coupling between thermal and hydrostatic loads. This makes it possible to identify normal and abnormal behaviour, as observed by some authors ([70], [53]). In this work, the FEM results are compared to actually observed data to verify their reliability.

- A methodology is proposed to neglect false anomalies due to the occurrence of extraordinary loads. It is based on the values of the two main actions (thermal and hydrostatic).

- Three types of anomalies are considered, affecting both isolated devices and the whole structure.

- Although radial displacements in an arch dam were selected for the case study, the method can be applied to other dam typologies and response variables. Moreover, it adapts well to different numbers and types of input variables, due to the great flexibility and robustness of BRTs.

The outputs considered correspond to the same radial displacements employed in Chapter 4 (Figure 4.1).

5.2 Methods

5.2.1 Prediction intervals

As mentioned above, most of the published works on the application of data-based models in dam monitoring are limited to the assessment of the model accuracy. However, the main practical utility of these models is the early detection of anomalies, for which it is necessary to compare the predictions with monitoring readings, and verify whether the readings fall within a predefined range around the predictions. If the residuals follow a normal distribution, that range can be defined in terms of the standard deviation of the residuals. For example, Kao and Loh [47] presented the 99% prediction intervals for models based on neural networks, while Jung et al. [70] tested 1, 2 and 3 standard deviations of the residuals as the width of the prediction interval.

Based on the results of a preliminary study [72], the prediction interval was defined from the mean and the standard deviation of the residuals. Special attention was paid to the determination of a realistic residual distribution. It is well known that the accuracy of a machine learning prediction model must be calculated from a data set not used for model fitting [73] (validation set). In the case of time series, this validation set should be more recent in time than the training data, since in practice the model is used for predicting a time period subsequent to the training data [51].
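As a minimal sketch of how such an interval can be used, the snippet below builds a range of the form mean ± k·sd from validation residuals and flags readings whose residual falls outside it. The multiplier `k`, the function names and the residual values are assumptions for illustration only. The residual is taken here as observed minus predicted.

```python
from statistics import mean, stdev


def prediction_interval(residuals, k):
    """Return (lower, upper) bounds for the residual: mean ± k·sd."""
    mu = mean(residuals)
    sd = stdev(residuals)
    return mu - k * sd, mu + k * sd


def is_anomalous(observed, predicted, interval):
    """Flag a reading whose residual falls outside the interval."""
    lower, upper = interval
    residual = observed - predicted
    return residual < lower or residual > upper


# Made-up residuals from a validation set (same units as the response).
validation_residuals = [-0.4, 0.1, 0.3, -0.2, 0.2, 0.0]

# k = 2.0 is an assumed width, chosen only for this example.
interval = prediction_interval(validation_residuals, k=2.0)

ok_reading = is_anomalous(10.3, 10.0, interval)    # residual well inside
odd_reading = is_anomalous(11.0, 10.0, interval)   # residual outside
```

The residuals must come from data not used for fitting; otherwise the interval is too narrow and normal readings are flagged.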

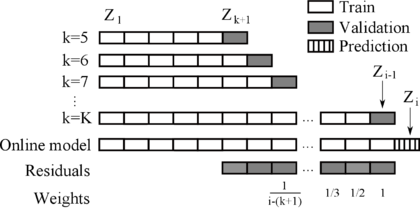

The hold-out cross-validation method meets this requirement, with the most recent data in the hold-out set (Figure 4).

Figure 4: Hold-out cross-validation scheme.

However, this implies discarding the most recent data for the model fit, which are generally the most useful, since they represent the behaviour most similar to that to be predicted (assuming there may be a gradual change in behaviour over time). Moreover, the validation data may be biased if they correspond, for instance, to an especially warm (or cold) period.

To overcome these drawbacks while maintaining a good estimate of the prediction error, an approach based on the hold-out cross-validation method suggested by Arlot and Celisse [51] for non-stationary time series data was employed.

The proposed method takes into account the following specific aspects of dam behaviour: a) changes in the dam-foundation system are generally gradual, and b) dam behaviour models are typically revised annually, coinciding with the update of safety reports.

Let us consider that a behaviour model is to be fitted at the beginning of year $Z_i$, to be applied for anomaly detection during that year. The available data correspond to the years $Z_1, \ldots, Z_{i-1}$, with $Z_1$ being the initial year of dam operation. With the simple hold-out method, a model is fitted with data from years $Z_1, \ldots, Z_{i-2}$, and its accuracy is evaluated on data from $Z_{i-1}$.

In this work, a minimum training period of 5 years was considered. This value was chosen in view of the results of previous studies [22], and the evolution of model accuracy on the reference data, as described in section 5.3.2. Then, an iterative process was followed to reduce potential bias in the loads during $Z_{i-1}$. A set of predictions is generated as follows:

- For $j = 6, \ldots, i-1$:

  - Fit a model $M_j$ trained with the period $Z_1, \ldots, Z_{j-1}$.

  - Compute $res_j$ as the residuals of $M_j$ when predicting year $Z_j$.

  - Compute the mean ($\mu_j$) and standard deviation ($sd_j$) of $res_j$.

At the end of the process, residuals for a set of models are obtained, with the particularity that they are computed over different time periods, always subsequent to the corresponding training set. That is, the number of observations in the training sample increases at each step, and each model is used to predict the following year. The potential bias of some abnormal loads in one year is compensated by averaging, while a realistic prediction error is achieved, since it is always based on preceding data. A similar approach was employed by Herrera et al. to estimate demand in water supply networks, using the term growing window strategy [54].

Additionally, since the model accuracy typically increases as the training data grow, the actual model accuracy for the application period (year $Z_i$) will be more similar to that obtained for $Z_{i-1}$ than to that of earlier years. Hence, $res_{i-1}$ is more representative of the expected model performance for $Z_i$. To account for this issue, the prediction intervals are based on a weighted average of $\mu_j$ and $sd_j$. In particular, the weights for each year decrease geometrically from the most recent to the first available. A schematic representation of the procedure is included in Figure 5.

Finally, to take advantage of all the available data, a model is fitted with the entire period $Z_1, \ldots, Z_{i-1}$, with which the predictions for the following year ($Z_i$) are computed.

Since the test set becomes part of the validation period in subsequent years, the residuals generated during the application of the model in the test period can be added to those computed for previous years, so that there is no need to repeat the whole process: the previous residuals can be employed to obtain the new prediction interval, after updating the corresponding weights.
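The growing-window procedure described above can be sketched as follows. This is an illustration under stated assumptions rather than the implementation used in this work: `fit` is a hypothetical callable that returns a prediction function (a mean predictor stands in for a BRT model), the data are made up, and the geometric `ratio` of the weights is an assumed value, since only the geometric decrease is specified.

```python
from statistics import mean, pstdev


def growing_window_residuals(data_by_year, fit, min_train_years=5):
    """For each year after the minimum training period, fit on all earlier
    years and summarise the residuals obtained when predicting that year."""
    years = sorted(data_by_year)
    stats = []
    for j in range(min_train_years, len(years)):
        train = [rec for y in years[:j] for rec in data_by_year[y]]
        model = fit(train)
        res = [obs - model(x) for x, obs in data_by_year[years[j]]]
        # (year, mean of residuals, population standard deviation of residuals)
        stats.append((years[j], mean(res), pstdev(res)))
    return stats


def weighted_interval_stats(stats, ratio=0.5):
    """Weighted average of the yearly mean and sd; weights decrease
    geometrically from the most recent year to the first one available."""
    n = len(stats)
    weights = [ratio ** (n - 1 - idx) for idx in range(n)]
    total = sum(weights)
    mu = sum(w * s[1] for w, s in zip(weights, stats)) / total
    sd = sum(w * s[2] for w, s in zip(weights, stats)) / total
    return mu, sd


def fit_mean(train):
    """Toy stand-in for model fitting: always predicts the training mean."""
    avg = mean(obs for _, obs in train)
    return lambda x: avg


# Made-up records: two readings per year, as (inputs, observed response).
data_by_year = {
    y: [(None, (y - 1980) - 1.0), (None, (y - 1980) + 1.0)]
    for y in range(1980, 1988)
}

stats = growing_window_residuals(data_by_year, fit_mean)
mu, sd = weighted_interval_stats(stats)
```

When a new year of readings becomes available, its residual summary can be appended to `stats` and the weights recomputed, without refitting the earlier models.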

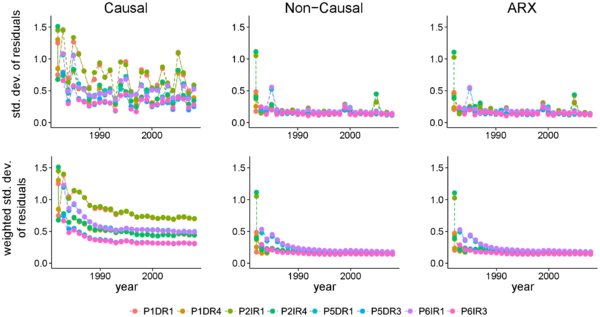

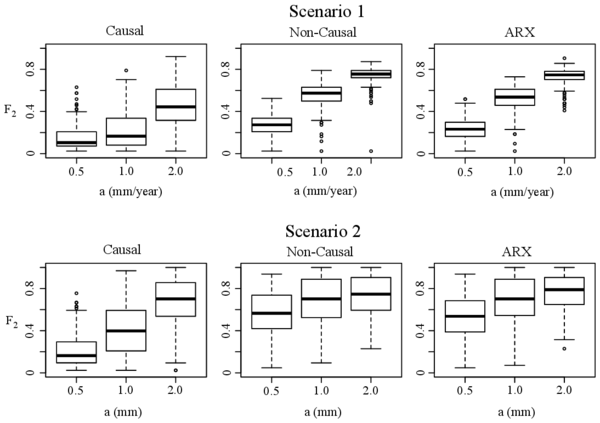

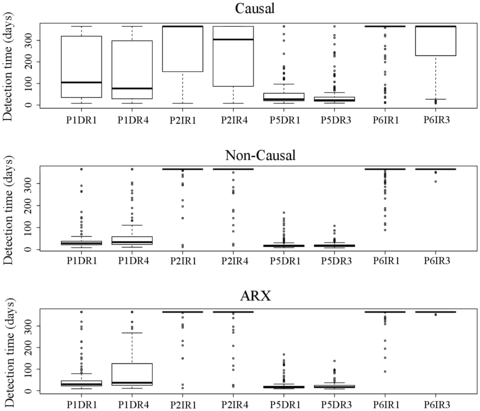

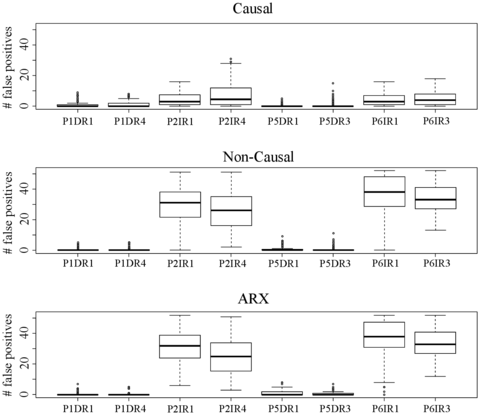

5.2.2 Causal and non-causal models

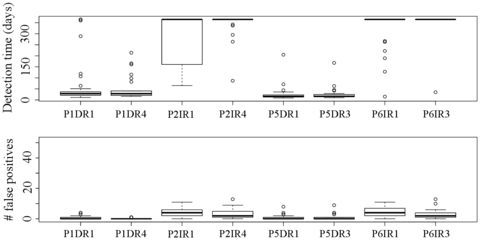

BRT models are robust against the presence of uninformative or highly correlated predictors [59], [74]. Hence, variable selection is much less influential for tree-based methods than for other machine learning tools [57]. This property was employed to build BRT models of three types.