Abstract

Progressive fracture in quasi-brittle materials such as concrete, rocks, soils, is often treated as strain softening in continuum damage mechanics. Such constitutive relations favour spurious strain localization and ill-posedness of boundary value problems, and call for some kind of regularization. In the present work, two different approaches are presented: a partially regularized local damage model that adjusts the softening part of a stress-strain law depending on the size of the element, and a fully regularized non-local damage model that introduces the characteristic length as an additional material parameter controlling the size of the fracture process zone.

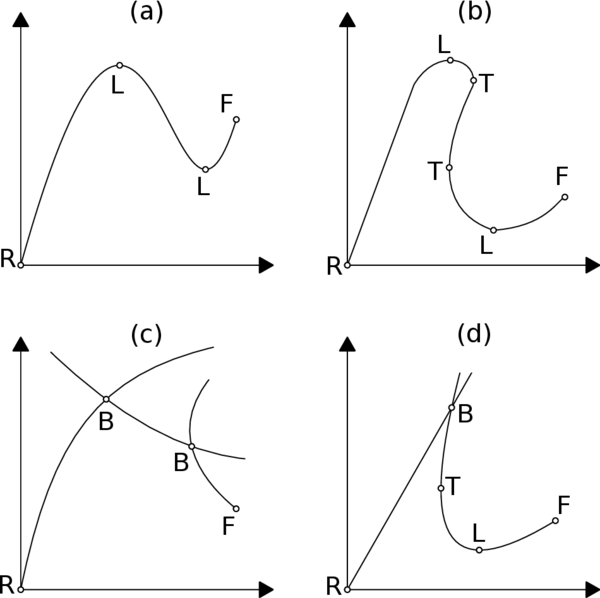

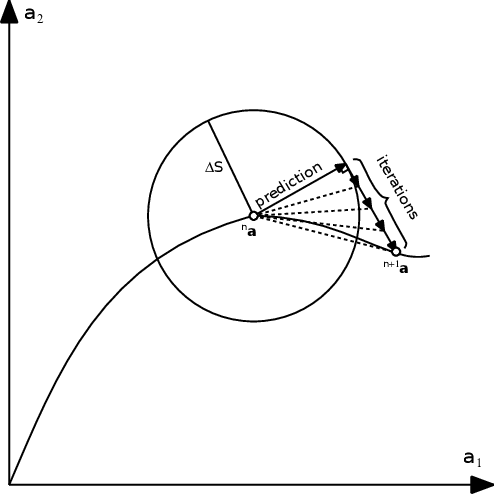

In addition, the strain softening of such models usually results in highly complex structural responses, including the snap-back type, and thus in this work we will be discussing non-linearity associated to damage modelling, and a global arc-length method for tracing the equilibrium path will be exposed.

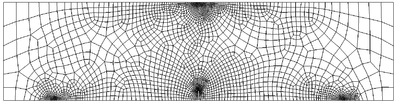

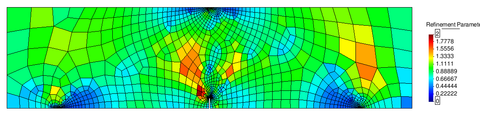

Furthermore, in the context of non-local damage models it is crucial to work with fine spatial discretizations at the damage progress zone, so that elements are smaller than the characteristic length. In this regard, a mesh-adaptive technique has been implemented with the purpose of enhancing the efficiency of the numerical analysis.

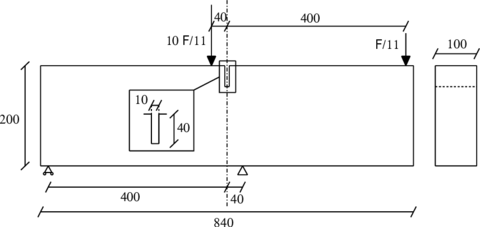

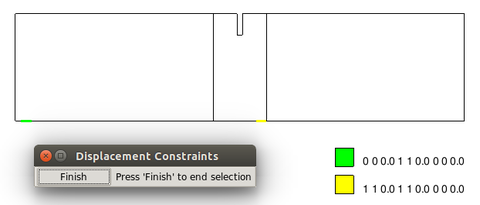

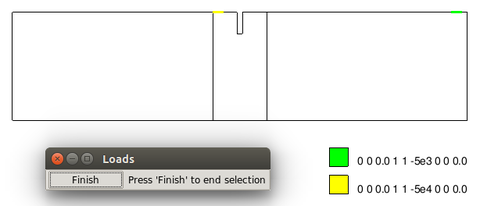

Finally, two classical examples, the three-point bending test, and the single-edge notched beam test, are performed in order to analyse the mesh objectivity of the implemented integral-type non-local damage model, and assess the strengths and limitations of the mesh-adaptive procedure.

Resum

L'evolució de la fractura en materials quasi-fragils com el formigó, les roques, o els sols, sovint és tractada amb estovament de deformacions dins el marc de la mecanica del dany continu. Aquestes relacions constitutives afavoreixen la localització fictícia de deformacions i el mal plantejament del les equacions i condicions de contorn del problema i, per tant, necessiten algun tipus de regularització. En aquest treball es plantegen dues metodologies diferents: un model de dany local parcialment regularitzat que ajusta la part d'estovament de la llei tensió-deformació en funció de la mida de l'element, i un model de dany no local plenament regularitzat que introdueix la longitud característica com un parametre del material addicional, que controla l'extensió de la zona de fractura.

D'altra banda, l'estovament de deformació d'aquests models normalment porta associades respostes estructurals complexes, incloent les de tipus snap-back i, per aixo, en aquest treball també exposarem les conseqüencies de la no-linealitat associada als problemes de dany, i presentarem un metode d'arc global per traçar el camí d'equilibri de la solució.

A més, en el marc dels models de dany no locals, és crucial treballar amb discretitzacions de l'espai prou fines a les zones de progrés del dany, per tal que els elements siguin menors que la longitud característica. En aquest sentit, s'ha implementat un tecnica de refinament de malla adaptatiu amb l'objectiu de millorar l'eficiencia dels analisis numerics.

Finalment, s'han dut a terme dos exemples classics, l'assaig d'una biga flectada per tres punts, i l'assaig d'una biga amb flexió no simetrica per quatre punts, amb l'objectiu de provar l'objectivitat de malla del model de dany no local, i analitzar els punts forts i limitacions del metode de refinament de malla adaptatiu.

1 Introduction and Objectives

Failure of quasi-brittle materials, particularly in geo-materials such as concrete, soils and rocks, is preceded by a gradual development of a non-linear fracture process zone and a localization of strain. Realistic failure analysis of such quasi-brittle structures requires the consideration of progressive damage due to micro-cracking, which is usually modelled by a constitutive law with strain softening. This typically results in highly non-linear structural responses and so efficient non-linear solvers based on arc-length control are needed for the numerical simulations.

If the damage parameter depends only on the strain state at the point under consideration, the boundary value problem becomes ill-posed and, as a result, numerical simulations exhibit a pathological mesh dependence and the energy consumed by the fracture process tends to zero as the mesh is refined.

Simple remedies based on the adjustment of the softening part of the stress-strain law, depending on discretization parameters like the size and type of the finite elements, try to enforce the correct energy dissipation by keeping the product of the width of the process zone and the local dissipation energy constant.

Advanced regularization methods introduce an additional material parameter, the characteristic length, which is related to the size and spacing of inhomogeneities and controls the width of the fracture process zone. These techniques, including non-local averaging methods, need sufficiently fine spatial discretizations in the process zone to resolve narrow bands of highly localized strains. In this regard, mesh-adaptive techniques can increase the efficiency of the analysis, automatizing the process.

In this context, the main objective of this work is to implement a robust isotropic damage model for the numerical modelling of geo-materials failure, that guarantees mesh objectivity of the solution. Furthermore, we will need to implement an incremental-iterative strategy based on the arc-length control, in order to deal with the strong non-linearities derived from the damage progression. Finally, an adaptive-mesh refinement technique would be a very attractive tool as a means to enhance the efficiency of the damage simulations.

This work is organized as follows. First of all, damage mechanics theory is introduced in the second chapter, stressing the strain localization phenomenon of classical local damage models and discussing two alternatives for regularizing the problem: the partially regularized local damage model, and the integral-type non-local damage model.

In the third chapter we will be reviewing all those tools that the damage model requires for its numerical implementation. This includes, an overview of the finite element method, a discussion on the non-linearity associated to damage problems, presenting a global arc-length strategy, and an introduction to mesh-adaptive techniques.

Finally, in the fourth chapter, two classical numerical examples in the damage mechanics field are performed in order to determine whether the non-local damage model is a robust method to model failure of quasi-brittle materials, and to test the implemented mesh-adaptive technique.

2 Damage Mechanics

2.1 Introduction

Many engineering materials subjected to unfavourable mechanical and environmental conditions undergo micro-structural changes that decrease their strength. Since these changes impair the mechanical properties of these materials, the term damage is generally used.

This effect is particularly relevant and difficult to predict in brittle or quasi-brittle materials such as concrete, rocks, mortar or other geo-materials. For instance, concrete is a highly heterogeneous, anisotropic, brittle material with a very complex non-linear mechanical behaviour due to the occurrence of localization of deformation. This localization of deformation can appear as cracks, if cohesive properties are dominant, or as shear zones, if frictional properties prevail. As a result of strain localization, material softening takes place and a significant reduction of the material stiffness during cyclic loading occurs. A good understanding of the mechanism of the formation of localization is of crucial importance since it acts as a precursor of fracture and failure.

In order to describe the behaviour of quasi-brittle materials, different approaches have been developed in the the last decades: continuum models within fracture mechanics [1], continuum damage mechanics [2,3], plasticity [4,5], coupled damage and plasticity [6,7], micro-plane theory [8,9], discrete models using a lattice approach [10,11] and the discrete element method (DEM) [12,13], to name but a few.

In the present work we have focused our efforts on developing an isotropic continuum damage model for geo-materials that works in a small deformation regime.

Particularly, this chapter will be devoted to present some basic notions on continuum damage mechanics, stressing the most relevant aspects of the implemented damage model; and then expose the strain localization phenomenon that arises in these kind of simulations, introducing two possible approaches to avoid the problems of localization.

2.2 Basic Concepts on Continuum Damage Mechanics

Continuum damage mechanics is a branch of continuum mechanics that describes the progressive loss of material integrity due to the propagation and coalescence of micro-cracks, micro-voids, and similar defects. These changes in the micro-structure lead to an irreversible material degradation, characterized by a loss of stiffness that can be observed on the macro-scale.

The term “continuum damage mechanics” was first used by Hult [14], but the concept of damage had already been introduced by Kachanov in 1958 in the context of creep rupture [15], and further developed by Rabotnov [16], Hayhurst [17], and Leckie and Hayhurst [18].

In the pioneering work of Kachanov [15] the concept of effective stress was introduced, and by using continuum damage he solved problems related to creep in metals. Rabotnov [16] gave the problem physical meaning by suggesting that the reduction of the sectional area was measured by means of the damage parameter. Thermodynamic formalism involved in the irreversible process of damage was developed by Lemaitre and Chaboche [19], and other important contributions to our knowledge about damage mechanics include: Mazars [20], Mazars and Pijaudier-Cabot [21], Chaboche [22], Simo and Ju [23], Ju [24,25], Oliver, Oller and Oñate [26], Oliver and Oller [27,28], Cervera and Chiumenti [29,30], etc.

The main objective of standard Continuum Damage Mechanics is to propose a continuum-mechanics based framework that allows to characterize, represent and model at the macroscopic scale the effects of distributed defects and their growth on the material behaviour. In order to fully understand these concepts, first it is important to make clear some basic notions of damage mechanics.

2.2.1 Description of classical uniaxial damage theory

Damage models work with certain internal variables that characterize the density and orientation of micro-defects. To introduce its basic concepts and understand the basis of damage mechanics, it is easier to begin with a uniaxial stress case.

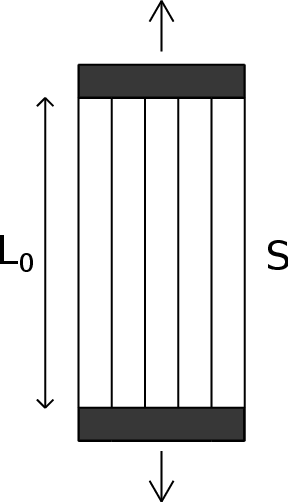

|

| Figure 1: Idealized material for the description of the uniaxial damage theory. |

For the present purpose, the material is idealized as a bundle of fibers parallel to the loading direction (Figure 1). Initially, all the fibers respond elastically, and the stress is carried by the total cross section of all fibers (Figure 2.1). As the applied strain is increased some fibers start breaking (Figure 2.2). Each fiber is assumed to be perfectly brittle, meaning that the stress in the fiber drops down to zero immediately after a critical strain level is reached. However, since the critical strain can differ from one fiber to another, the effective area (the area of unbroken fibers that can still carry stress) will decrease gradually from to . Of course, when the applied force diminishes (Figure 3.2), the affected fibers remain broken and so the damaged area of the specimen is irrecoverable.

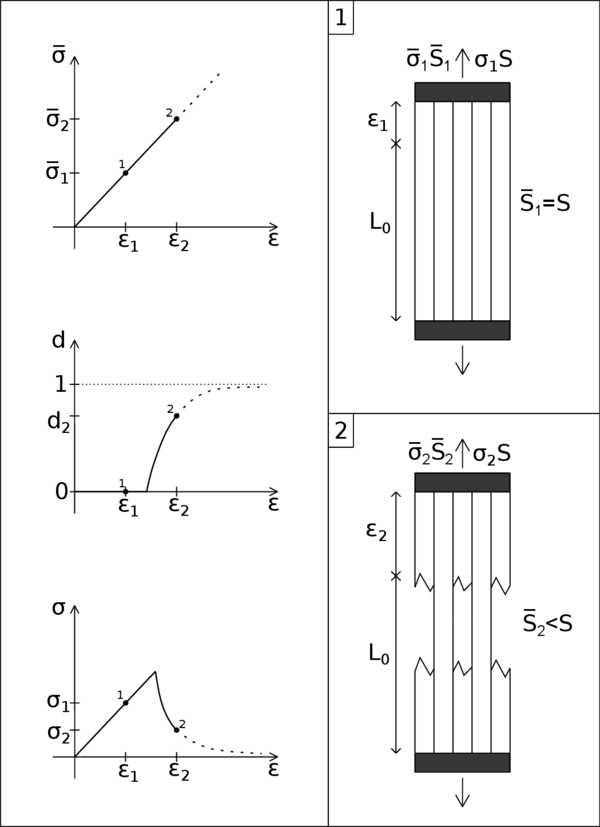

|

| Figure 2: Scheme of a uniaxial damage model through a monotonic loading process. |

|

| Figure 3: Scheme of a uniaxial damage model through a non-monotonic loading process. |

It is important to make a distinction between the nominal stress , defined as the force per unit initial area of the cross section, and the effective stress , defined as the force per unit effective area. The nominal stress enters the Cauchy equations of equilibrium on the macroscopic level, while the effective stress is the "actual" stress acting in the material micro-structure. From the condition of equivalence, , we obtain:

|

|

(1) |

The ratio of the effective area to the total area, , is a scalar characterizing the integrity of the material. Within the classical approach, a very simple measure of the damage amplitude in a given plane is obtained by measuring the area of the intersection of all defects with that plane. Thereby, we can define the damage parameter as:

|

|

(2) |

where is the damaged part of the area. An undamaged material is characterized then by , i.e., by . Due to propagation of micro-defects and their coalescence, the damage variable grows and at the late stages of degradation process it approaches asymptotically the limit value , corresponding to a complete damaged material with effective area reduced to zero.

In the simplest version of the presented scheme, each fiber is supposed to remain linear elastic up to the strain level at which it breaks. Consequently, the 1D effective stress is related to the elastic strain of the material by the uniaxial Hooke's law:

|

|

(3) |

where E is the elastic modulus of the undamaged material. Combining (1), (2) and (3), it is easily seen that the constitutive law for the nominal stress takes the form:

|

|

(4) |

For the uniaxial model formulation, equation (4) must be complemented with the damage evolution law, which can be characterized by the dependence between the damage variable and the applied strain:

|

|

(5) |

Function affects the shape of the stress-strain diagram and can be directly identified from a uniaxial test.

The evolution of the effective stress, damage variable, and nominal stress in a material that remains elastic up to the peak stress is shown in Figure 2. This description is valid only for monotonic loading by an increasing applied strain . When the material is first stretched up to a certain strain level that induces damage and then the strain decreases (Figure 3), the damaged area remains constant and the material responds as an elastic material with a reduced Young's modulus . This means that, during unloading and partial reloading, the damage variable in (4) must be evaluated from the largest previously reached strain and not from the current strain . This means that it is crucial to introduce an internal state variable characterizing the maximum strain level reached in the previous history of the material up to a given time :

|

|

(6) |

The expression implies that , where is the so-called damage threshold, a material parameter that represents the value of strain at which damage starts. In this formula, is not necessarily the physical time (it can be any monotonically increasing parameter controlling the loading process). The damage evolution law (5) is then rewritten in the form:

|

|

(7) |

which remains valid not only during monotonic loading but also during unloading and reloading. The evolution of the effective stress, damage variable, and nominal stress in a non-monotonic test is shown in Figure 3. Note that, upon a complete removal of the applied force, the strain returns to zero (due to elasticity of the unbroken fibers), i.e., the pure damage model does not take into account any permanent strains. Nevertheless, the material state will be different from the initial virgin state because, according to (6) and (7), when the state variable becomes greater than , the damage variable will not be zero again, thus the stiffness and strength mobilized in a new tensile loading process will be smaller than their initial values. Therefore, we can say that the loading history is always reflected by the value of the internal state variable .

To gain further insight, we can rewrite the constitutive law (4) in the form where is the apparent or damaged modulus of elasticity. Furthermore, instead of defining the variable like (6), we can introduce a loading function and postulate the loading-unloading conditions in the Kuhn-Tucker form:

|

|

(8) |

The first condition indicates that can never be smaller than , while the second condition means that cannot decrease. Finally, according to the third condition, can grow only if the current values of and are equal.

At this point, we can already summarize the basic components of the uniaxial damage theory:

- The stress-strain law in the secant format:

|

|

(9) |

with:

|

|

(10) |

- The law governing the evolution of the damage variable (7)

- The damage criterion, comprising:

- The loading function:

|

|

(11) |

specifying the elastic domain , i.e., the set of states for which damage does not grow.

- The loading-unloading conditions (8)

2.2.2 A simple isotropic damage theory

The simplest extension of the uniaxial damage theory to general multiaxial stress states is achieved by the isotropic damage model with a unique scalar variable. Isotropic damage models are based on the simplifying assumption that the stiffness degradation is isotropic, i.e., the stiffness moduli corresponding to different directions decrease proportionally, independently of the direction of loading. Since an isotropic elastic material is characterized by two independent elastic constrains, a general isotropic damage model should deal with two damage variables. The model with a single variable makes use of the additional assumption that the relative reduction of all the stiffness coefficients is the same, in other words, that the Poisson's ratio is not affected by damage. In this regard, the damaged constitutive tensor is expressed as:

|

|

(12) |

where is the elastic constitutive tensor of the intact material, and is the damage variable. In the present context, is the secant constitutive tensor that relates the total strain to the total stress , according to:

|

|

(13) |

One can clearly notice that (12) is a generalization of (10), and (13) is a generalization of (9) and (4). Furthermore, equation (13) can alternatively be written as:

|

|

(14) |

which is the multidimensional generalization of (1), and where is the effective stress tensor defined as:

|

|

(15) |

Similar to the uniaxial case, we introduce a loading function specifying the elastic domain and the states at which damage grows. The loading function now depends on the strain tensor , and on a variable that controls the evolution of the elastic domain. Physically. represents a scalar measure of the largest strain level ever reached in the history of the material. States for which are supposed to be below the current damage threshold. Damage can grow only if the current state reaches the boundary of the elastic domain, i.e., when . Essentially, we can postulate the damage criterion for a multiaxial isotropic damage model with:

- The loading function:

|

|

(16) |

- The loading-unloading conditions (8)

where is the equivalent strain, i.e., a scalar measure of the strain level, and is the largest value of equivalent strain calculated in the previous deformation history of the material up to its current state. In this regard, (6) can now be generalized to:

|

|

(17) |

An important advantage of an isotropic damage model is that the stress evaluation algorithm is usually explicit and there is no need to use an iterative solution for non-linear equations.

Thereby, for a particular strain increment, the corresponding stress is obtained by computing the current value of equivalent strain, updating the maximum previously reached equivalent strain and the damage variable, and reducing the effective stress according to (13). In essence, one must follow the scheme of Table 1:

| Input data for time : , , , |

| 1. Determine new strain vector: |

| 2. Evaluate effective stresses: |

| 3. Compute equivalent strain from the new strain vector: |

| 4. Update with (17): If |

| 5. Update damage variable: |

| 6. Compute stresses: |

2.2.2.1 Equivalent strain forms

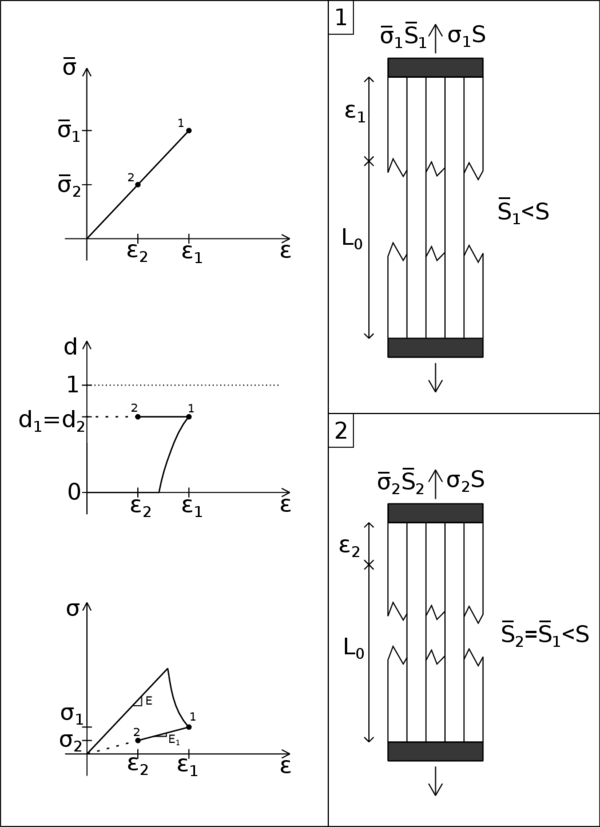

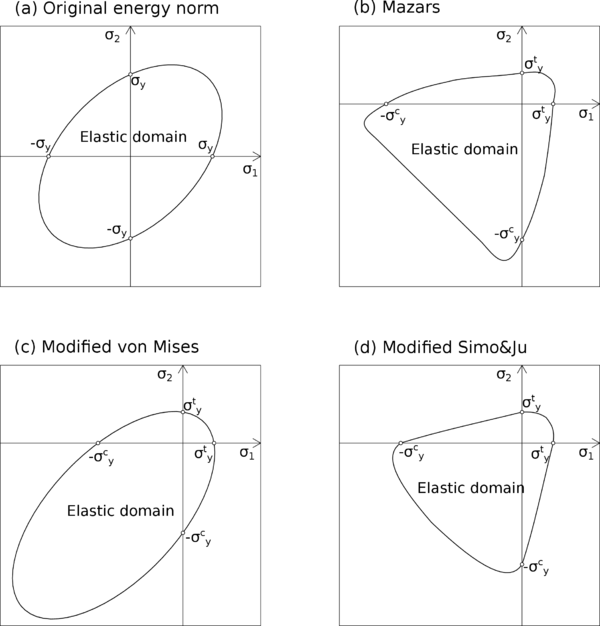

To some extent, the expression defining the equivalent strain plays a role similar to the yield function in plasticity, because it directly affects the shape of the elastic domain (Figure 4).

|

| Figure 4: 3D elastic domain for a generic equivalent strain. |

There are numerous forms of equivalent strain in the literature, but the simplest choice is to define it as the Euclidean norm of the strain tensor:

|

|

(18) |

or as the energy norm:

|

|

(19) |

where are the components of the elastic constitutive tensor and normalization by Young's modulus is introduced to obtain a strain-like quantity.

The two norms of introduced above, lead to symmetric elastic domain in tension and compression. Nevertheless, several materials (rocks, concrete, ceramics, etc.) often show a non symmetric damage surface, i.e., the yield value in compression can be several times the value in tension. In order to overcome this limitation, it is necessary to adjust the definition of the equivalent strain.

Micro-cracks in concrete grow mainly if the material is stretched, and so it is natural to take into account only normal strains that are positive (tensile strains) and neglect those that are negative (compressive strains). This leads to the so-called Mazars definition of equivalent strains [31]:

|

|

(20) |

or to its energetic counterpart:

|

|

(21) |

where McAuley brackets denote the "positive part" operator. For scalars , i.e.,

|

|

(22) |

For symmetric tensors, such as the strain tensor , the positive part is a tensor with the same principal directions as the original one, but replacing its principal values by their positive parts . The subscript ranges from 1 to 3 (the number of spatial dimensions). If we consider the following diagonalized strain tensor:

|

|

(23) |

the positive part of is expressed as:

|

|

(24) |

Thereby, knowing that , definition (20) can be rewritten as:

|

|

(25) |

An alternative formula, called the modified von Mises definition [32], reads:

|

|

(26) |

where is a model parameter that sets the ratio between the uniaxial compressive strength and the uniaxial tensile strength, is the Poisson's ratio of the material, is the first invariant of the strain tensor, and is the second invariant of the deviatoric strain tensor. Given a generic symmetric strain tensor :

|

|

(27) |

The first invariant is the trace of the strain tensor:

|

|

(28) |

One can always decompose the strain tensor into its volumetric and deviatoric parts :

|

|

(29) |

|

|

(30) |

From the deviatoric strain tensor we can calculate and :

|

|

(31) |

|

|

(32) |

Another possibility is to work with an evolution of the model proposed by Simo and Ju [23], using the energy norm of the strain tensor and modifying it to take into account the different degradation in tension and compression of concrete-like materials. In this case, the equivalent strain takes the form:

|

|

(33) |

where the parameter is a weighting factor depending on the effective stresses , given by:

|

|

(34) |

As mentioned before, the parameter is defined by means of the ratio between the compression elastic limit and the tension elastic limit , i.e.:

|

|

(35) |

In the case of concrete .

The equivalent strains presented in this subsection lead to different damage surfaces but all three share a common trait, the elastic limit in tension is much lower than the elastic limit in compression (Figure 5.b, 5.c, 5.d). In contrast, the equivalent strain obtained from the original energy norm definition (19) leads to a symmetric damage surface in which there is no difference between the elastic limit in tension and compression (Figure 5.a).

|

| Figure 5: Damage surfaces for different equivalent strains in the 2D principal stress space. |

2.2.3 Damage evolution laws

As we stated for the uniaxial case, one of the basic components of a damage model is the law governing the evolution of the damage variable (7). There are various damage governing laws that can be effectively used to model damage growth in geo-materials, and in the following lines we will present some of the most widely used options.

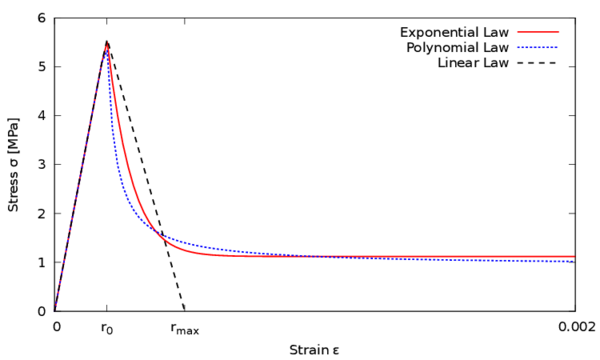

Two typical choices to describe the evolution of damage above the damage threshold are the exponential law [20]:

|

|

(36) |

and the polynomial law [33]:

|

|

(37) |

In (36) and (37) parameter is associated to residual strength and parameter controls the slope of the softening branch at the peak of the stress-strain curve.

Another option, especially suited for simplified analyses, is the linear softening law. Limiting the state variable between the damage threshold and a maximum admissible value , damage evolves according to:

|

|

(38) |

which leads to a linear softening branch in the stress-strain curve.

The effect of using one form of damage evolution law or another is clearly seen in a uniaxial case. Figure 6 shows the aspect of the stress-strain curve in a unidimensional case using the three softening laws presented above. For all three cases we have used a damage threshold of and a Young's modulus of MPa. The exponential law is represented with and , the polynomial law with and , and for the linear law we have defined . One can see that when the strain exceeds the damage threshold after the elastic branch , a softening post-peak branch starts as a result of the damage growing.

|

| Figure 6: Unidimensional stress-strain curves for different damage evolution laws. |

An alternative exponential softening model was proposed in [27]:

|

|

(39) |

The parameter is obtained from the following expression:

|

|

(40) |

where is the specific fracture energy per unit area, is the characteristic length for the fractured domain, usually taken as the characteristic length of the finite elements, and is the damage threshold which, for the Simo and Ju model, can be computed from:

|

|

(41) |

being the tensile strength of the material, and the Young's modulus.

Another popular damage evolution law specifically designed for concrete was proposed by Mazars [31,20]. He introduced two damage variables, and , that are computed from the same equivalent strain (25) using two different damage functions, and . Function is identified from the uniaxial compressive test while corresponds to the tensile test. The evolution law governing damage results from the combination of the two parts:

|

|

(42) |

Functions characterizing the evolution of damage in compression and in tension were proposed in the exponential form:

|

|

(43) |

|

|

(44) |

where , , , are material parameters related to the shape of uniaxial stress-strain diagrams.

The coefficient in (42) ranges from 0 to 1 and takes into account the character of the stress state. It is evaluated from:

|

|

(45) |

where are the principal strains due to positive effective stresses, i.e., the principal values of :

|

|

(46) |

being the identity tensor.

The parameter in (42) was equal to 1 in the original version of the model [31]. When it is higher than 1, allows to slow down the evolution of damage under shear loading (when principal stresses have different sign).

The definition of tells us that if all principal stresses are negative then and , and if all principal stresses are positive we have and . These are the "purely compressive" and "purely tensile" stress states. For intermediate stress states the value of is between and , depending on the relative magnitudes of tensile and compressive stresses.

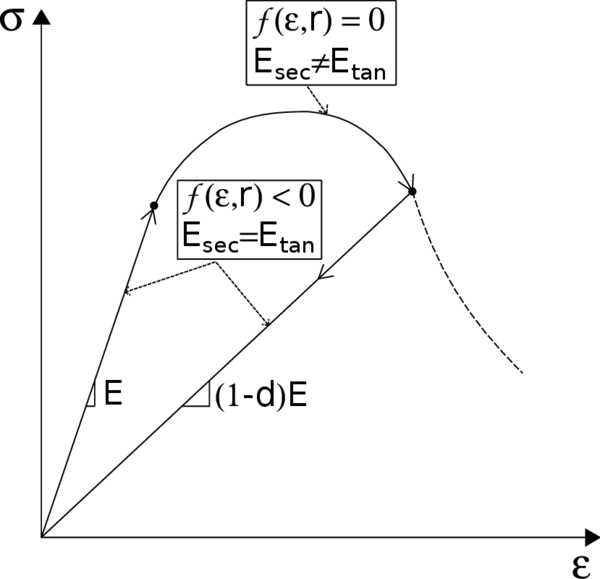

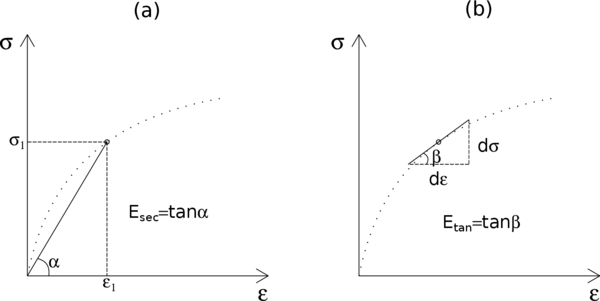

2.2.4 Elastic-damage tangent constitutive tensor

In this section we will present the tangent constitutive tensor, which gives us an important advantage, from a computational point of view, when dealing with incremental iterative solution procedures. Basically, with the tangent constitutive tensor one can achieve a quadratic convergence rate, whereas with the secant constitutive tensor, the solution is limited to a linear convergence.

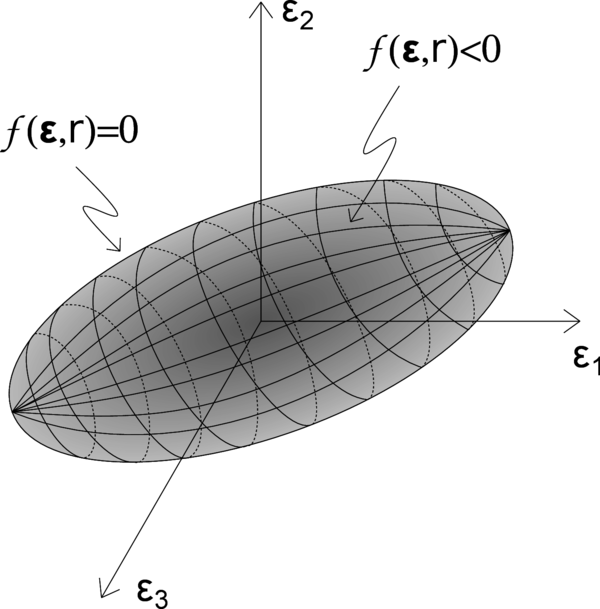

The damaged or secant constitutive tensor introduced in (12) and (13) links the total stress to total strain and plays the role of the tangent stiffness only for processes with elastic loading or unloading with constant damage (). To obtain the tangent stiffness for a loading process with growing damage ( and ), it is necessary to find the link between stress and strain increments. This concepts are clearly seen in a unidimensional case (see Figure 7). In this regard, knowing the difference between secant and tangent moduli (see Figure 8.a and 8.b) is useful to understand the generalization to secant and tangent constitutive tensors.

|

| Figure 7: Uniaxial stress-strain diagram of a loading-unloading process. |

|

| Figure 8: Secant and tangent moduli. |

In essence, we define the elastic-damage tangent constitutive tensor as the one that satisfies the following relation:

|

|

(47) |

Now, by considering the equation (13), we can obtain the rate of change of stress as follows:

|

|

(48) |

from which we should distinguish two possible situations:

- A process with elastic loading or unloading ()

- A loading process with growing damage ()

With equation (48) becomes , from which we obtain that the elastic-damage tangent constitutive tensor coincides with the secant constitutive tensor:

|

|

(49) |

In this case, in order to obtain an explicit expression of the tangent constitutive tensor, the damage rate should be expressed in terms of the strain rate . This can be achieved by imposing the consistency condition in equation (16) and combining it with the rate of equation (7):

|

|

(50) |

For convenience, we introduce symbols for the derivative of the damage function , and for the second order tensor obtained from the partial derivative of the equivalent strain with respect to the strain tensor . Thereby, substituting into the rate of change of stress (48), we get:

|

|

(51) |

and, consequently, the elastic-damage constitutive tensor results:

|

|

(52) |

where the form of and depend on the damage evolution law, and on the equivalent strain expression chosen for the damage model.

For instance, for a model with an equivalent strain based on the energy norm of equation (19), tensor is given by:

|

|

(53) |

and the resulting tangent constitutive tensor for this particular case is:

|

|

(54) |

which preserves symmetry (). It must be noticed though that, for other definitions of equivalent strain, this kind of symmetry is lost.

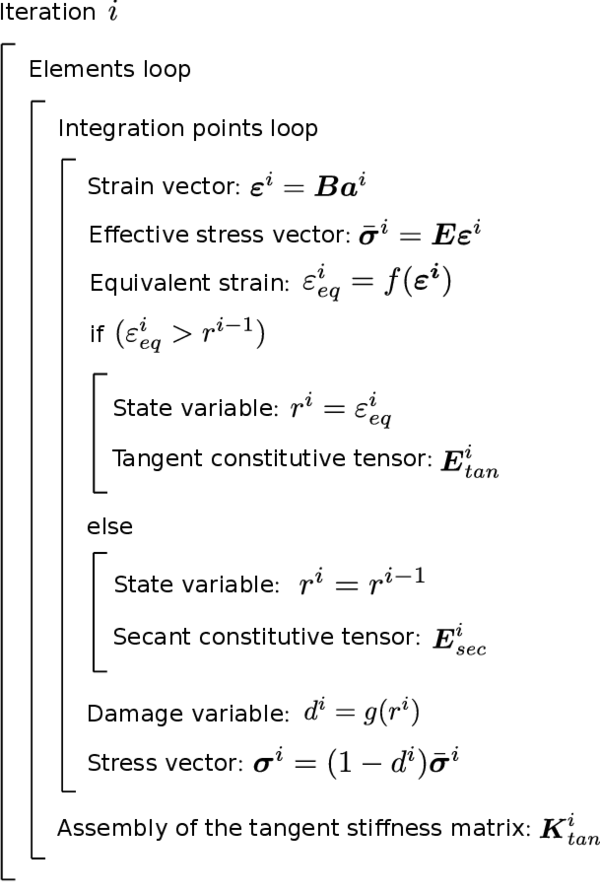

The typical scheme for the numerical implementation of the elastic-damage tangent constitutive tensor is shown in Table (2).

| Input data for time : , , , , | |

| If () | Loading with growing damage (): |

| 1. Compute damage function derivative: | |

| 2. Calculate equivalent strain partial derivative: | |

| 3. Compute tangent constitutive tensor with (52): | |

| Else | Elastic loading or unloading (): |

2.3 Local and Non-local Damage Models

In previous sections we have presented the basic ideas of isotropic damage models with a unique scalar variable . Although these models are quite simple, they are often used to model the failure of concrete and other quasi-brittle materials that show important strain localization. If the damage parameter depends only on the strain state at the point under consideration, the numerical simulations exhibit a pathological mesh dependence, and physically unrealistic results are obtained.

This is the typical behaviour of the so-called local damage models, which are not able to properly describe both the thickness of localization and distance between them. They suffer from mesh sensitivity (for size and alignment) and produce unreliable results. The strains concentrate in one element wide zones and the computed force-displacement curves are mesh-dependent. The reason is that differential equations of motion change their type (from elliptic to hyperbolic in static problems) and the rate boundary value problem becomes ill-posed.

Thus, classical constitutive models require an extension in the form of a characteristic length to properly model the thickness of localized zones. Such extension can be done with micro-polar [34,35], strain gradient [36,37,38], viscous [39,40,41] and non-local terms [42,43,44,45]. In this work we have developed the latter approach.

Similarly to previous sections, in order to make it more understandable, we will present the inconveniences arisen due to strain localization with a uniaxial example. After that, the basic concepts of the implemented non-local damage model will be stated.

2.3.1 Strain localization phenomenon

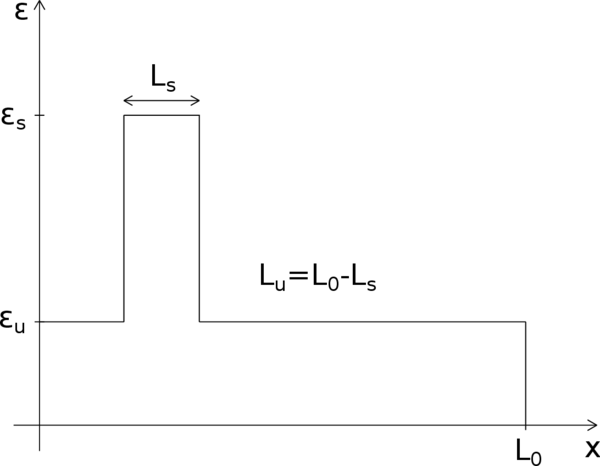

The idea of modelling damaged concrete and other quasi-brittle materials as strain-softening continua, was not immediately accepted by all the scientific community. Actually, most of the early analyses were not truly objective and, upon mesh refinement, their results would not converge to a robust solution. Let us explain the nature of the problem by means of a unidimensional example.

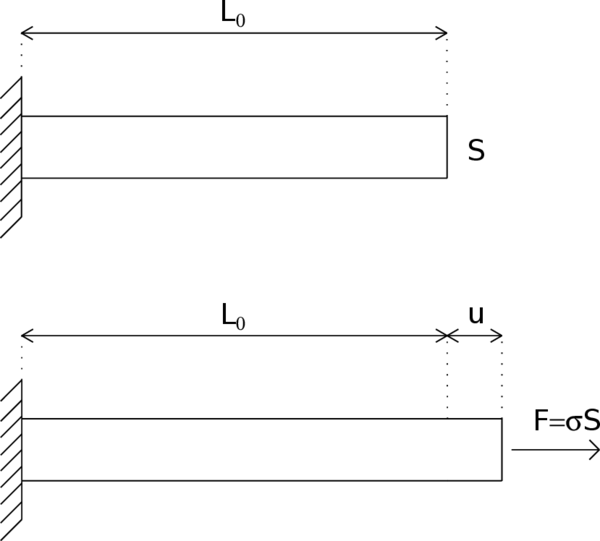

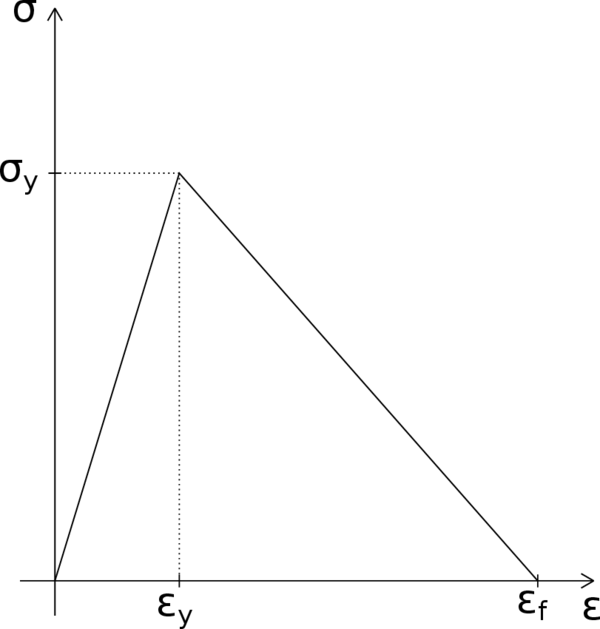

Consider a straight bar with a constant cross section and a total length under uniaxial tension (Figure 9). The material is assumed to obey a simple stress-strain law with linear elasticity up to the peak stress , followed by linear softening (Figure 10). If the bar is loaded in tension by an applied displacement at its right extreme, the response remains linear elastic up to , instant at which the force transmitted by the bar (reaction at the support) attains its maximum value . After that, the resistance of the bar starts decreasing until the strain reaches and the transmitted stress completely disappears.

|

| Figure 9: Bar under uniaxial tension. |

|

| Figure 10: Stress-strain diagram with linear softening. |

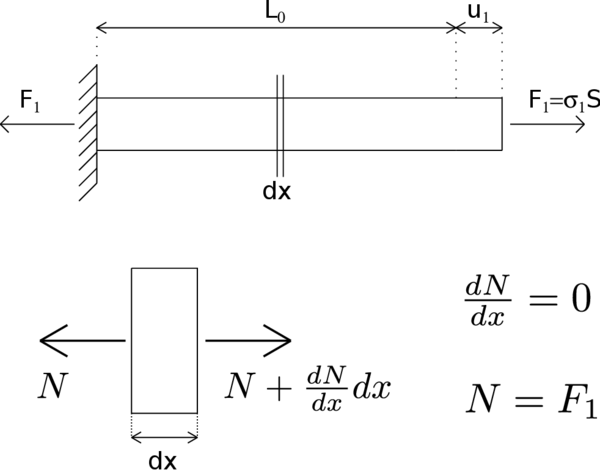

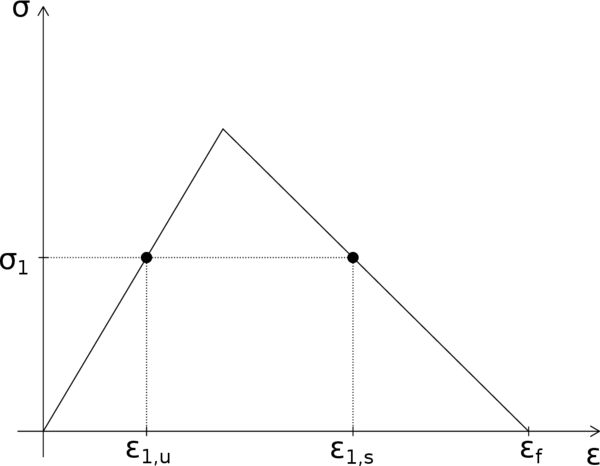

Equilibrium equations imply that axial forces are constant along the bar and so the stress profile must remain also uniform (Figure 11). However, at a given stress level between zero and , there are actually two values of strain, and , that satisfy the constitutive equation (Figure 12). This is quite straightforward if one considers that each cross section can be either damaged, or undamaged. Indeed, an undamaged section will be on the elastic unloading branch with , whereas a damaged one will fall in the softening branch with .

|

| Figure 11: Axial force acting along the bar. |

|

| Figure 12: Possible strain values corresponding to the same stress level. |

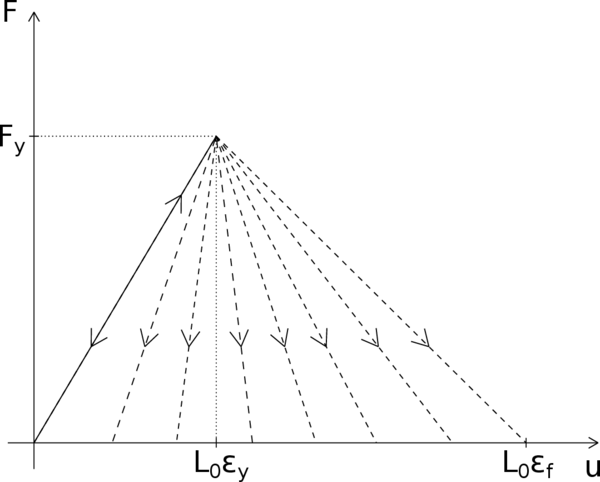

Thereby, the strain profile along the bar does not have to be necessarily uniform. In fact, any piecewise constant strain distribution that jumps between the two possible strain values represents a valid solution (Figure 13). In Figure 13 we have denoted by the cumulative length of the softening regions and by the cumulative length of the unloading regions. When stress is completely relaxed, the strain in the unloading region is and the strain in the softening region is . Thus the total elongation of the bar in this case is . At this point, although is perfectly known from the linear softening law, is totally undetermined. This means that the problem has infinite possible solutions with its corresponding post-peak branches of the load-displacement diagram (Figure 14). This fan of post-peak branches is bounded on one side by the solution with uniform softening () and on the other side by the solution with uniform unloading (). The first limit corresponds to a totally damaged bar while the latter represents the case in which the bar is unloaded before any damage takes place. All the other solutions describe processes in which a part of the bar is damaged. However, it is not that easy to know which of all these solutions is the one that reflects better the actual failure process.

|

| Figure 13: Piecewise constant strain profile along the bar. |

|

| Figure 14: Fan of possible post-peak branches of the load-displacement diagram. |

The ambiguity is removed if imperfections are taken into account. The material properties and sectional dimensions of a real bar are not perfectly uniform. Thereby, supposing that there is a small region where the strength is lower than in the remaining portion of the bar, when the applied stress reaches the peak of that weaker region, softening starts and the stress decreases. Consequently, the material outside the damaged region must unload elastically because its strength has not been exhausted. This leads to the conclusion that the size of the softening region is related to the size of the region with minimum strength. The problem is that such a region can be arbitrarily small and so the corresponding softening branch is arbitrarily close to the elastic unload branch. Therefore, the standard strain-softening continuum formulation leads to a solution with several pathological features:

- The softening region is infinitely small.

- The load-displacement diagram always shows snap-back, regardless of the size of the structure and the ductility of the material.

- The total amount of energy dissipated during the failure process tends to zero.

From the mathematical point of view, these problematic features are related to the loss of ellipticity of the governing differential equation. The boundary value problem becomes ill-posed as a result, i.e., it no longer has a unique solution with continuous dependence on the given data.

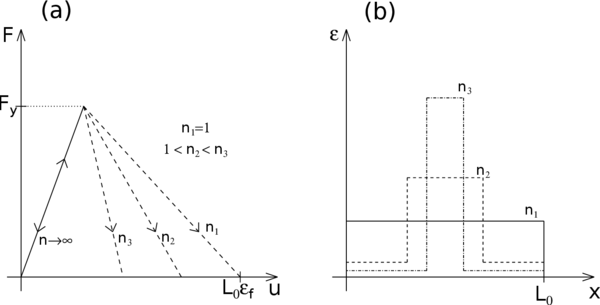

From the numerical point of view, these inconveniences are manifested by a pathological sensitivity of the results to the size of the finite elements. For instance, let us suppose that the bar is discretized uniformly by two-node elements with linear displacement interpolation and that the weakest region is located at the middle of the bar. The numerical algorithm will capture a very localized solution with a softening region extending over one only element. In consequence, the softening length will decrease as the number of elements increases () and thus the softening post-peak branch will depend completely on the number of elements of the mesh. Indeed, as it is shown in Figure 15, for all the bar is damaged and the softening length is the total length of the bar , whereas for the softening region is more localized with strains becoming especially important at the damaged element. In the limit case of the softening branch would coincide with the elastic branch.

|

| Figure 15: Mesh dependence of the numerical results. (a) Load-displacement diagrams for different number of elements. (b) Strain profiles for a prescribed imposed displacement. |

In this section we have seen a problem that commonly arises in the simulation of damage processes involving quasi-brittle materials: the strain localization phenomenon. Although only a uniaxial case has been discussed, this problem is also present in multidimensional problems with similar effects on the numerical results.

The simple one-dimensional example presented in this section illustrates the essence of the problem with localization of inelastic strain into a zone of an arbitrarily small width. In uniaxial cases, localization occurs when the peak of the stress-strain diagram is reached, independently of the specific constitutive model used. In multiple dimensions, the analysis of the localization process is more complicated and the derivation of a criteria for the start of localization represents a challenging problem.

Mesh refinement in multiple dimensions leads to a reduction of the total dissipated energy (area under the load-displacement curve) with a lower peak load and a more brittle response. Apart from this, upon further refinement, one can also face convergence difficulties due to the abrupt change of strain distribution, from a smoothly distributed to a highly localized one. These effects will be shown more clearly with the simulation of a bi-dimensional case later on (see Chapter 4).

2.3.2 Regularization of the problem

In the present work, two different damage models were implemented in the research of a robust code that avoided pathological sensitivity of the finite element results to the mesh size.

2.3.2.1 Partially regularized local damage model

As a first attempt, we tried a simple partially regularized local damage model, based on the crack band models [46].

This model is, essentially, an isotropic damage model following the classical local damage theory, in which an energy based adjustment of the stress-strain diagram, depending on the size of the element, is introduced in the definition of the damage parameter.

In this regard, the model makes use of the damage evolution law in (39), which depends on the characteristic length of the fractured domain included in (40). This characteristic length of the fractured domain is actually what allows to partially regularize the model.

In essence, for elements larger than a prescribed limit length , the fracture length takes the value of the characteristic length of the element , whereas for elements smaller than the limit length, the fracture length is taken as such limit length.

|

|

(55) |

Therefore, before calculating the damage parameter, one will have to compute the characteristic length of the element and then compare it with the limit length, a material parameter usually related to the maximum aggregate size of the composite material. The characteristic length of the element, on the other hand, can be computed from the dimensions of the element. In two-dimensional analysis, for instance, the characteristic length of the element can be defined as the diameter of the circle that contains the area of the element.

|

|

(56) |

where is the area of the considered element.

This approach is endowed with some, but not all, of the properties of fully regularized damage models. It can ensure a correct energy dissipation in a localized damage band, but the width of the numerically resolved fracture process zone depends on the element size and tends to zero as the mesh is refined. This is why this approach cannot be considered as a true localization limiter. It provides only a partial regularization of the problem in the sense that global response characteristics do not exhibit spurious mesh sensitivity, but the mesh-induced directional bias is still present.

Scaling of the stress-strain diagram is straightforward only for models that explicitly control the evolution of inelastic strain, e.g., for softening plasticity [47] or smeared crack models [27]. In those cases, the desired scaling effect is achieved by a modification of the hardening modulus (derivative of stress with respect to inelastic strain). Nevertheless, in continuum damage mechanics, non-linearity and softening are controlled by the damage evolution law, and the reduction factor multiplies the total strain. For this reason, it is not easy to scale only the post-localization part of strain while keeping the unloading part unaffected.

Furthermore, in some cases, diffuse softening damage patterns in certain parts of the structure can coexist with localized cracks in other parts, and they may even change during the loading process. In such cases it is virtually impossible to define a reasonable rule for the adjustment of the stress-strain diagram according to the element size.

2.3.2.2 Fully regularized non-local damage model

The introduction of a characteristic length into the constitutive model, and the formulation of a non-local strain-softening model, have been shown to prevent the spurious localization of strain-softening damage, to regularize the boundary value problem, and to ensure numerical convergence to physically meaningful solutions.

In this regard, fully regularized description of localized inelastic deformation is achieved by a proper generalization of the underlying continuum theory, which can be done through two different approaches: generalization of the kinematic relations, i.e., continua with micro-structure (Cosserat-type continua or strain gradient theories), and continua with non-local strain (non-local elasticity); and generalization of constitutive equations, i.e., material models with gradients of internal variables, and materials models with weighted spatial averages of internal variables.

In this work we have worked with the second kind of generalization, because kinematic and equilibrium equations remain standard, and the notions of stress and strain keep their usual meaning. Moreover, in the generalization of constitutive equations through non-local models, we have focused on the integral-type methods.

Integral-type non-local models abandon the classical assumption of locality and admit that stress a certain point depends, not only on the state variables at that point, but also on the distribution of the state variables over the whole body, or over a finite neighbourhood of the point under consideration.

The first models of this type, proposed in the 1960s, aimed at improving the description of elastic wave dispersions in crystals. Non-local elasticity was further developed by Eringen, who later extended it to non-local elasto-plasticity [48,49]. Subsequently, it was found that certain non-local formulations could act as efficient localization limiters with a regularizing effect on problems with strain localization [43].

In a general manner, the non-local integral approach consists in replacing a certain variable by its non-local counterpart, obtained by weighted averaging over a spatial neighbourhood of each point under consideration.

Let be some local field in a domain , the corresponding non-local field is defined as:

|

|

(57) |

where is the chosen non-local weighting function.

In an infinite, isotropic and homogeneous medium, the weighting function depends only on the distance between the source point , and the receiver point . Thereby, we usually write , where is usually chosen as a non-negative function monotonically decreasing for .

One possible is the Gauss distribution function:

|

|

(58) |

where the characteristic length is a material parameter reflecting the internal length of the non-local continuum.

If a bounded support is preferred, one can also truncate the previous function as follows:

|

|

(59) |

where the interaction radius is a parameter related to the characteristic length . In the present work, we have considered .

Another typical choice for the weighting function is the following truncated quartic polynomial function:

|

|

(60) |

In essence, the interaction radius corresponds to the smallest distance between points and at which the interaction weight vanishes (for weighting functions with a bounded support) or becomes negligible (for weighting functions with an unbounded support). It represents a very important parameter because it controls the size of the softening region.

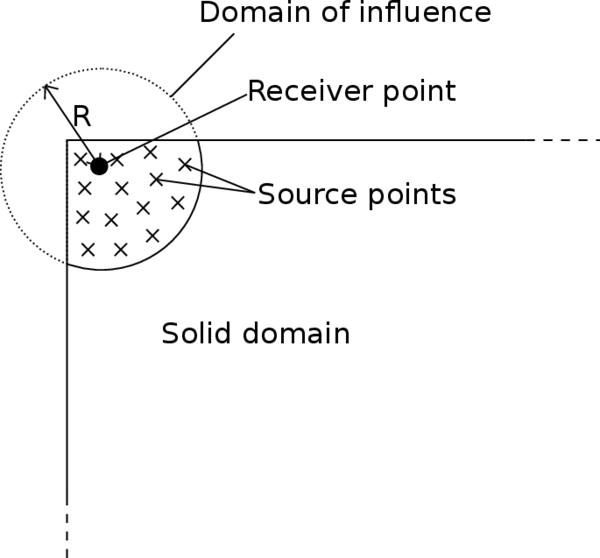

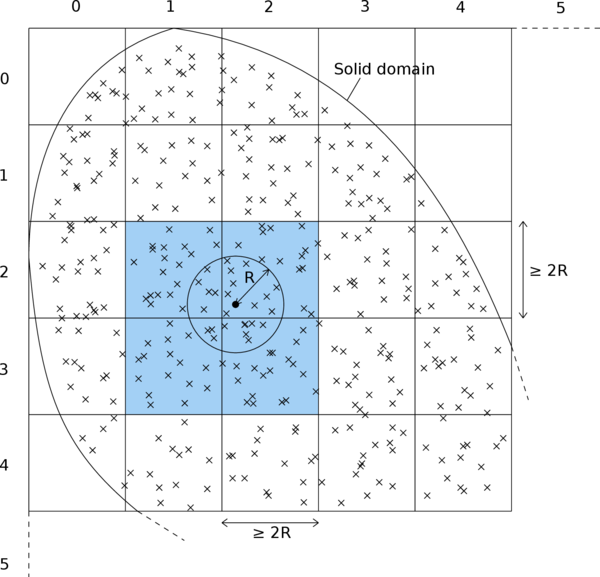

The interval, circle, or sphere of radius , centered at , is called the domain of influence of point . In the vicinity of the boundary of a finite body, it is simply assumed that the averaging is performed only on the part of the domain of influence that lies within the solid (Figure 16).

|

| Figure 16: Averaging zone when the domain of influence protrudes through the boundary of a solid. |

Thereby, if a weighting function with bounded support is chosen, the non-local average at (57) will be calculated as a weighted sum over the values at all the finite element integration points lying within the non-local interaction radius .

In the application to softening materials, it is often required that the non-local operator do not alter a uniform field (consistency of order 0), which means that the weighting function must satisfy the normalizing condition:

|

|

(61) |

In order to satisfy it, the weighting function is usually rescaled as:

|

|

(62) |

A suitable non-local damage formulation that restores well-posedness of the boundary value problem is obtained if damage is computed from the non-local equivalent strain [50].

In the loading function (16), the local equivalent strain is replaced by its weighted spatial average:

|

|

(63) |

The internal state variable is then the largest previously reached value of the non-local equivalent strain:

|

|

(64) |

It is important to note that the damage variable is evaluated from the non-local equivalent strain , whereas the strains used in (13) to compute the stresses are considered as local. This way, during the elastic range, when the damage variable remains equal to zero, the stress-strain relation is completely local. The process for the evaluation of stresses with this non-local damage model is schematically shown in Table 3.

| Input data for time : , , , |

| 1. Determine new strain vector: |

| 2. Evaluate effective stresses: |

| 3. Compute equivalent strain from the new strain vector: |

| 4. Calculate non-local equivalent strain from (63): |

| 5. Update with (64): If |

| 6. Update damage variable: |

| 7. Compute stresses: |

3 Numerical Implementation

3.1 Introduction

So far, we have presented the theoretical basis of the classical continuum damage mechanics, including an important limitation regarding mesh dependency of the solution, and we have seen one regularization technique to avoid the cited inconvenience.

At this point, before going into example tests of continuum damage models, we must actually understand how the explained theory can be applied in a practical way that allows the performance of such experiments. Essentially, this chapter will present a set of methods and tools, directly or indirectly related to the implementation of a damage model, that are necessary to provide a proper framework for the simulation of damage mechanics problems.

In this regard, I would like to start this section by shortly presenting KRATOS, the framework in which the damage model has been implemented.

|

| Figure 17: KRATOS' logo. |

KRATOS is an Open-Source framework for the implementation of numerical methods for the solution of engineering problems. All of its code is written in C++, and Python scripting language is used to define the main procedure of the problems, which significantly improves the flexibility of the framework in time of use. It features a "core and applications" approach, where standard tools (databases, linear algebra, search structures, etc.) come as a part of the core and are available as building blocks in the development of new applications which focus on the solution of particular problems of interest. KRATOS is designed to allow collaborative development by large teams of researchers focusing on modularity as well as on performance. Its ultimate goal is to simplify the development of new multi-disciplinary finite element programs.

Thereby, the implemented damage model must be understood as a "Damage Mechanics Application" inside that global framework, with all the consequences it implies. Perhaps the most important one is that the starting point of the "Damage Mechanics Application" was the already implemented linear-elastic Finite Element code from the "Solid Mechanics Application" of KRATOS. This means that, from the beginning of this work, I have been able to primarily focus on the implementation of the new damage model, knowing that the FEM core was properly working. The only drawback of this is that one needs to continuously adapt the new implementations to the previously set structure. That aside, it is actually advantageous to work inside KRATOS, being able to use the existing functions if convenient.

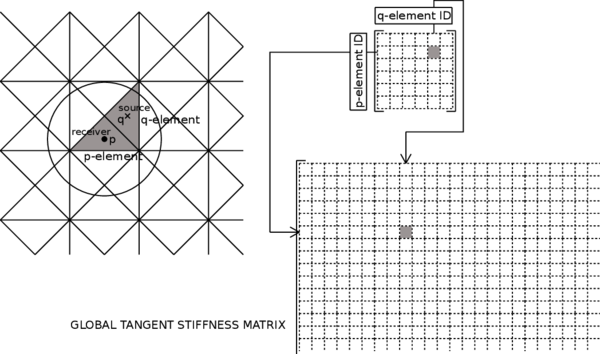

Knowing the framework of the monograph, in the following sections we are going to briefly review some basic notions on the classical Finite Element Method, focusing on the bi-dimensional elasticity theory (the one behind the examples performed). Then, we will discuss the non-linearity associated to the damage problem and its influence in the strategy chosen to solve it. Furthermore, we will review the basic aspects in the implementation of the damage model, pointing out the most relevant differences between a local and a non-local approach. Finally, we will introduce an adaptive mesh refinement technique that, although still under development, has already shown some interesting features that are worth mentioning.

3.2 Overview of the Finite Element Method

Most engineering structures are continuum, and so their behaviour cannot be properly represented in terms of a few number of discrete variables. A rigorous analysis of such structures requires the integration of the differential equations that govern the equilibrium of a generic differential element belonging to them.

The Finite Element Method (FEM) is a numerical technique for finding approximate solutions to boundary value problems for partial differential equations. It uses subdivisions of a whole problem domain, and variational methods to solve the problem by minimizing an associated error function. In the end, FEM connects many simple element equations over many small domains, to approximate a more complex equation over a larger domain.

The integration of partial differential equations helps the engineer to study uni, bi and three-dimensional structural problems, including its time evolution. In deed, although continuum structures are always three-dimensional, if the proper simplification hypothesis are fitted, one can accurately describe the behaviour of a structure by means of uni or bi-dimensional mathematical models, like in earth dams, tunnels, plates, etc.

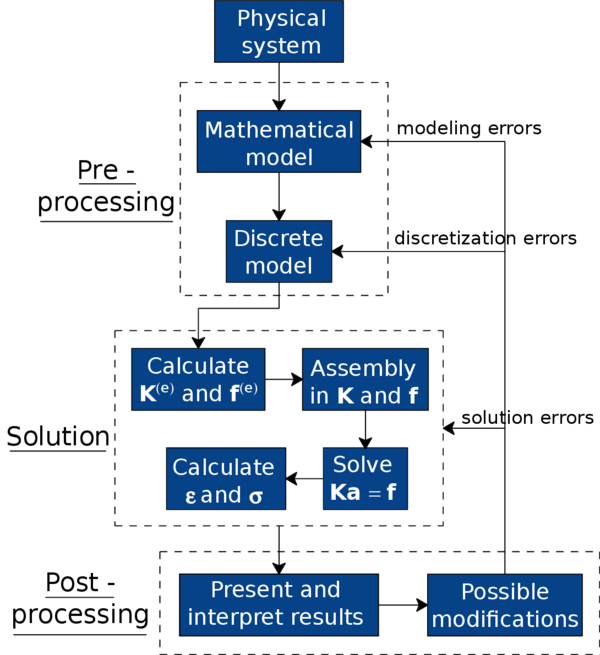

At this point, in order to properly understand the concepts explained later on, it is important to state the generic stages for the analysis of a structure through the Finite Element Method. They can be summarized as follows:

- First of all, knowing the physics of a structure, the boundary conditions and applied loads, one must select a mathematical model that appropriately describes its behaviour. Besides, one needs to define the mechanical properties of the materials and the regime of deformations of the structure (small or large displacements, static, quasi-static or dynamic analysis, etc.). In this work, we are going to work with the elastic bi-dimensional FEM theory in a small deformation regime and quasi-static analysis. Furthermore, for the formulation of the equilibrium equations, we are going to use the Principle of Virtual Work (PVW).

- Once the mathematical model is chosen, the structure must be discretized in non-intersecting portions called finite elements. These elements are interconnected through nodes, the entities that store the main variables of the problem. In order to obtain the value of these variables at any point of the geometry, one just needs to interpolate the values stored at the nodes. The set of finite elements that conform all the model is the so called mesh.

- From the expression of the PVW one can obtain the stiffness matrix and the force vector for each element.

- Afterwards, the global stiffness matrix and the nodal force vector are obtained through the assembly of the previous elemental stiffness matrix and force vector.

- The resulting system of equations must be solved in order to obtain the nodal displacement vector , i.e., the unknown variable of the FEM problem.

- From the obtained nodal displacement vector , it is quite straightforward to calculate the strains and stresses at each element, as well as the reactions at the nodes with prescribed displacements.

- Finally, one must present and interpret the numerical results, assessing whether the solution is accurately enough captured, and introducing modifications in the previous stages if necessary. It is important to notice that the Finite Element Method is an approximate method, and so results are expected to have some solution errors, discretization errors and/or modeling errors.

The previous stages are schematically represented in Figure 18.

|

| Figure 18: Global flowchart of the analysis of a system through the Finite Element Method. |

Since the basis of the damage simulations performed in this work is a two-dimensional elastic finite element code, from now on we will be focusing on this kind of FEM problems.

3.2.1 Two-dimensional elasticity theory

A large variety of structures with a great interest for engineers can be studied with the hypothesis of two-dimensional elasticity. All these structures have in common an approximate right prism shape. Nonetheless, depending on the proportion of such prism dimensions and on the load configuration, the problems involving these structures can be classified in one of the following groups:

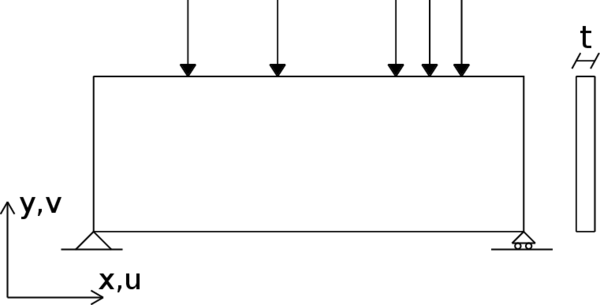

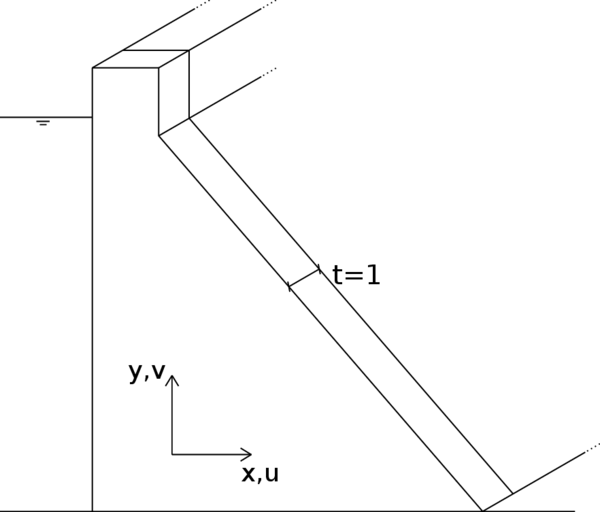

- Plane Stress Problems: one of the dimensions of the structure (thickness) is much smaller than the other two, and loads are contained in the mid-surface of that dimension (Figure 19).

- Plane Strain Problems: one of the dimensions of the structure (length) is much larger than the other two, and loads act uniformly distributed along this length, and are contained in planes orthogonal to the axis passing through the center of gravity of all cross sections (Figure 20).

|

| Figure 19: Example of a plane stress problem. |

|

| Figure 20: Example of a plane strain problem. |

3.2.1.1 Displacements, strains and stresses fields

In two-dimensional elasticity theory, a series of hypothesis are used in order to simplify the expressions defining displacements, strains and stresses. In the following lines we will be reviewing them.

The geometrical characteristics and load properties of a structure working in a plane state (plane stress or plane strain) permits stating the hypothesis that all sections perpendicular to the axis suffer identical deformations contained in its own plane. This way, considering a generic cross section contained in the plane of any of the structures in Figures 19 and 20, the displacement field of the structure can be perfectly defined if we know the displacement in the directions and for all points of that section. Thereby, the vector of displacements at any point is defined as:

|

|

(65) |

where and are the displacements of the point in the directions and , respectively.

From the displacements field (65) one can easily obtain the strains field following the general elasticity theory [51]:

|

In plane strain problems, there is the additional hypothesis that the strain is null, whereas in plane stress problems it is the stress that is zero. This makes that, in any case, it is no necessary to consider strain because it does not take part in the work of deformations since . Thereby, at any point in a plane stress or plane strain state, we can define the vector of significant strains like:

|

|

(67) |

From equations in (66) we can deduce that tangential stresses and are zero. Moreover, for the same reasons of the previous paragraph, stress does not work, and so the significant stress vector can be defined as follows:

|

|

(68) |

Once the strains and stresses have been presented, it is important to stablish the relation between them. This relation can be deduced by applying the previously stated hypothesis into the three-dimensional constitutive equation of elasticity [51]. The elastic stress-strain relation can be written like:

|

|

(69) |

where is the elastic constitutive matrix:

|

|

(70) |

For elastic isotropic materials the values in matrix are given by:

|

with being the Young's modulus, and the Poisson's ratio.

3.2.1.2 Natural coordinates and shape functions

One of the most important concepts of the Finite Element Method are the so called, shape functions. These functions are defined at each node of a finite element and allow us to obtain the value of a nodal variable at any point of the element through interpolation.

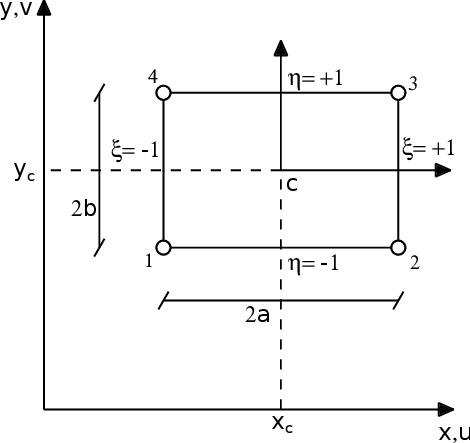

We will present the expressions of the shape functions for the quadrilateral element of four nodes, and for the triangular element of three nodes, since these are the elements that have been used in this work. To do so, we will first introduce the concept of natural coordinates, that will be used in the expressions of the shape functions.

The natural coordinates are normalized coordinates, meaning that they range from -1 to +1, like it is shown in Figure 21. From this same figure it can be deduced that:

|

|

(72) |

where and are the coordinates of the center of the element. Moreover:

|

|

(73) |

and so a differential of area is obtained like:

|

|

(74) |

and, as it will appear later on, the integral of a function over a rectangular element can be perform by means of the following transformation to the natural coordinates:

|

|

(75) |

|

| Figure 21: Geometry of a generic rectangular element. Natural and Cartesian coordinates. |

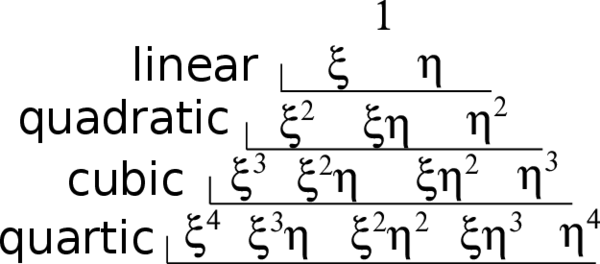

In order to properly understand the expressions of the shape functions, it is interesting to introduce the complete polynomials in two-dimensions.

The shape functions can reproduce with no error polynomial variations of order less than or equal to the order of the complete polynomial contained in those shape functions. It can be deduced then, that the higher is the order of that complete polynomial, the more accurate can be the finite element solution.

In a 2D natural coordinates system, a complete polynomial of order can be written like:

|

|

(76) |

where the number of terms of the polynomial is:

|

|

(77) |

This way, a complete linear polynomial () would be:

|

|

(78) |

An easy way to identify the terms of a 2D complete polynomial is by using the Pascal's triangle (Figure 22).

|

| Figure 22: Pascal's triangle in two dimensions. |

The shape functions of many elements (e.g. the 4-node quadrilateral) have terms of incomplete polynomials. Those terms generate nodal variables that barely contribute to the improvement of the element accuracy. Therefore, between two elements with shape functions containing complete polynomials of the same order, it is advisable to use that with less nodal variables.

That aside, all shape functions of an element must satisfy the following two conditions:

- Nodal compatibility condition

|

|

(79) |

- Rigid body condition

|

|

(80) |

where and represent node indexes, and is the number of nodes of the finite element.

At this point, we can already present the expressions of the shape functions, starting with the rectangular Lagrangian element of four nodes.

The shape functions of this element are based on polynomial interpolations of Lagrange in two dimensions. This permits defining the shape function of a generic node like the product between two uni-dimensional Lagrange polynomials in each direction of the two natural coordinates and at that node. Thereby, if we consider like the Lagrange polynomial of order in direction at node , and the one of order in direction , the shape function at that node is:

|

|

(81) |

In particular, for the 4-node rectangular Lagrangian element, the uni-dimensional Lagrange polynomials in each direction and coincide with the shape functions of the 2-node bar element. Thereby, at a node of the element we have:

|

|

(82) |

And consequently, the shape function at node results:

|

|

(83) |

The values of and can be seen in Figure 21 but are summarized in Table 4.

| Node | ||

| 1 | -1 | -1 |

| 2 | 1 | -1 |

| 3 | 1 | 1 |

| 4 | -1 | 1 |

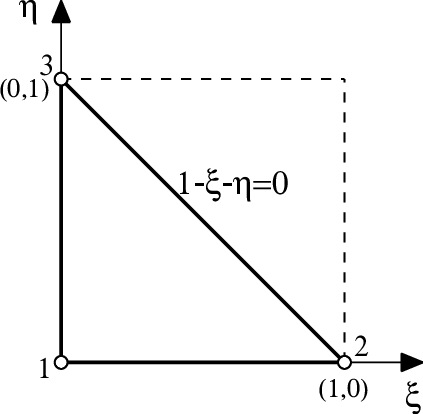

With regard to the triangular element of three nodes, we will present its shape functions also in terms of the natural coordinates, since it is how they are used in KRATOS.

If we define a natural coordinates system over the normalized geometry of a triangular element, like in Figure 23, we can formulate the shape functions for each of its three nodes like:

|

|

(84) |

|

| Figure 23: Natural coordinates in a triangular element. |

In this form, the triangular formulation becomes very similar to the quadrilateral one since, as it can be seen from figures 21 and 23, the normalized triangle is like a portion of a normalized square, which is very interesting when defining isoparametric elements.

3.2.1.3 Discretization of the displacements, strains and stresses fields

In summary, we have seen that thanks to the shape functions, one can obtain a variable at any point of the geometry through an interpolation from a limited number of nodal results.

Therefore, for a generic two-dimensional element of nodes, we can write the displacement field like:

|

|

(85) |

where and are the horizontal and vertical displacements and the shape function of node . Equations in (85) can be rewritten in matrix format like:

|

|

(86) |

or equivalently:

|

|

(87) |

where is the vector of displacements at a point of the element,

|

|

(88) |

are the matrix of shape functions of the element, and the matrix of shape functions of node , respectively, and

|

|

(89) |

are the vector of nodal displacements of the element, and the vector of nodal displacements of node .

In the case of the strain field, we can write:

|

and in matrix format:

|

|

(91) |

or

|

|

(92) |

where

|

|

(93) |

is the deformation matrix of the element, and

|

|

(94) |

is the deformation matrix of node .

Now, substituting equation (92) in the relation (69), we can express the stress vector like:

|

|

(95) |

If there were initial strains or stresses, they could be taken into account by using the following expression:

|

|

(96) |

3.2.1.4 Equilibrium equations of the discretization

The expressions of the stiffness matrix and the force vector can be obtained form of the equilibrium equations of the discretization. To do so, we are going to start from the expression of the Principle of Virtual Works applied at the equilibrium of an element.

Let us suppose that uniformly distributed forces per unit area act over the body of the element (mass forces ), and uniformly distributed forces per unit length act over one of its sides (surface forces ). Moreover, supposing that the equilibrium of the element is achieved at the nodes, we define punctual forces acting at the nodes (nodal forces of equilibrium ) that must balance the forces that appear due to the element deformation as well as the rest of acting forces.

Thereby, we can write the Principle of Virtual Works applied at the element like:

|

|

(97) |

The first term of the expression represents the work of the stresses over the virtual strains , whereas the terms on the right represent the work of the mass forces , the surface forces and the punctual forces over the virtual displacements and . and are the area and the contour of the element, and is the thickness. In plane stress problems, is the real thickness of the structure, while in plane strain is usually taken as 1.

From (87) and (92), we can write:

|

|

(98) |

Substituting (98) in (97), and rearranging terms, we obtain:

|

|

(99) |

Since the virtual displacements are arbitrary, we can write:

|

|

(100) |

Equation (100) represents the equilibrium between the nodal forces of equilibrium and the forces from the deformation of the element (first integral), the mass forces and the surface forces . If we now substitute the stresses from expression (96), we get:

|

|

(101) |

and rearranging terms:

|

|

(102) |

which can be expressed like:

|

|

(103) |

where:

|

|

(104) |

is the elastic stiffness matrix of the element, and:

|

|

(105) |

is the vector of equivalent nodal forces of the element, with:

|

|

(106) |

being the vectors of equivalent nodal forces due to initial deformations and initial stresses, respectively;

|

|

(107) |

is the vector of equivalent nodal forces due to uniformly distributed forces per unit area; and

|

|

(108) |

is the vector of equivalent nodal forces due to uniformly distributed forces per unit length.

The global equilibrium equation of the mesh is obtained by imposing that the sum of nodal forces of equilibrium at each node must equal the external nodal force:

|

|

(109) |

where the left part of the expression represents the sum of contributions of the vectors of nodal forces of equilibrium of the different elements that share the global node , and represents the vector of external punctual forces acting on such node.

Therefore, the global equilibrium equation of the mesh can be obtained by assembling the contributions of stiffness matrices and vectors of equivalent nodal forces of the different elements. The global matrix equation can be written like:

|

|

(110) |

where , and are, respectively, the stiffness matrix, the vector of nodal displacements, and the vector of equivalent nodal forces of the whole mesh.

3.2.1.5 Isoparametric elements and numerical integration

At this point, we have already obtained the equilibrium equations of the discretization, but we have not presented yet a convenient numerical technique to integrate the expressions of the stiffness matrix and the vectors of equivalent nodal forces of the elements.

The integration of the expressions of the stiffness matrix and the vectors of equivalent nodal forces, will be performed by means of Gauss-Legendre quadratures. This technique allow us to integrate any function over a normalized domain, using tabulated Gauss point coordinates and weights. However, we need to transform first the integrals over the element domain into integrals over the normalized space of the natural coordinates.

In order to do so, we must first introduce a very important concept in the Finite Element Method: the isoparametric interpolation. In this regard, we will present the isoparametric quadrilateral elements, and the isoparametric triangular elements.

The concept of isoparametric interpolation comes from the usage of the same shape functions to interpolate the geometry and the displacements. Thereby, just as we saw in (85), we can express the geometry of a two-dimensional isoparametric element from the coordinates and of its nodes like:

|

|

(111) |

where are the element shape functions. Equations in (111) relate the cartesian coordinates of a point with the natural coordinates and , which is very convenient for the transformation of the integration domain.

In this regard, in order to formulate the change of variables from cartesian coordinates to natural coordinates, we need to compute the determinant of the Jacobian matrix. This can be obtained by deriving the shape functions with respect to and . If we consider then, applying the chain rule, we have:

|

|

(112) |

or in matrix format:

|

|

(113) |

where is the Jacobian matrix of the transformation of natural coordinates to cartesian coordinates. From (113) we can derive:

|

|

(114) |

where is the determinant of the Jacobian.

The determinant of the Jacobian permits expressing the differential of area in natural coordinates [52] like:

|

|

(115) |

Now, using the isoparametric transformation (111) we can obtain the terms of the Jacobian:

|

|

and so:

|

|

(116) |

Furthermore, substituting the expressions of equation (114) in (94) we obtain the deformation matrix of an isoparametric element in terms of the natural coordinates:

|

|

(117) |

where

|

|

(118) |

Finally, by applying the previous expressions, the elastic stiffness matrix (104) can be written like an integral over the normalized domain of the natural coordinates.

For quadrilateral elements we have:

|

|

(119) |

And for triangular elements we must consider the different domain of integration (see Figure 23):

|

|

(120) |

At this point we can already use the Gauss quadrature to solve the integrals of the previous expressions. First, though, let us remind the fundamentals of the Gauss-Legendre quadrature for rectangular and triangular domains.

The integral of a generic function over the domain of natural coordinates of a quadrilateral element can be evaluated by means of a Gauss quadrature like:

|

|

(121) |

where and are the number of integration points in each direction and ; and are the natural coordinates of the integration points , and , the weights of that point corresponding to each direction.

On the other hand, the Gauss quadrature for a triangular element can be written like:

|

|

(122) |

The coordinates and weights of the integration points are tabulated. And the number of integration points must be properly chosen, taking into account that a Gauss quadrature of order in each natural direction permits integrating with no error a polynomial of order in the corresponding natural coordinate. In our case, we have been using 2x2 integration points for the quadrilateral elements of four nodes, and 1 integration point for the triangular elements of three nodes.

Now, substituting (121) in (119), we can obtain the expression of the elastic stiffness matrix for an isoparametric quadrilateral element, evaluated through numerical integration:

|

|

(123) |

And from (120) and (122) we obtain the expression corresponding to a triangular element:

|

|

(124) |

Thereby, we can see that the numerical integration of the stiffness matrix requires the evaluation of the Jacobian , its determinant , the deformation matrix , the constitutive matrix , and the thickness at each integration point.

Similarly, the computation of any of the vectors of equivalent nodal forces that imply integrals over a quadrilateral element, for instance (107), can be performed like:

|

|

(125) |

For triangular elements the double sum is replaced by the simple sum of (122).

Finally, the computation of the vector of surface forces (108) is a bit different because the integral is performed over the contour of the element . In general, such contour represents a straight line const or const in the space of natural coordinates. Therefore, for example, for a contour of an isoparametric quadrilateral element corresponding to the straight line , the differential of length is computed like:

|

|

(126) |

Substituting (126) in (108) we obtain a line integral that depends only on the natural coordinate and that can be computed through a uni-dimensional quadrature like:

|

|

(127) |

A more extended overview on the Finite Element Method can be found in [53,54].

3.3 Non-linearity Associated to Damage

In the previous section we reviewed fundamental concepts on the Finite Element Method centering our attention on the two-dimensional elasticity theory, which is the basis of the implemented damage code.

In this regard, when deriving the equilibrium equations of the discretization from the Principle of Virtual Works (97), we used an elastic stress-strain relation (69). Nonetheless, in the previous chapter we explained that in order to take into account the lost of stiffness due to damage progression, a different stress-strain relation had to be used.

If we now consider the stress-strain law (13) in voigt notation:

|

|

(128) |

and we introduce it into a general expression of the PVW (100), we obtain the following relation:

|

|

(129) |

where is the damage parameter.

Expression (129) can be rewritten like:

|

|

(130) |

where

|

|

(131) |

is the damaged or secant stiffness matrix of the element, and

|

|

(132) |

is the vector of equivalent nodal forces of the element, in which and coincide with (107) and (108), respectively.

Like we stated before, by assembling the contributions of the different elements of the mesh we can obtain the global matrix equation:

|

|

(133) |

where is the global secant stiffness matrix .

By the way, we saw in Chapter 2 that , where is the internal state variable depending on the equivalent strains . Therefore, since the strains are directly related to the displacements through (92), we can say that damage also depends on the displacements, i.e. . Taking into account this last consideration, expression (133) results:

|

|

(134) |

which is a non-linear system of equations.

Thereby, the inclusion of damage mechanics in the classical elasticity theory introduces a non-linearity that must be taken into account when solving this kind of problems. In this section we are going to review how we solve such non-linear system of equations, but before let us introduce the fundamentals of non-linear problems that will help us understand the results of the simulations performed.

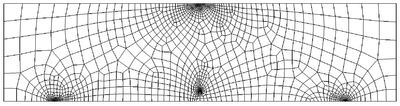

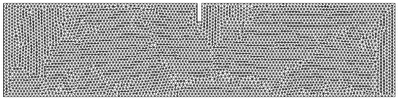

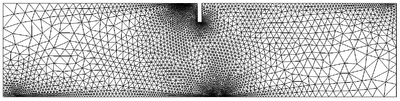

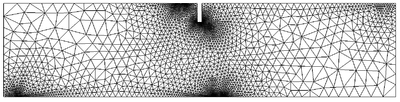

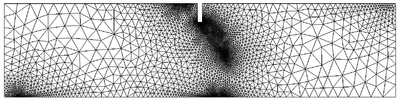

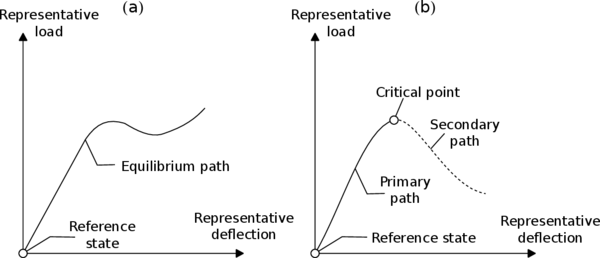

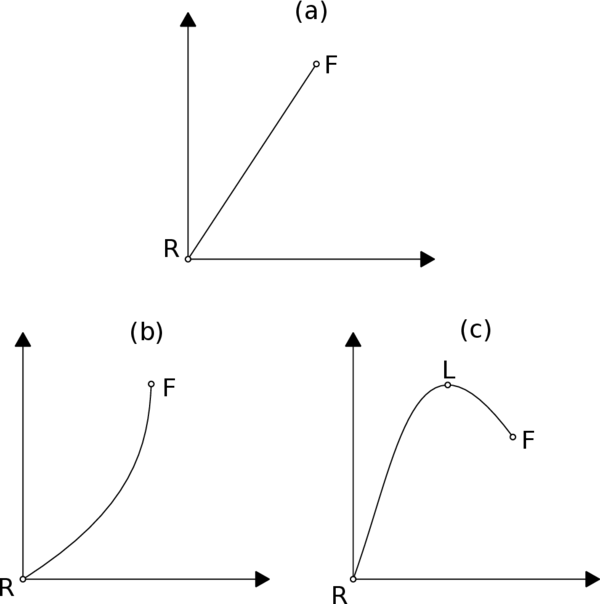

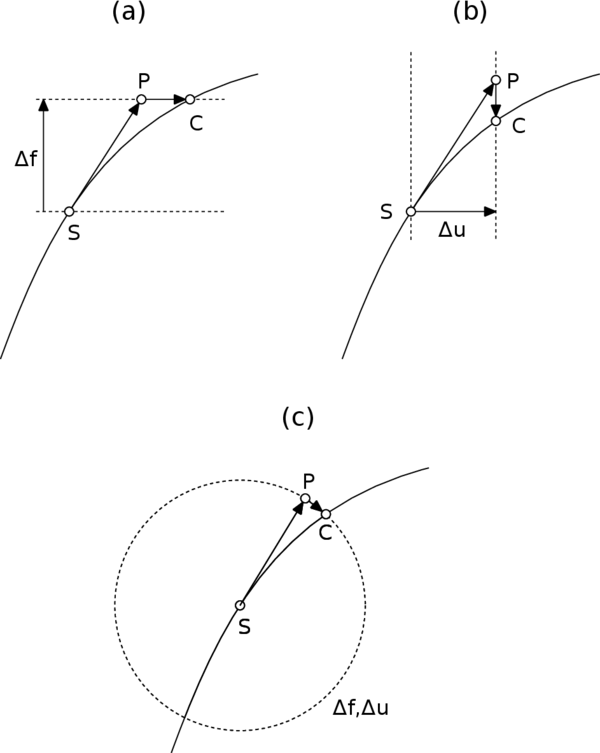

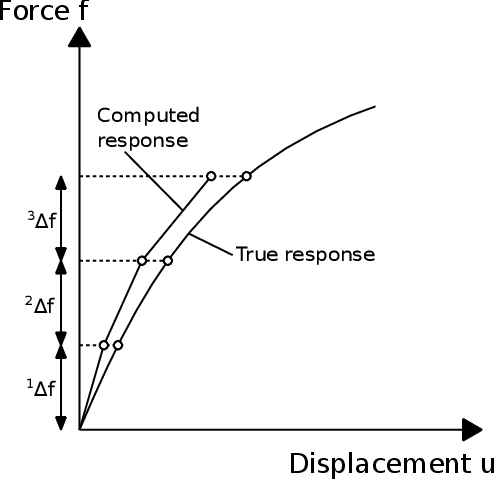

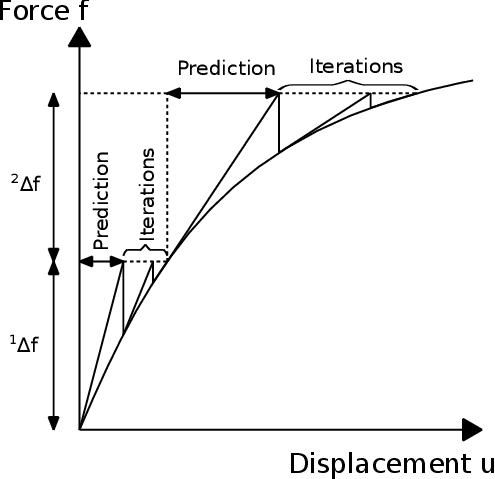

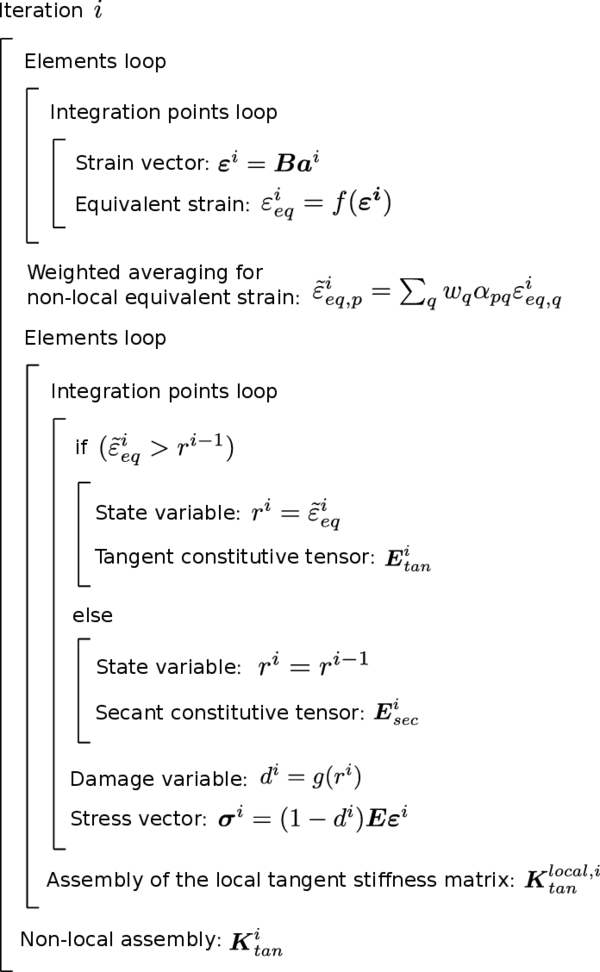

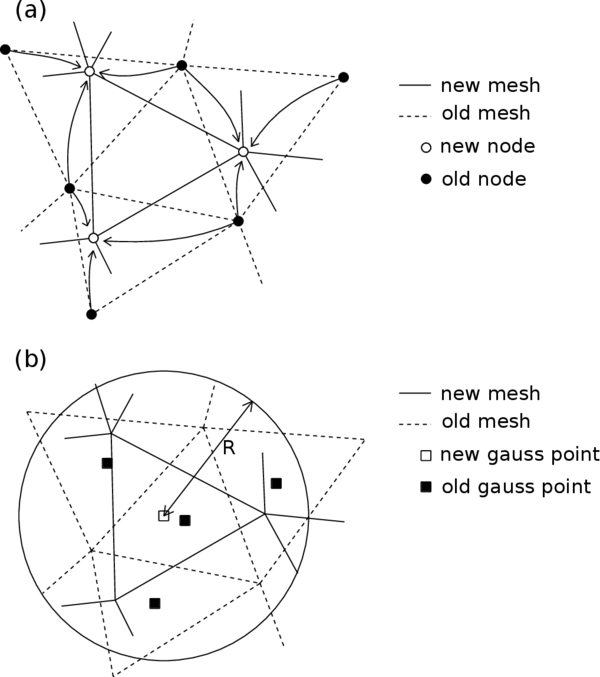

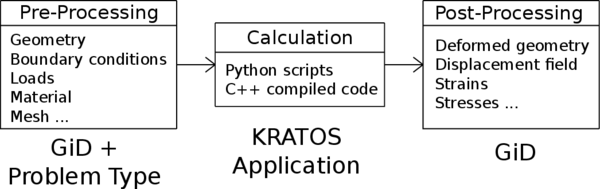

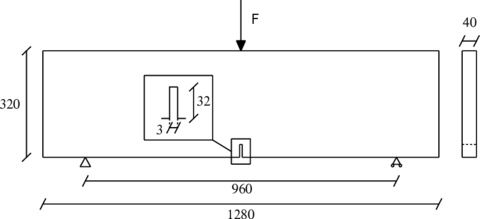

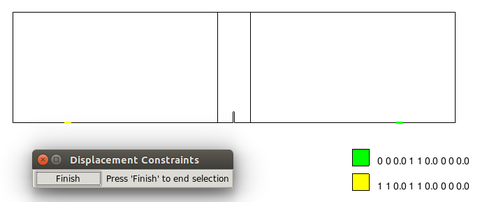

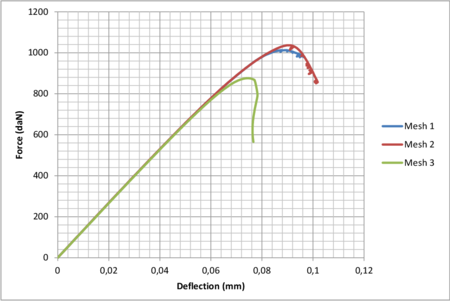

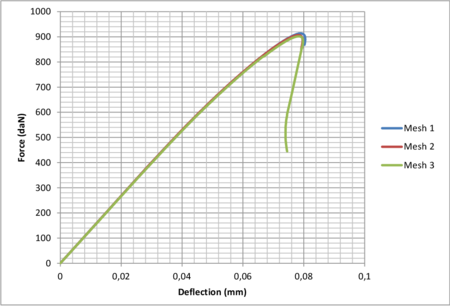

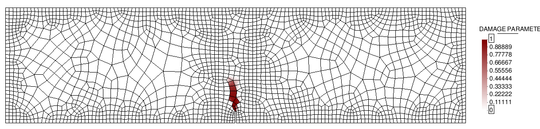

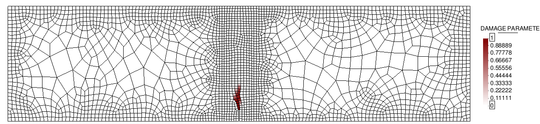

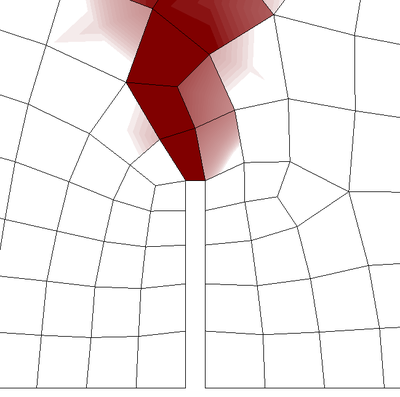

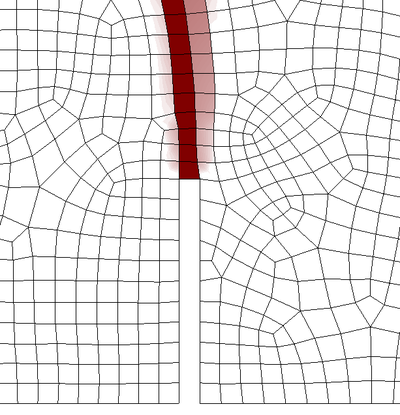

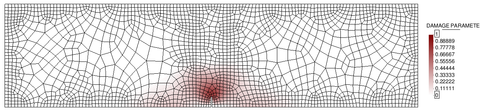

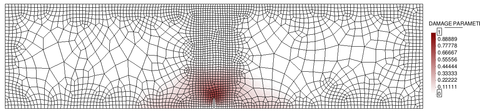

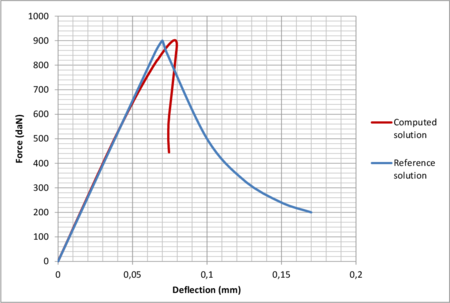

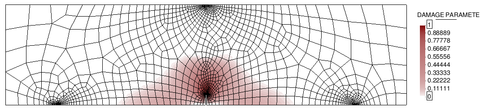

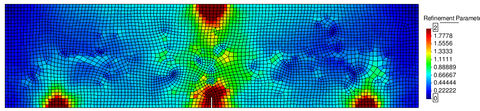

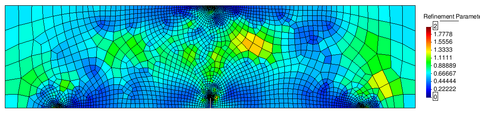

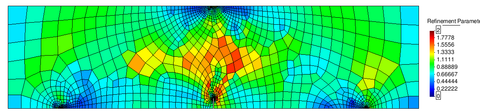

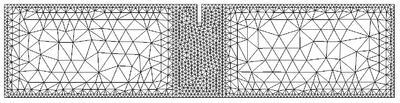

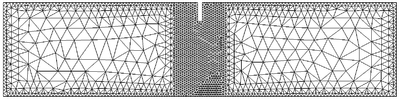

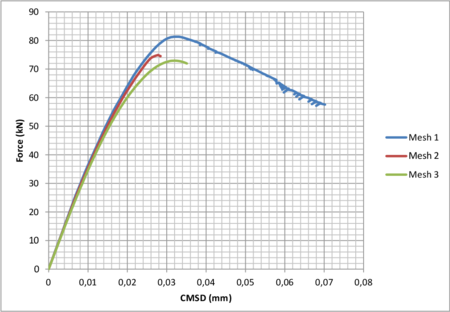

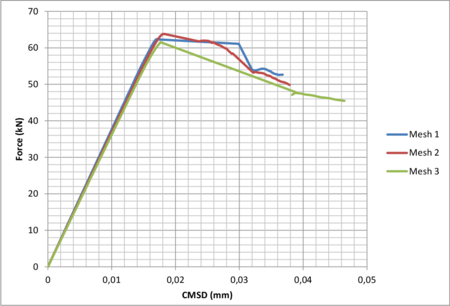

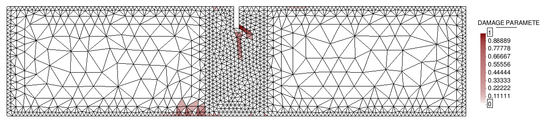

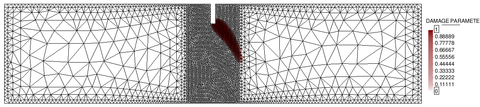

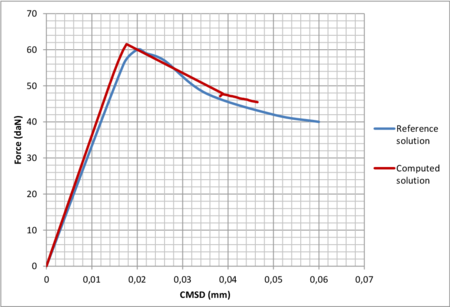

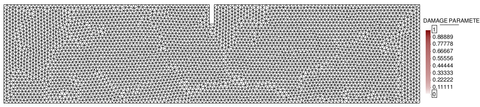

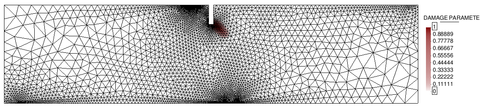

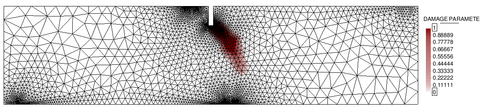

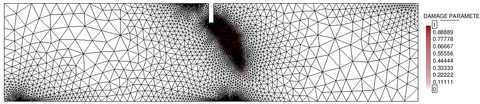

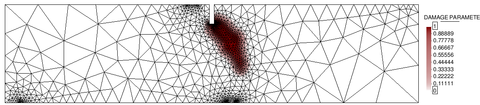

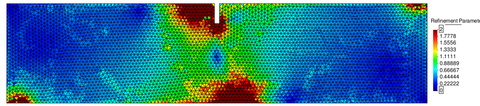

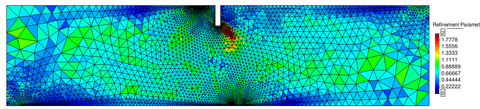

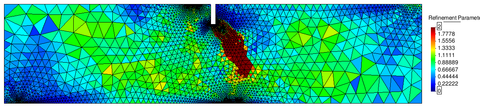

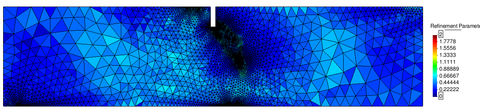

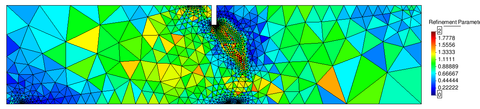

3.3.1 Fundamentals of non-linear problems