Abstract

The development of high-speed train lines has increased significantly during the last twenty-five years, leading to more demanding loads on railway infrastructures. Most of these infrastructures were constructed using railway ballast, a layer of granular material placed under the sleepers whose roles are to resist vertical and horizontal loads and to withstand climatic action. Moreover, the Discrete Element Method was found to be an effective numerical method for the calculation of engineering problems involving granular materials. For these reasons, the main objective of the work is the development of a numerical modelling tool based on the Discrete Element Method which allows users to better understand the mechanical behaviour of railway ballast.

The first task was the review of the specifications that ballast material must meet. Then, the features of the available Discrete Element code, called DEMPack, were analysed. After these reviews, it was found that the code needed some improvement in order to reproduce the behaviour of railway ballast correctly and efficiently. The main deficiencies identified in the numerical code were related to the contact between discrete element particles and planar boundaries and to the geometrical representation of such an irregular material as ballast.

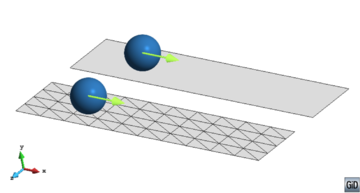

Contact interactions between rigid boundaries and Discrete Elements are treated using a new methodology called the Double Hierarchy method. This new algorithm is based on characterising contacts between rigid parts (meshed with a Finite Element-like discretisation) and spherical Discrete Elements. The procedure is described in the course of the work, together with the validation of the method and the assessment of its limitations.

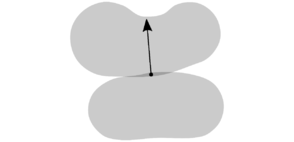

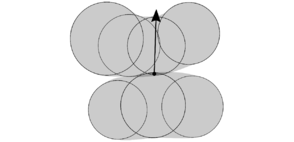

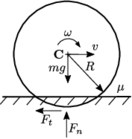

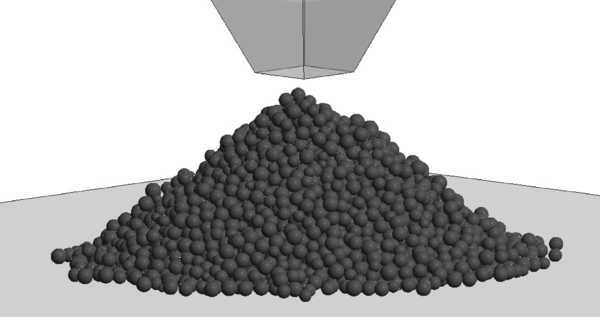

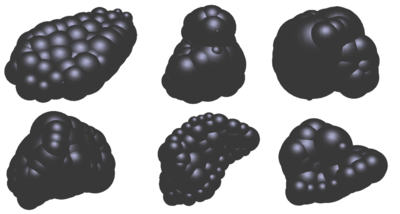

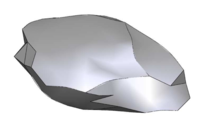

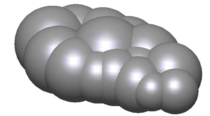

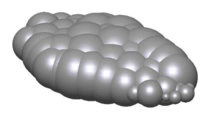

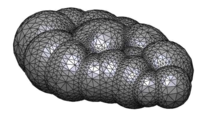

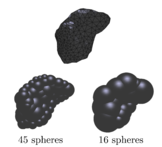

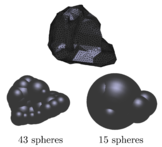

The representation of irregular particles using the Discrete Element Method is a very challenging issue, which has led to different geometrical approaches. In this work, an in-depth review of those approaches was performed. Finally, the most appropriate methods were chosen: spheres with rolling friction and clusters of spheres. The main advantage of spheres is their low computational cost, while clusters of spheres stand out for their geometrical versatility. Some improvements were developed for describing the movement of each kind of particle, specifically the imposition of rolling friction and the integration of the rotation of clusters of spheres.

In the course of this work, the way to fill volumes with particles (spheres or clusters) was also analysed. The aim is to properly control the initial granulometry and compactness of the samples used in the calculations.

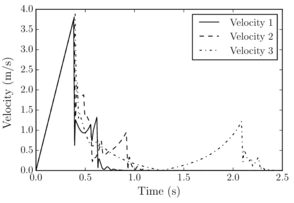

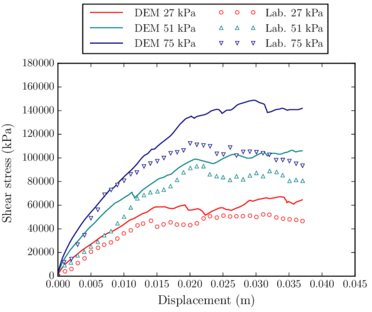

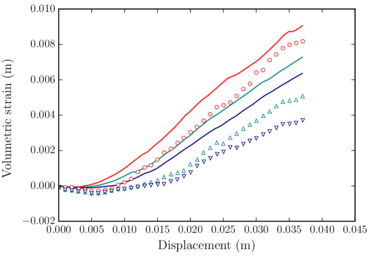

After checking the correctness of the numerical code with simplified benchmarks, some laboratory tests for evaluating railway ballast behaviour were computed. The aim was to calibrate the ballast material properties and validate the code for the representation of railway ballast. Once the material properties were calibrated, some examples of a real train passing over a ballasted railway track were reproduced numerically. These calculations demonstrated the capabilities of the implemented tool.

This publication is a revised version of the text of the PhD Thesis “Numerical analysis of railway ballast behaviour using the Discrete Element Method” by Joaquín Irazábal, presented at the Technical University of Catalonia on October 6th 2017.

Acknowledgements

I would like to thank all the people who contributed in one way or another to the achievement of this work.

First of all, I want to express my gratitude to my PhD advisor, Prof. Eugenio Oñate, for his support throughout these years. It has been a pleasure to work with him.

I especially want to thank Fernando Salazar, the Director of CIMNE Madrid. He has been not only my boss but also my colleague. Without his invaluable help and advice this work would not be what it is. I also want to thank my other colleagues in the CIMNE Madrid office, David and Javier, for the good times we have spent and for letting me share my experiences with them, always giving me useful opinions.

Thanks to Miguel Ángel and Salva for their cooperation and assistance and also for making me feel like one more of the team when I go to Barcelona. I cannot forget to thank the other DEM Team members, Guillermo and Ferran, for their outstanding work, which has been fundamental for the code developments implemented during this work. Thanks to Miquel Santasusana for making our collaboration so enjoyable; we shared an article and many hours of work, but also great moments out of the office. I should also thank Pablo for his patience with me during my first months in CIMNE, helping me to start understanding KRATOS; Antonia, who has always helped me since I started studying the Master; and the colleagues in CIMNE Cuba, Roberto and Carlos, who assisted me from the moment I asked for help. I also want to thank Merce for her kind help with the difficult administrative issues. Maybe because I am in Madrid I am missing some people in Barcelona, but my intention is to thank everybody belonging to the CIMNE family.

Last but not least, to all my friends and family, especially my parents, Juanjo and Charo, and my sister, Lucía, for always being at my side. Finally, I would like to thank the person with whom I have shared the most time and experiences over the last ten years: thanks, Patry, for supporting me every day but above all for making me happy.

This work was carried out with the financial support of CIMNE via the CERCA Programme / Generalitat de Catalunya and the Spanish MINECO within the BALAMED (BIA2012-39172) and MONICAB (BIA2015-67263-R) projects.

Summary

The development of high-speed lines has increased significantly over the last twenty-five years, giving rise to more demanding loads on railway infrastructures. Most of these infrastructures are built with ballast, a layer of granular material placed under the sleepers whose main functions are to resist vertical and horizontal loads, distributing them over the platform, and to withstand climatic actions. In addition, the Discrete Element Method was found to be very effective for the calculation of engineering problems involving granular materials. For these reasons, it was decided that the main objective of the thesis would be the development of a numerical modelling tool based on the Discrete Element Method that allows users to better understand the mechanical behaviour of railway ballast.

The first task was the review of the specifications that ballast must meet. Next, the features of the available Discrete Element code, called DEMPack, were analysed. After these reviews, it was found that the code needed some improvement in order to reproduce the behaviour of railway ballast correctly and efficiently. The main deficiencies identified in the numerical code are related to the contact between particles and planar boundaries and to the geometrical representation of a material as irregular as ballast.

Contacts between rigid boundaries and discrete elements are treated using a new methodology called the Double Hierarchy method. This new algorithm is based on the characterisation of contacts between rigid elements (discretised in a way similar to finite elements) and spherical discrete elements. The contact search and characterisation procedure is described in detail throughout the thesis. In addition, the validation of the method is shown and its limitations are assessed.

The representation of irregular particles using the Discrete Element Method can be tackled from different geometrical approaches. In this work, a review of these approaches was carried out. Finally, the most suitable methods were chosen: spheres with rolling resistance and clusters of spheres. The main advantage of using spheres is their low computational cost, while clusters of spheres stand out for their geometrical versatility. Some improvements have been developed to describe the movement of each type of particle, specifically the imposition of rolling resistance and the integration of the rotation of clusters of spheres.

In the course of this work, the way to fill volumes with particles (spheres or clusters) was also analysed. The aim is to adequately control the initial granulometry and compactness of the samples used in the calculations.

After checking the behaviour of the numerical code with simplified tests, some laboratory tests with railway ballast were emulated numerically. The aim was to calibrate the ballast properties and validate the code to represent its behaviour accurately. Once the material properties had been calibrated, some examples of a train passing over a ballasted track were reproduced numerically. These calculations demonstrate the capabilities of the implemented numerical tool.

1 Introduction

The railway line system plays an important role in the transport network of any country, and its maintenance is essential. Among other solutions, ballast is traditionally used to support railway tracks as it is relatively inexpensive and easy to maintain [1].

Before the advent of high-speed trains, more attention was given to the track superstructure (consisting of rails, fasteners and sleepers) than to the substructure (consisting of ballast, subballast and subgrade). However, the maintenance cost of the substructure is far from negligible [2], and a better knowledge of railway ballast behaviour may allow it to be used more efficiently, saving money and increasing rail transport safety.

Besides the importance of studying ballast properties in economic and safety terms, it should be pointed out that a new variable has appeared in the last two decades: the increase in train speed. High-speed trains have improved people's mobility all around the world, at the cost of increasing vibrations and loads on the railway track [3], making its behaviour more uncertain.

Figure 1: High-speed train travelling over a ballasted railway track.

Efficiency, safety and the more demanding loads and vibrations have raised interest in studying railway infrastructures in depth. Within those infrastructures, the ballast layer is one of the elements whose behaviour is most uncertain, due to its granular nature. For this reason, laboratory tests on railway ballast are being performed by different construction companies and research teams. However, real tests are difficult and expensive to carry out. In addition, some parameters cannot be easily measured in the laboratory.

To overcome these problems, the calculation of railway ballast and other granular materials is being addressed by means of the development of different computational methods. Regarding the computational analysis, granular materials can be considered either as discrete individuals that interact through proper contact laws, or as a continuum, whose behaviour is governed by some constitutive law.

1.1 Numerical analysis of granular materials

Although, in general, granular materials can be defined as large collections of rigid or deformable bodies that interact with one another [4], they can be classified according to various criteria. Grain sizes range from nanoscale powders to groups of stones; some materials have rounded particles while others have very sharp ones; and some are very compacted while in others the particles are dispersed. Because of these and other characteristics, granular materials show no single predictable behaviour, and their calculation must be addressed from different numerical perspectives depending on their properties.

In this particular case, the interest lies in the calculation of railway ballast behaviour. Railway ballast refers to the layer of crushed stones placed between and underneath the sleepers whose purpose is to provide drainage and structural support for the dynamic loading applied by trains. Railway ballast forms a dense collection of particles with relatively large grain size, as compared to the depth of the ballast bed. It typically works under high frequency loads exerted by high-speed trains travelling over the rails.

Traditionally, complex geomechanical problems were addressed using refined constitutive models based on continuum assumptions. Although these models may be accurate in the evaluation of the critical state of soils [5,6,7,8], or the flow of bulk material masses [9], they are not able to represent local discontinuities, which typically play a fundamental role in the behaviour of granular materials. This discontinuous nature induces special features such as anisotropy or local instabilities, which are difficult to understand or model based on the principles of continuum mechanics [10].

The most natural choice to represent granular materials is the use of discrete techniques, intrinsically appropriate to account for their discontinuity and heterogeneity. The two principal approaches within the discrete framework are: Non-smooth and Smooth Discrete Element Methods [11].

Within Non-smooth Discrete Element Methods, interactions are described by shock laws and Coulomb friction, written as non-differentiable relations. Meanwhile, Smooth Discrete Element Methods describe the interactions between grains by regular functions involving gaps, relative velocities and reaction forces.

The most popular Non-smooth discrete element methods are:

- Event Driven (ED) methods, which are used for collections of rigid bodies distanced from each other that collide by pairs (these methods assume that the contact time is implicitly zero). Collisions are governed by shock laws with restitution coefficients, not always considering friction [12].

- Non-Smooth Contact Dynamics (NSCD) method, which is normally applied to dense collections of rigid or deformable bodies. Contact laws are described by non-continuous functions within the frame of convex analysis. Time integration is carried out using implicit schemes, contrary to ED methods [13,14].

Smooth Discrete Element Methods can also be addressed by different approaches, where two methods can be highlighted:

- Molecular Dynamics (MD) considers grains as rigid particles subjected to contact forces calculated from potential functions. Since this method was originally devised to deal with colliding gas particles, particle rotation is neglected. Explicit time integration schemes, together with simple interaction modelling, allow fast flows of data to be managed for numerous collections of particles.

- Discrete Element Method (DEM) results from adding rotational degrees of freedom and contact mechanisms to MD [15]. In the method, presented by Cundall and Strack [16] in 1979, each material grain is treated as a rigid particle. The deformation of the material is represented by the interaction between the particles, which are allowed to overlap slightly. The normal and tangential contacts between the rigid particles define the constitutive behaviour of the material. The dynamic equation is solved by means of explicit integration schemes.
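The DEM idea described in the last item can be illustrated with a minimal one-dimensional sketch: two rigid spheres approach each other, a normal contact force appears only while they overlap, and the motion is advanced with an explicit scheme. The linear spring law, the symplectic Euler integrator and all numerical values are assumptions chosen for illustration; this is not the DEMPack implementation, and tangential forces, rotation and damping are left out.

```python
from dataclasses import dataclass


@dataclass
class Sphere:
    x: float       # centre position along the line (m)
    v: float       # velocity (m/s)
    radius: float  # radius (m)
    mass: float    # mass (kg)


def overlap(a: Sphere, b: Sphere) -> float:
    """Geometric overlap between two spheres (negative when separated)."""
    return (a.radius + b.radius) - abs(b.x - a.x)


def normal_force(a: Sphere, b: Sphere, stiffness: float) -> float:
    """Repulsive force magnitude from a linear spring acting on the overlap."""
    delta = overlap(a, b)
    return stiffness * delta if delta > 0.0 else 0.0


def step(a: Sphere, b: Sphere, stiffness: float, dt: float) -> None:
    """Advance both spheres by one explicit step (symplectic Euler)."""
    force = normal_force(a, b, stiffness)
    direction = 1.0 if b.x >= a.x else -1.0  # 1D unit vector from a to b
    a.v += (-direction * force / a.mass) * dt  # a is pushed away from b
    b.v += (direction * force / b.mass) * dt   # b is pushed away from a
    a.x += a.v * dt
    b.x += b.v * dt


def simulate_collision():
    """Head-on collision of two identical spheres (hypothetical values)."""
    a = Sphere(x=0.0, v=1.0, radius=0.025, mass=0.2)
    b = Sphere(x=0.06, v=-1.0, radius=0.025, mass=0.2)
    stiffness = 1.0e6  # N/m, assumed contact stiffness
    dt = 1.0e-6        # s, well below the period of the contact spring
    max_overlap = 0.0
    for _ in range(20000):
        step(a, b, stiffness, dt)
        max_overlap = max(max_overlap, overlap(a, b))
    return a, b, max_overlap


if __name__ == "__main__":
    a, b, max_overlap = simulate_collision()
    print(f"final velocities: {a.v:.4f} m/s, {b.v:.4f} m/s")
    print(f"maximum overlap: {max_overlap * 1000:.3f} mm")
```

With these values the spheres rebound with nearly reversed velocities and the maximum overlap stays small compared with the radius, which is the regime in which the rigid-particle-with-overlap assumption is meant to hold.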

The main features of the four mentioned methods are summarised in Table 1.

| Feature | ED (non-smooth) | NSCD (non-smooth) | MD (smooth) | DEM (smooth) |
|---|---|---|---|---|
| Valid for dense collections of particles | ⨉ | ✓ | ⨉ | ✓ |
| Valid for loose collections of particles | ✓ | ⨉ | ✓ | ✓ |
| Contact mechanism accurately characterised | ⨉ | ✓ | ⨉ | ✓ |
| Allows multiple contacts | ⨉ | ✓ | ✓ | ✓ |
| Implicit scheme for time integration | ⨉ | ✓ | ⨉ | ⨉ |
| Explicit scheme for time integration | ✓ | ⨉ | ✓ | ✓ |

DEM can be used for the calculation of loose collections of particles, but the computational time would be very high compared with the ED or MD methods.

In this research, ED and MD methods are discarded because they are not conceived for the calculation of dense collections of particles and the contact mechanism in both methods is not accurately treated. Between DEM and NSCD the main difference is the use of explicit and implicit time integration schemes respectively. Explicit algorithms are more straightforward than implicit ones, but their computational stability limits the calculation time step. On the other hand, the time step for implicit schemes is not limited by the system stability, at the cost of a higher computational demand.

Many studies [17,18,19] tried to evaluate which of DEM or NSCD is better for the calculation of dense collections of particles. In all of them the conclusion is that it depends on the case study, so there is not a general rule to choose one or another.

In this particular case, considering that high-speed trains exert high-frequency loads on the ballast bed, the use of explicit time integration schemes could be computationally advantageous [20,21]. These schemes do not need any additional term to take into account the frequency of the input load. To capture the dynamic response correctly, the calculation time step should be several times smaller than the period of the input load. Under this condition, explicit integration yields sufficient accuracy and the time step is generally below its stability limit. Another important advantage of explicit integration is that the code is easier to parallelise and that linearisation and the use of system solvers are avoided. These considerations lead to the conclusion that, although NSCD is an interesting method for addressing this problem, the DEM is more appropriate.
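The two conditions on the time step mentioned above, stability of the explicit scheme and resolution of the load period, can be compared with a short sketch. It assumes the textbook stability limit of central-difference-type schemes for an undamped linear spring-mass system, Δt_cr = 2/ω = 2·sqrt(m/k). The safety factor, the number of steps per load period and the function names are hypothetical choices made here, not values taken from this work.

```python
import math


def critical_time_step(mass: float, stiffness: float) -> float:
    """Stability limit 2/omega of an undamped linear spring-mass system."""
    return 2.0 * math.sqrt(mass / stiffness)


def choose_time_step(mass: float, stiffness: float, load_frequency_hz: float,
                     safety_factor: float = 0.1, steps_per_period: int = 20):
    """Return (time step, stability bound, accuracy bound), all in seconds.

    The stability bound is a fraction of the critical time step; the
    accuracy bound divides the load period into `steps_per_period` steps.
    Both parameters are illustrative assumptions.
    """
    dt_stability = safety_factor * critical_time_step(mass, stiffness)
    dt_accuracy = (1.0 / load_frequency_hz) / steps_per_period
    return min(dt_stability, dt_accuracy), dt_stability, dt_accuracy


if __name__ == "__main__":
    # Hypothetical particle mass (kg), contact stiffness (N/m) and load frequency (Hz).
    dt, dt_stab, dt_acc = choose_time_step(0.2, 1.0e7, 30.0)
    print(f"stability bound: {dt_stab:.3e} s")
    print(f"accuracy bound:  {dt_acc:.3e} s")
    print(f"chosen step:     {dt:.3e} s")
```

Taking the smaller of the two bounds guarantees that the chosen step satisfies both requirements; which one governs depends on the particle mass, the contact stiffness and the load frequency of the case at hand.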

1.2 Objectives

This work falls within the framework of two research projects funded by the Spanish Ministry of Economy, Industry and Competitiveness (MINECO):

- BALAMED (BIA2012-39172): Numerical modelling of rail-sleeper-ballast interaction using the Discrete Element Method.

- MONICAB (BIA2015-67263-R): Numerical modelling of sand-fouled ballast in high-speed railways.

The BALAMED project aimed to develop a numerical tool, based on the DEM, for calculating the response of the rail-sleeper-ballast system under the loads of high-speed trains. It started in 2013 and finished in 2015. Meanwhile, the MONICAB project started in 2016 and will finish in 2018. Its main objective is the development of a numerical modelling tool, based on the DEM, for the analysis of the behaviour of sand-fouled railway ballast.

This thesis presents most of the progress made within those projects. The research was conducted in parallel with the improvements made to the open-source DEM code developed at CIMNE (1), called DEMPack (www.cimne.com/dempack/). The data structures and algorithms used in DEMPack are implemented through the Kratos Multiphysics software suite [22], an open-source framework for the development of numerical methods for solving multidisciplinary engineering problems.

Figure 2: DEMpack logo.

From the above, the general objective of this thesis can be stated as follows: to develop a numerical tool based on the DEM, within DEMPack, able to reproduce ballast behaviour, including its interaction with other structures. To reach this goal, the following partial objectives should be fulfilled:

- Conduct a literature review on railway ballast, studying its physical properties, and the specifications it should meet. The aim is to determine the necessities of a numerical code able to reproduce railway ballast behaviour.

- Identify the limitations of the available DEM code for reproducing railway ballast, such as the interaction between Discrete Elements (DEs) and rigid boundaries and the geometrical representation of irregular particles.

- Implement an accurate and efficient method to search neighbours and evaluate forces in calculations involving DEs and rigid boundaries.

- Evaluate the alternatives for the geometrical representation of granular materials. Choose the most suitable approaches for replicating railway ballast behaviour taking into account accuracy and computational efficiency.

- Explore and define suitable methodologies to generate samples of granular material with the desired granulometry, particle shape and compaction.

- Develop contact constitutive models for railway ballast particles interaction, taking into account the singularities of each geometrical approach chosen.

- Calibrate and validate the contact constitutive models by reproducing different laboratory tests previously identified.

- Finally, verify the usefulness of the numerical code by calculating full-scale cases in which a train passes over different ballasted track sections.

(1) Centre Internacional de Metodes Numerics en Enginyeria.

1.3 Outline

The work is divided into eight chapters, including this one:

- Chapter 2 introduces the literature review on railway infrastructures, focusing mainly on railway ballast. The aim is to have as much information as possible to define the material properties in the most accurate manner. In this chapter, laboratory tests for the calibration and validation of the numerical code are also reviewed.

- Chapter 3 describes the basic aspects of the DEM with spherical particles, including the neighbour search and force evaluation strategies followed, the contact constitutive models used and the time integration schemes adopted.

- Chapter 4 is dedicated to the strategy followed to detect and solve contact between spherical particles and planar surfaces. The novel Double Hierarchy Method for contact with rigid boundaries is described. Validation examples and an analysis of its limitations are also presented.

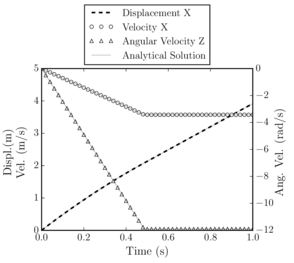

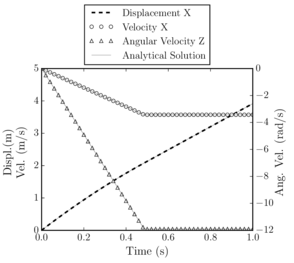

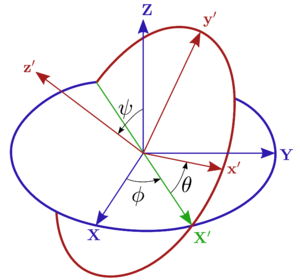

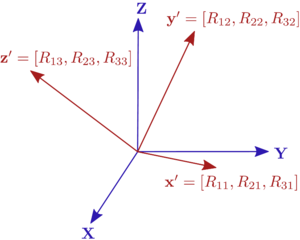

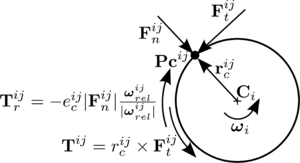

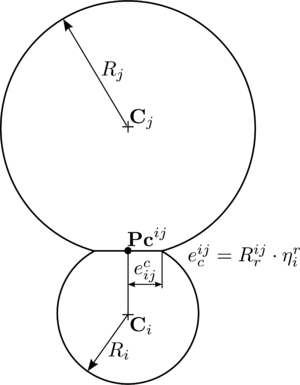

- Chapter 5 presents the geometric alternatives for representing railway ballast particles within the DEM. The two approaches finally chosen were spherical particles and sphere clusters. Special features regarding the rotation of spherical and non-spherical particles are described in detail, together with the new developments carried out during this research. The main improvements implemented concern the application of rolling friction to spherical particles and the integration of the rotational motion of non-spherical particles. The validation procedure for those improvements is also presented.

Regarding particles arrangement, the generation of compacted groups of spherical particles and sphere clusters is also addressed in Chapter 5.

- Chapter 6 starts with the review of the material properties needed to accurately define railway ballast in the numerical calculations. Then, aiming to calibrate and validate the numerical code, some laboratory tests are reproduced.

- Chapter 7 shows the calculation of a real train passing over different ballasted track sections: the first one correctly compacted, the second including a bump and the third laterally eroded.

- Finally, Chapter 8 refers to the conclusions that can be drawn from this research and the future work that can be derived from its findings.

1.4 Related publications

1.4.1 Articles in scientific journals

- M. Santasusana, J. Irazábal, E. Oñate, J.M. Carbonell. The double hierarchy method. A parallel 3D contact method for the interaction of spherical particles with rigid FE boundaries using the DEM, Comp Part Mech 3 (3) (2016) 407–428.

- J. Irazábal, F. Salazar, E. Oñate. Numerical modelling of granular materials with spherical discrete particles and the bounded rolling friction model. Application to railway ballast. Comput Geotech 85 (2017) 220–229.

1.4.2 Communications in congresses

- M. Santasusana, E. Oñate, J.M. Carbonell, J. Irazábal, P. Wriggers. Combined DE/FE Method for the simulation of particle-solid contact using a Cluster-DEM approach, 4th International Conference on Computational Contact Mechanics (ICCCM 2015), Hannover.

- J. Irazábal, F. Salazar, E. Oñate. Numerical modelling of railway ballast behaviour using the Discrete Element Method (DEM) and spherical particles, IV International Conference on Particle-Based Methods (Particles 2015), Barcelona.

- M. Santasusana, E. Oñate, J.M. Carbonell, J. Irazábal, P. Wriggers. A Coupled FEM-DEM procedure for nonlinear analysis of structural interaction with particles, IV International Conference on Particle-Based Methods (Particles 2015), Barcelona.

- J. Irazábal, F. Salazar, E. Oñate. Geometrical representation of railway ballast using the Discrete Element Method (DEM), 7th European Congress on Computational Methods in Applied Sciences and Engineering (ECCOMAS Congress 2016), Crete Island.

- M. Santasusana, J. Irazábal, E. Oñate, J.M. Carbonell. Contact Methods for the Interaction of Particles with Rigid and Deformable Structures using a coupled DEM-FEM procedure, 7th European Congress on Computational Methods in Applied Sciences and Engineering (ECCOMAS Congress 2016), Crete Island.

- J. Irazábal, F. Salazar, E. Oñate. Shape characterisation of railway ballast stones for discrete element calculations, V International Conference on Particle-Based Methods (Particles 2017), Hannover.

2 Introduction to railway tracks

This chapter is divided into three sections. Section 2.1 presents a brief introduction to railway infrastructures and a review of their components, from the superstructure to the substructure, focusing on the ballast layer. Section 2.2 describes the main features of ballast and sleepers. Finally, section 2.3 reviews the state of the art of the laboratory tests used to evaluate railway ballast behaviour.

2.1 Railway infrastructures

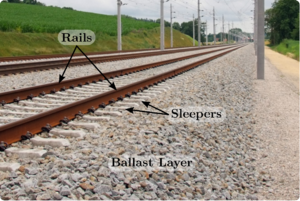

Railway infrastructures can be split into two parts: the superstructure, which transmits the train loads to the substructure, and the substructure, which distributes those loads to the soil. The superstructure is directly in contact with the train wheels, while the substructure is in contact with the superstructure via the sleepers, which are embedded in the ballast layer as shown in Figure 3.

Figure 3: Scheme showing the position of the rails, the sleepers and the ballast layer.

2.1.1 Railway substructure

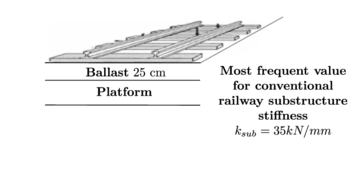

Traditionally, the cross-section of the substructure of conventional railway tracks consisted of a simple ballast layer over the platform. Besides distributing traffic loads, the ballast layer contributes to rainwater drainage, protection of the platform against moisture variations, longitudinal and lateral stabilisation, and damping of high loads [23].

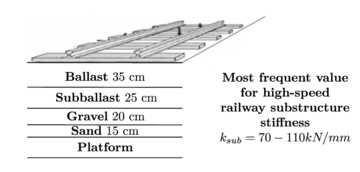

The typical cross-sections of conventional and high-speed railway tracks are presented in Figure 4. The differences between them are due to the more demanding loads exerted by high-speed trains. Although the function of the ballast layer is the same in both types of tracks, in high-speed railway infrastructures the ballast layer is thicker and more layers of various materials (subballast, gravel and sand) are placed over the platform, in order to distribute train loads more efficiently and prevent damage to the platform due to erosion. These differences lead to a higher stiffness of the substructure in high-speed railway tracks.

Figure 4: Typical cross-section of conventional and high-speed railway tracks: (a) conventional railway substructure; (b) high-speed railway substructure. Scheme including typical values for the vertical stiffness of the platform and subgrades (). Source: López Pita [28].

2.1.2 Railway superstructure

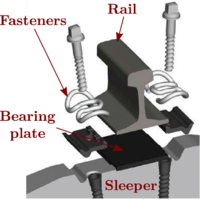

The primary role of the railway superstructure is to transmit loads from the train wheels to the substructure. Figure 5 shows its main components.

- Rails are directly responsible for supporting passing vehicles. Modern rails are typically manufactured from hot-rolled steel due to the high stresses they have to bear. Their weight is usually about 60 kg/m [24].

- Fasteners are elements that fix the rails to the sleepers. There are four per sleeper, which corresponds to two per rail.

- Bearing plates are elastic pads placed between the sleepers and the rails that provide greater vertical elasticity to the whole structure.

- Sleepers are fixed to the rails through the fasteners and are directly in contact with the ballast layer. The main features of sleepers are discussed in detail in section 2.2.

Figure 5: Superstructure configuration.

In addition to the components shown in Figure 5, another significant element of the superstructure is the rubber sole. Rubber soles are elastic elements located under the sleepers to modify track stiffness and increase elasticity. They are normally used in viaducts, where track elasticity should be increased in order to avoid rapid damage to the track.

2.1.3 Railway geometrical quality

When a train travels over the track, it has three degrees of freedom corresponding to the displacement along its principal axes: longitudinal (travelling direction), vertical and lateral. In addition, the train has three further degrees of freedom corresponding to the rotation about those axes: roll (about the longitudinal axis), yaw (about the vertical axis) and pitch (about the lateral axis). Figure 6 shows a diagram of those movements.

Figure 6: Train translational and rotational degrees of freedom. The vehicle travels in the longitudinal direction.

These displacements and rotations produce a set of loads that have to be taken into account when designing the railway track. Vertical loads are the main criterion for designing the components of the track and lateral loads determine the maximum vehicle speed, whereas longitudinal loads can cause horizontal buckling of the track.

The high loads to which the railway track is subjected, in any of the three directions, can trigger defects in the railway infrastructure. To measure the impact of these defects in terms of safety and comfort, it is important to quantify the track quality, which can be done by means of the following parameters [25]:

- Vertical alignment: this parameter defines the variations in height of the rail upper surface, relative to a reference plane.

- Cross level: this parameter is the difference in height between the upper surfaces of the two rails in a section normal to the track axis.

- Track gauge: this parameter is the distance between the active faces of the rail heads, measured 14 mm below the running surface.

- Horizontal alignment: this parameter represents, for each rail, the deviation in plan view from the theoretical alignment.

- Track twist: this parameter represents the distance between a point of the track and the plane formed by three other points belonging to the same track. It has an impact on possible derailments.

Figure 7 shows schematically some of these defects.

![Typical railway track alignment defects. Source: López Pita [28].](/wd/images/thumb/8/81/Draft_Samper_552093892-test-Ch2Fig5.png/600px-Draft_Samper_552093892-test-Ch2Fig5.png)

Figure 7: Typical railway track alignment defects. Source: López Pita [28].
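Two of the track quality parameters listed above have a direct geometric definition and can be sketched in a few lines: cross level as a height difference, and track twist as the distance from one rail point to the plane through three others. The coordinate convention (x longitudinal, y lateral, z vertical), the function names and the sample coordinates are assumptions made for this illustration and do not come from the cited standard.

```python
import math


def cross_level(z_left: float, z_right: float) -> float:
    """Height difference between the two rail top surfaces in one section."""
    return z_left - z_right


def _sub(a, b):
    """Component-wise difference of two 3D points."""
    return tuple(ai - bi for ai, bi in zip(a, b))


def _cross(a, b):
    """Cross product of two 3D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def track_twist(point, p1, p2, p3) -> float:
    """Distance from `point` to the plane defined by p1, p2 and p3."""
    normal = _cross(_sub(p2, p1), _sub(p3, p1))
    norm = math.sqrt(sum(c * c for c in normal))
    if norm == 0.0:
        raise ValueError("the three reference points are collinear")
    offset = _sub(point, p1)
    return abs(sum(o * n for o, n in zip(offset, normal))) / norm


if __name__ == "__main__":
    # Hypothetical rail-top points (m): two sections 3 m apart, rails 1.5 m apart.
    left_1, right_1 = (0.0, 0.0, 0.0), (0.0, 1.5, 0.0)
    left_2, right_2 = (3.0, 0.0, 0.0), (3.0, 1.5, 0.004)
    print("cross level (m):", cross_level(left_2[2], right_2[2]))
    print("twist (m):", track_twist(right_2, left_1, right_1, left_2))
```

In the sample data one rail point is raised by 4 mm above the plane of the other three, so the twist equals that offset.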

2.1.4 Railway stiffness

Railway stiffness refers to the resistance of the whole track against deformations in the vertical direction [26]. Burrow [27] highlighted that the magnitude of the railway stiffness is mainly influenced by the substructure. Considering only the layers that compose the substructure, ballast is the most relevant material. It should be noted that, comparatively, the platform influences more, but its stiffness is almost imposed and can not be adjusted.

From Burrow's developments [27], it can be concluded that there is an optimal railway stiffness (at least from the theoretical point of view). When railway stiffness is excessively low, too much rail deformation may occur, whereas, if it is too high, the infrastructure is damaged rapidly. López Pita et al. [28] proposed a range of optimal values for railway stiffness. Their work was based on optimizing maintenance costs and energy dissipation costs (due to excessive deformations). They used data from the high-speed tracks Paris-Lyon and Madrid-Sevilla. The study concluded that the overall railway stiffness must be between 70 and 80 kN/mm.

The EUROBALT II European project also dealt with the deterioration of railway tracks. Its main objective was to identify the parameters that should be controlled to reduce damage in railway tracks. The study found that the parameters that most influence track behaviour are the stiffness, the displacement of the rails and the settlement of the different layers.

2.2 Ballasted tracks components

Railway ballast is the material under consideration in this thesis, so it is important to study its properties in depth. In the first part of this section, ballast specifications are described. In the second part, the main features of the sleepers used in Spain are defined. Sleeper characteristics are significant in this research because sleepers interact directly with ballast. Finally, some aspects of the fasteners are briefly discussed.

2.2.1 Ballast specifications

Ballast, as a structural element, is formed by a set of particles of different sizes and shapes. The ballast is, thus, a layer of granular material which is placed under the sleepers and therefore fulfils important roles: resisting vertical and horizontal loads (produced by the train passing over the rail) and withstanding climate action.

|

| Figure 8: Ballast stones. |

The Standard UNE-EN 13450:2015 [29] specifies the technical characteristics of the ballast used as a supporting layer in railway tracks. The Standard defines the quality controls to which ballast should be subjected. Those quality controls concern five material properties: granulometry, Los Angeles coefficient (CLA) (1), micro-Deval coefficient (MDS) (2), flakiness index and particle length.

The particle size distribution established by the Standard UNE-EN 13450:2015 is presented in Table 2. Ballast particle size should be between and . In addition, the presence of angular stones is discouraged, because that kind of particle slips easily and can obstruct the tamping operation.

| Sieve size (mm) | Cumulative % passing through each sieve |
|---|---|
| 63 | 100 |
| 50 | 70-100 |
| 40 | 30-65 |
| 31.5 | 0-25 |
| 22.4 | 0-3 |
| 32-50 | 50 |
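As an illustration of how a measured grading curve could be checked against the limits listed above, the following minimal Python sketch compares cumulative percentages passing with the tabulated ranges. The function name `check_grading`, the dictionary layout and the sample values are hypothetical and only serve the example; the last table row (32-50) is left out because it does not correspond to a single sieve size.

```python
# Limits on the cumulative % passing per sieve size (mm), copied from the table above.
GRADING_LIMITS = {
    63.0: (100.0, 100.0),
    50.0: (70.0, 100.0),
    40.0: (30.0, 65.0),
    31.5: (0.0, 25.0),
    22.4: (0.0, 3.0),
}


def check_grading(passing):
    """Return the sieve sizes whose cumulative % passing lies outside the limits.

    `passing` maps each sieve size (mm) to the measured cumulative % passing.
    """
    failures = []
    for sieve, (low, high) in GRADING_LIMITS.items():
        value = passing[sieve]
        if not (low <= value <= high):
            failures.append(sieve)
    return failures


# Hypothetical sample, for illustration only (not laboratory data).
sample = {63.0: 100.0, 50.0: 85.0, 40.0: 45.0, 31.5: 10.0, 22.4: 1.0}
print(check_grading(sample))  # an empty list means every sieve is within limits
```

A non-empty result lists the sieves at which the sample would fall outside the grading envelope.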

Tamping is performed by a tamping machine, which moves each sleeper and rail into its correct vertical and horizontal position. Then, it inserts its tines into the ballast layer. When the tines reach the required depth they start to vibrate, in order to arrange the ballast stones under the sleeper as compactly as possible. This operation is needed to correct track misalignments by compacting the ballast under the sleepers, which provides a solid foundation. If the track is not rigid enough when a vehicle travels over it, two different phenomena can appear simultaneously: vertical deflection and lifting wave [24]. Vertical deflection affects a rail length of to approximately. Its maximum value at the point of application of the load ranges from to , considering a load of per wheel. The lifting wave, in turn, refers to the lift of the front part of the railway track in the direction of movement; its magnitude is usually about the of the vertical deflection.

Regarding train passage, each train axle produces a sleeper stroke on the ballast layer (on a freight train 150 strokes can be reached) which, together with the increasing weight of the sleepers (about ), may lead to a rapid deterioration of the ballast stones. To minimise this deterioration and to withstand the tamping operation, a sufficiently hard rock that is difficult to break is needed. According to the Railway Standard UNE 22950-1:1990 [31], this requirement is met if the original rock has a compression resistance of . The Standard UNE-EN 13450:2015 establishes a maximum value for the Los Angeles coefficient, which should be less than . Abrasion requirements are met by requiring a Deval coefficient above 15.

Particle shape also affects ballast behaviour, which is why the flakiness index and the particle length are evaluated. The flakiness index test is performed to determine the amount of slabs in the granular material used in the construction of railway tracks. Elongated particles can break, which modifies the particle size and decreases the load capacity. In this context, slabs are the fraction of granular material whose minimum dimension (thickness) is less than 3/5 of the average size of the considered material fraction. The test consists of two sifting operations: the first separates particles into groups depending on their size, and the second uses a sieve of parallel bars. The bars of the second sieve are separated times the size of the first sieve. The flakiness index is expressed as the weight percentage of ballast passing through the bar sieve. According to the regulations, this index must be less than or equal to .

The particle length index is defined as the mass percentage of ballast particles greater than or equal to 100 mm in length, in a ballast sample weighing more than 40 kg. The test is performed by measuring each of the particles with a calliper. According to the legislation, the particle length index must be lower than 4%.
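Both shape indices are simple mass percentages. The short Python sketch below shows the arithmetic under the definitions given above; the function names and the sample masses are hypothetical, and only the 4% limit and the 40 kg minimum sample weight are taken from the text.

```python
def flakiness_index(mass_passing_bar_sieve, total_mass):
    """Weight percentage of the tested ballast that passes the bar sieve."""
    return 100.0 * mass_passing_bar_sieve / total_mass


def particle_length_index(mass_long_particles, sample_mass):
    """Mass percentage of particles whose length is >= 100 mm (masses in kg)."""
    if sample_mass <= 40.0:
        raise ValueError("the sample should weigh more than 40 kg")
    return 100.0 * mass_long_particles / sample_mass


# Hypothetical masses in kg, for illustration only.
length_index = particle_length_index(1.2, 48.0)
print(length_index, length_index < 4.0)  # index value and whether the 4% limit is met
```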

| Actions | Functions | Property | Property evaluation |
|---|---|---|---|
| Vertical | Elasticity and damping | Elastic modulus | Load plate test |
| | | Ballast thickness | Minimum thickness |
| | Abrasion resistance | Resistance to abrasion | Wet MicroDeval |
| | Alleviate pressure on the railway platform | Ballast thickness | Minimum thickness |
| | Withstand rail shocks | Impact resistance | Los Angeles coefficient |
| Horizontal | Longitudinal resistance | Granulometry | |
| | Transversal resistance | | |
| Climate | Assist the drainage | Granulometry | |
| | Ice resistance | Frost resistance | |

Table 3 summarises other features required for materials used as ballast. To fulfil all those functions, the layer thickness should be between 25 and 35 cm [24]. The lower limit is set by the need to meet the load resistance objectives (with less ballast the specifications could not be met), while the upper value is set by the need to limit settlements and geometrical defects on the railway track.

From all of the above, it can be concluded that, in addition to dealing with climate actions, the main functions of the ballast layer are:

- Help to provide stability and damping capacity to the railway track, which reduces the dynamic loads exerted by passing trains.

- Distribute pressures on the platform to avoid reaching the bearing capacity of the ground.

- Withstand the particle abrasion that can result from successive contact with rigid infrastructures such as, for example, concrete bridges.

(1) Wear coefficient of Los Angeles (CLA). It measures wear resistance by attrition and impact of aggregates. It is the ratio of the difference in weight of the initial sample and the material retained by the sieve 1.6 UNE (once subjected to an abrasive and standardised process using iron balls), divided by the initial weight of the sample.

(2) Deval coefficient: determined by the value obtained in the Deval test, consisting of introducing 44 stones of 7 weighing 5 , in a cylinder that rotates around an inclined axis. The cylinder rotates for 5 hours until 10000 turns. Then the set of dispersed materials are weighed getting "P" in grams. The Deval coefficient is given by the ratio 400/P [30].
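In compact form, the two coefficients described in notes (1) and (2) can be written as below. The symbols are assumed notation introduced only for this summary: $m_0$ is the initial weight of the sample, $m_r$ the weight of material retained by the 1.6 UNE sieve after the test, and $P$ the weight in grams defined in note (2).

```latex
\begin{align*}
  C_{LA}    &= \frac{m_0 - m_r}{m_0}, \\
  C_{Deval} &= \frac{400}{P}.
\end{align*}
```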

2.2.2 Sleepers

Sleepers are the elongated pieces, made of various materials, located between the rails and the ballast layer. Their main functions are [32]:

- Keep the rails in position, supporting them and maintaining their separation and inclination.

- Distribute vertical, lateral and longitudinal loads from the rails to the ballast layer.

- Contribute (along with fasteners) to maintain electrical isolation between the two rails, in railway tracks with electrical signals.

- Preserve the horizontal stability of the track (in lateral and longitudinal direction) against stresses due to temperature variations or dynamic loads due to trains passage. Sleepers prevent buckling and ripping (lateral displacement) of rails.

- Preserve the stability of the track in the vertical plane, against static and dynamic loads produced by trains.

The variables that most significantly influence sleeper behaviour are [32]: their dimensions, weight and elasticity.

Sleeper dimensions are important because they affect the supporting area available, which can reduce the stresses transmitted to the ballast layer. A high weight helps to increase the longitudinal and lateral stability of the track; however, heavier sleepers are more difficult to install in the construction phase. Sleeper elasticity provides stability by absorbing mechanical forces and preventing deterioration, which minimizes maintenance costs.

Sleepers can be classified according to the material they are made of or according to their shape. They can be made of wood, steel, cast iron, reinforced concrete, pre-stressed concrete or synthetic materials. According to their shape, sleepers can be monoblock, semi-sleepers or twin block, as shown in Figure 9.

|

|

|

| (a) Monoblock. | (b) Semi-sleeper. | (c) Bi-block. |

| Figure 9: Different types of sleepers depending on their shape. Source: Álvarez and Luque [32]. | ||

Although the list of different sleepers may seem large, in Spain, basically three types are used:

- Wood sleepers (Figure 10a).

- Two different concrete sleepers: monoblock (Figure 10b) and twin block (Figure 10c).

|

|

|

| (a) Wood sleepers. | (b) Monoblock concrete sleepers. | (c) Twin block concrete sleepers. |

| Figure 10: Different kinds of sleepers, by material and shape. | ||

Wood sleepers, defined in the Standard NRV 3-1-0.0 [33], may be made from different kinds of trees such as oak, beech or pine. These sleepers must comply with the established regulations, which date from 1966 [34].

In the first railway tracks, constructed in the nineteenth century, the only material used to manufacture sleepers was wood, since its physical and mechanical properties, added to its abundance, made this material the best choice. However, with time, the possibility of using concrete to build sleepers made wood sleepers almost disappear. Nowadays, wood sleepers are used very rarely and never in high-speed tracks. Wood sleepers can be used, for example, in tracks where the stiffness is very high, such as on metal bridges.

Prestressed concrete displaced wood as a material for sleepers manufacturing. These are the most well-known advantages and drawbacks of concrete sleepers over wood sleepers [35]:

- Advantages:

  - Concrete sleepers have a longer life, about two or three times that of wood sleepers.

  - Their physical conditions are preserved all over the railway track.

  - Better track resistance against displacement in its horizontal plane.

  - Their greater weight provides higher lateral and longitudinal resistance against different forces.

  - Their design can be easily changed to improve track properties.

  - Concrete sleepers cost less than treated wood sleepers.

- Disadvantages:

  - Concrete sleepers need special insulation to electrically isolate the two rails.

  - Their weight, about for monoblock sleepers, compared to the weight of wood sleepers, about , makes them difficult to handle.

  - They are more brittle.

  - They present a structural weakness at their centre (in the case of monoblock sleepers) because their uniform support on the ballast produces stresses on their upper face, which can originate cracks in the concrete.

The Standards N.R.V. 3-1-2.1 [36] and N.R.V. 3-1-3.1 [37] describe, respectively, the main features of monoblock and twin block sleepers. In Spain, monoblock sleepers are widely used because of their resistance (monoblock sleepers can be prestressed) and their larger bearing surface, which allows a better distribution of loads. The advantages of twin block sleepers are that they are lighter and that they behave well against lateral movement (in France they are still used frequently). The main problems of twin block sleepers are [38]:

- Their high cost, due to the steel used in their central zone.

- They do not maintain the track gauge well, mainly due to their low vertical and horizontal stiffness.

- Their central strut may corrode easily.

- Their behaviour against derailment is poor.

Regarding the materials used, technical specifications require a high quality, high strength cement and siliceous aggregates of uniform size. The compressive strength of the concrete must be greater than , and the tensile strength of the steel should be about .

There are many types of concrete sleepers depending on their dimensions, which can be modified if necessary. If a new sleeper design is to be used, it should be fully defined in a draft drawing signed by the applicant. The draft drawing must include the basic dimensions determined by the department responsible for the railway infrastructure administration. Sleeper length should be about and the base width in the outer part is equal to , excluding very few exceptions.

For monoblock sleepers, which are the object of study in this work as they are the most used in Spain, technical specification ET 03.360.571.8 [39] establishes the parameters that should be defined in the draft. Those parameters are listed in Table 4 and shown graphically in Figure 11.

| Dimension | Description |
|---|---|
| | Concrete element total length |
| , | Concrete element lower and upper part thickness |
| | Height in each position along the entire concrete element |
| | Distance from the outer reference point to the fasteners |
| | Distance between the outer reference point and the end of the concrete element |
| | Tilting of the rail support plane |
| | Flatness of each supporting area, measured between two points 150 mm apart |
| | Relative torsion between the supporting planes of both rails |

![Sleeper geometry parameters [39].](/wd/images/thumb/4/42/Draft_Samper_552093892-test-Ch2Fig9.png/600px-Draft_Samper_552093892-test-Ch2Fig9.png)

|

| Figure 11: Sleeper geometry parameters [39]. |

2.2.2.1 Friction between ballast and sleepers

The direct interaction between ballast and sleepers makes ballast-sleeper friction a key parameter that greatly affects the behaviour of the whole track. Specifically, it is fundamental in the evaluation of the resistance of the track, mainly against loads in the lateral and longitudinal directions. However, it is a parameter that is very difficult to measure.

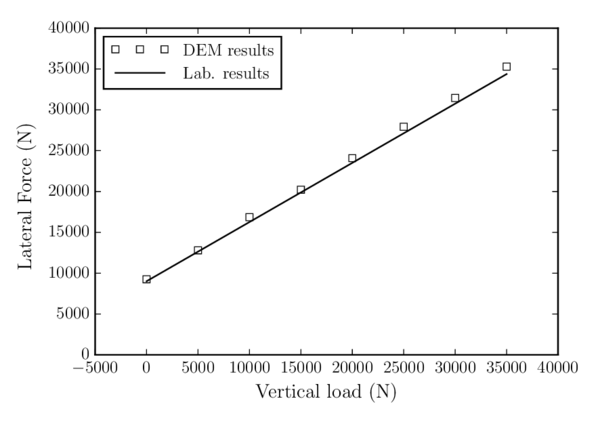

Zand and Moraal [40] compared the variation of the lateral force (the force needed to move the sleeper laterally) with the variation of the vertical load (the weight on the sleeper), obtaining a friction coefficient of 0.7247. They also compared their results with previous studies, which concluded that the ballast-sleeper friction coefficient typically lies between 0.665 and 0.872.
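One way to turn such paired measurements into a friction coefficient is to take the slope of a straight line fitted to lateral force against vertical load. The Python sketch below does this with an ordinary least-squares fit. It is only an assumption about the procedure, since the fitting method of [40] is not described here, and the numbers are synthetic values generated with a slope of 0.7, not measurements.

```python
def friction_coefficient(vertical_loads, lateral_forces):
    """Least-squares slope of lateral force versus vertical load."""
    n = len(vertical_loads)
    mean_v = sum(vertical_loads) / n
    mean_f = sum(lateral_forces) / n
    s_vf = sum((v - mean_v) * (f - mean_f)
               for v, f in zip(vertical_loads, lateral_forces))
    s_vv = sum((v - mean_v) ** 2 for v in vertical_loads)
    return s_vf / s_vv


# Synthetic values in kN (lateral = 2 + 0.7 * vertical), for illustration only.
vertical = [10.0, 20.0, 30.0, 40.0]
lateral = [9.0, 16.0, 23.0, 30.0]
mu = friction_coefficient(vertical, lateral)
print(round(mu, 3))
```

Using the slope rather than the plain ratio of the two forces keeps any load-independent resistance (the intercept of the line) out of the friction estimate.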

2.2.3 Fasteners

The interaction between rails and sleepers takes place through the fasteners. There are many different types of fasteners, since each administration uses the most convenient ones. Regardless of the model, they attach each rail to the sleepers at two points. The fasteners are elastic and their main function is fixing the foot of the rail to the sleeper, which has to be prepared for the inclusion of these devices.

2.3 Railway ballast laboratory tests

Well-defined numerical and laboratory tests are available in the literature. These laboratory tests are suitable for validating the numerical code. In this section, some of the most used tests are briefly described.

2.3.1 Ballast lateral resistance

One of the problems that may appear in railway infrastructures is lateral buckling, which is one of the most critical problems in railway tracks [41]. It can greatly affect traffic and may cause catastrophic derailments [42]. Lateral buckling can be caused by mechanical or thermal loads, and is relatively common in countries with large temperature differences between winter and summer. For this reason, the lateral resistance of the track is one of the most important parameters regarding track stability, and the design of the ballast layer is a key aspect.

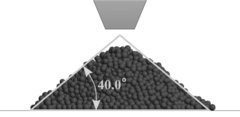

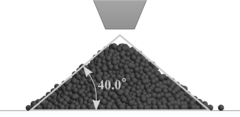

The lateral resistance of the ballast layer has been studied by several researchers. Zand and Moraal [40] carried out tests that consist of laterally pulling a track panel with five sleepers embedded in a ballast bed and measuring the force necessary to move it. Moreover, Kabo [41] developed a numerical study, using the FEM, evaluating the influence of the ballast layer geometry on its lateral resistance. Figure 12 shows one of the analysed geometries.

![Perspective view of FE model of a sleeper embedded inside a ballast bed. Source: Kabo [41].](/wd/images/thumb/6/6b/Draft_Samper_552093892-test-Ch2Fig10.png/600px-Draft_Samper_552093892-test-Ch2Fig10.png)

|

| Figure 12: Perspective view of FE model of a sleeper embedded inside a ballast bed. Source: Kabo [41]. |

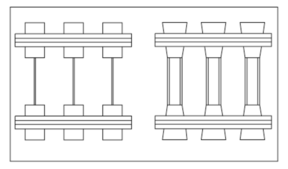

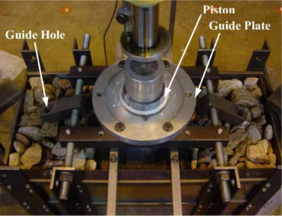

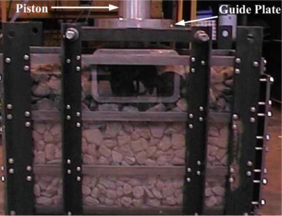

2.3.2 Box compression test

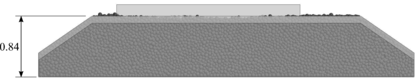

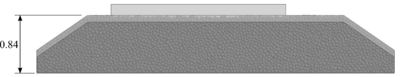

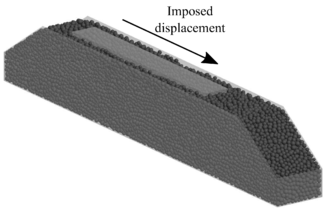

Repeated train loading causes ballast deterioration, which triggers undesired settlements in the railway track and fouling of the ballast layer because of breakage. One of the available tests to evaluate the consequences of cyclic loading in the ballast layer is the box compression test (Figure 13).

|

|

| (a) Test set-up, top view. | (b) Test set-up, front view. |

| Figure 13: Box compression test set-up. Source: Lim [3]. | |

The box compression test consists of applying a cyclic load on a ballast bed inside a rigid box. The ballast deformation is recorded against the number of load cycles. Indraratna et al. [43] reproduced this test using a two-dimensional breakable DE model for ballast.

2.3.3 Large-scale direct shear test

Figure 14 shows the layout of the typical large-scale direct shear test. This laboratory test consists of placing ballast inside a box divided into two halves, pulling the lower box and computing the stress-strain curve of the ballast sample. The ballast can be confined in order to reproduce railway track ballast conditions more accurately.

![Box shear test sample. Source: Huang and Tutumluer [45].](/wd/images/thumb/1/16/Draft_Samper_552093892-test-Ch2Fig12.png/300px-Draft_Samper_552093892-test-Ch2Fig12.png)

|

| Figure 14: Box shear test sample. Source: Huang and Tutumluer [45]. |

This test was already reproduced using the DEM by Indraratna et al. [44] and Huang and Tutumluer [45], among other authors. Numerically, the major interest of this test is that it is performed on a relatively small domain. This implies that the computational cost is moderate, so it can be very useful to perform multiple tests in the calibration phase.

2.3.4 Triaxial tests

The most frequently used test to characterize railway ballast is the triaxial test. The reason is that, in the triaxial test, the ballast sample is confined at a certain pressure, simulating its working conditions under the track. Several research works have reproduced ballast triaxial tests using the DEM [46,47,1,48].

One of the difficulties in the simulation of triaxial tests using the DEM is the introduction of the confinement pressure. In laboratory tests, this pressure is applied on a membrane that confines the sample, as can be seen in Figure 15. Some authors use simplifications to introduce pressure on the sample, but there are methods for coupling discrete elements (for the ballast particles) and finite elements (for the membrane) using explicit schemes [49]. Considering the DEs and FEs fully coupled would broaden the practical applications of the model.

![Triaxial test device with ballast inside. Source: Harkness et al. [48].](/wd/images/thumb/8/89/Draft_Samper_552093892-test-Ch2Fig13.png/150px-Draft_Samper_552093892-test-Ch2Fig13.png)

|

| Figure 15: Triaxial test device with ballast inside. Source: Harkness et al. [48]. |

3 Introduction to the Discrete Element Method with spherical particles

Since Cundall and Strack [16] presented the first ideas of the DEM in 1979, this numerical technique has grown in popularity and is nowadays known as a powerful and efficient tool to reproduce the behaviour of granular materials.

For the analysis of granular materials with the DEM, each grain is represented as a rigid particle. In the first DEM approaches, those particles used to be spheres (3D) and circles (2D), but numerous advances have since been made in order to represent different geometries. Regardless of the geometry of the particles, they interact among themselves in the normal and tangential directions, assuming that material deformation is concentrated at the points of contact. Material properties are defined by appropriate contact laws that can be seen as the formulation of the material model at the microscopic level.

In this chapter, the main features of the classical DEM with spheres are presented. The solving strategy is based on three main steps: contact detection, evaluation of forces and integration of motion.

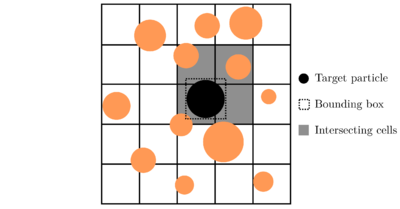

3.1 Contact detection algorithm

Due to the method formulation, the definition of appropriate contact laws is fundamental, and fast contact detection is of significant importance in DEM calculations. The contact status between two DE particles can be calculated from their relative position in the previous time step, and it is used for updating the contact forces at the current step. The relative computational cost of contact detection over the total computational cost is high in most DEM simulations, so the problem of how to recognize all contacts precisely and efficiently has received considerable attention in the literature [50,51].

Contact detection for non-spherical particles may involve the resolution of a non-linear system of equations (see the case of superquadrics [49,52] or polyhedra [53,54,55]). For that reason, the use of spheres is the most efficient choice.

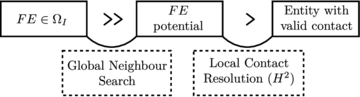

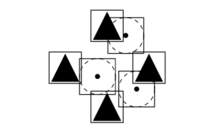

Traditionally, the contact detection is split into two stages:

- Global Neighbour Search (GNS) consists in determining potential contact objects for each given particle (target). In this stage the computational cost can be reduced from in an all-to-all check to a . Han et al. [56] compared the most common Global Neighbour Search algorithms, cell based and tree based, in simulations with spherical particles.

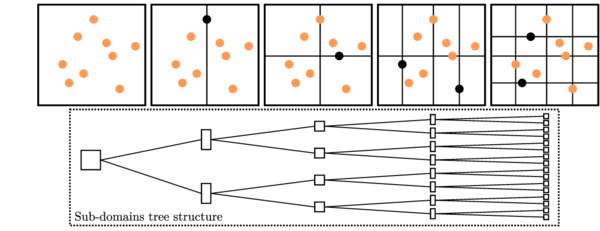

Cell based algorithms [57,58] divide the entire calculation domain into rectangles (2D) or cuboids (3D). The target particle is surrounded by a simple bounding box, which is used to check rapidly which cells intersect with it. The list of intersecting cells is stored for each particle (see Figure 16). The potential neighbours for every target particle are all the elements stored in the different cells to which the bounding box belongs.

|

| Figure 16: Cell based algorithm scheme in 2D. |

Tree based algorithms [59,60] start by splitting the entire domain into two sub-domains, taking into account the position of a centred particle. Then, two particles, one in each of those sub-domains, are used to divide them into smaller regions, alternating the splitting dimension (X, Y and Z in 3D). The process continues until the required number of sub-domains is obtained (see Figure 17). The potential neighbours are determined by following the tree in the upward direction.

|

| Figure 17: Two-dimensional sub-domain decomposition of tree based algorithm. |

Numerical tests showed better performance for the cell based algorithms (D-Cell [57] and NBS [58]) over the tree based ones (ADT [59] and SDT [60]), especially for large-scale problems. It should also be noted that the efficiency depends on the cell dimension and that, in general, the size distribution can affect the performance. Han et al. [56] suggest a cell size of three times the average discrete object size for 2D problems and five times for 3D problems. It is worth noting that (using these or other efficient algorithms) the cost of the GNS typically represents less than 5 percent of the total computation, while the total cost of the search can reach values over 75 percent [61]. In this sense, the focus should be placed on the next stage rather than on optimizing the GNS algorithms.

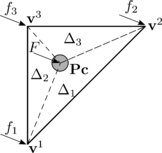

- Local Contact Resolution (LCR) consists in defining the actual contacting particles from the potential neighbours. If the particles are in contact, the normal and tangential force directions, the position of the point of contact and the characteristics of the overlap may also need to be determined at this stage.

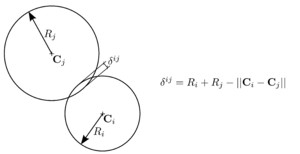

Figure 18 shows the LCR stage for spherical particles. It can be seen that the only data needed to completely define the contact are the centres of the spheres and their radii. In the example of Figure 18, contact exists between the target particle $i$ and particle $j$ because the distance between their centres is less than the sum of their radii

$$\|\mathbf{C}_i - \mathbf{C}_j\| < R_i + R_j \qquad (3.1)$$

where $\mathbf{C}_i$, $\mathbf{C}_j$ are the centres and $R_i$, $R_j$ the radii of the particles $i$ and $j$, respectively.

|

| Figure 18: Local Contact Resolution (LCR). |

On the other hand, Figure 18 shows that potential contact between particles , and , , which were found in the GNS stage, are discarded in the LCR stage.

The computation of contact characteristics is explained in section 3.2.2. For non-spherical particles, LCR may be more complex. This topic will be further discussed in chapters 4 and 5.

It is worth mentioning that, depending on the nature of the calculation, it may be advantageous not to perform contact detection at every time step, due to its computational cost. In most numerical calculations, contacts last several time steps because the particle positions vary very little, especially in the computation of dense collections of particles, such as railway ballast. In this regard, computational efficiency can be improved by performing contact detection only once every several time steps, and updating only the characteristics of the previous contacts (point of contact, normal and tangential force direction and penetration) in between. However, numerical stability should be taken into account, because if contacts are detected late, indentations (and forces) reach high values, leading to instabilities and inaccurate results.

In this work, the GNS stage is carried out using a basic cell-based algorithm parallelised with OpenMP. The LCR, in turn, is always performed between spheres, which leads to an efficient contact characterisation, because the particles used are spheres and clusters of spheres (rigid particles formed by groups of overlapping spheres rigidly joined together, see Section 5.3). Issues related to contact between DEs and planar boundaries are explained in detail in chapter 4.
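The two stages described in this section can be illustrated with a short serial Python sketch: a cell-based GNS that bins the bounding box of every sphere into a uniform grid, followed by the sphere-sphere LCR check on the resulting candidate pairs. This is not the implementation used in this work (which is parallelised); the function names, the data layout and the cell size are assumptions made for the example.

```python
import math
from collections import defaultdict
from itertools import product


def global_neighbour_search(centres, radii, cell_size):
    """Cell-based GNS: return candidate pairs (i, j), i < j, that share a cell."""
    cells = defaultdict(list)
    for idx, (centre, radius) in enumerate(zip(centres, radii)):
        # Index range of the cells overlapped by the bounding box of the sphere.
        low = [math.floor((x - radius) / cell_size) for x in centre]
        high = [math.floor((x + radius) / cell_size) for x in centre]
        for key in product(*(range(a, b + 1) for a, b in zip(low, high))):
            cells[key].append(idx)
    candidates = set()
    for members in cells.values():
        # Indices were appended in increasing order, so pairs come out as i < j.
        for a in range(len(members)):
            for b in range(a + 1, len(members)):
                candidates.add((members[a], members[b]))
    return candidates


def local_contact_resolution(centres, radii, candidates):
    """LCR: keep the pairs whose centre distance is below the sum of their radii."""
    contacts = []
    for i, j in sorted(candidates):
        if math.dist(centres[i], centres[j]) < radii[i] + radii[j]:
            contacts.append((i, j))
    return contacts


# Hypothetical configuration: four unit spheres, cell size of 2 length units.
centres = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.8, 1.8, 0.0), (5.0, 5.0, 5.0)]
radii = [1.0, 1.0, 1.0, 1.0]
candidates = global_neighbour_search(centres, radii, cell_size=2.0)
contacts = local_contact_resolution(centres, radii, candidates)
print(sorted(candidates))  # [(0, 1), (0, 2), (1, 2)]
print(contacts)            # [(0, 1), (1, 2)]
```

In this configuration the pair (0, 2) is proposed by the GNS because both bounding boxes touch the same cell, but it is discarded in the LCR stage since the centres are further apart than the sum of the radii.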

3.2 Force evaluation

The behaviour of granular materials is governed by grain-grain contact interactions. This is the basis of the DEM approach, where the material is characterised by means of defining the interactions between its constituent particles.

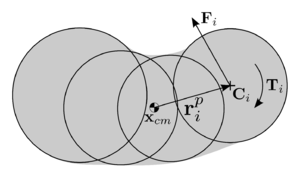

3.2.1 Equations of motion

In the basic DEM formulation, standard rigid body dynamic equations define the translational and rotational motion of particles. For the special case of spherical particles these equations, for the -th particle, can be written as

|

|

where , and are respectively the particle centroid displacement and its first and second derivative in a fixed coordinate system , is the angular acceleration, is the particle mass, is the second order inertia tensor with respect to the particle centre of mass, is the resultant force, and is the resultant moment about the central axes.

and are computed as the sum of:

- the forces and moments applied to the -th particle due to external loads and moments, and , respectively,

- the contact interaction forces from other particles , where is the index of the neighbouring particle ranging from to the number of elements in contact with the particle under consideration .

From the above-mentioned, and can be expressed as

|

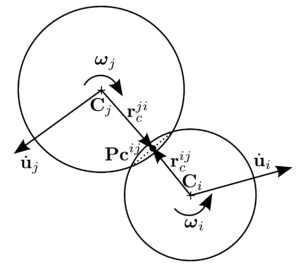

where is the vector connecting the centre of mass of the -th particle and the point of contact with the -th particle (Fig. 19). The contact between the two interacting spheres can be represented by the contact forces and , which satisfy .

|

| Figure 19: Contact between two spherical particles. |
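The relations described in this subsection can be summarised as follows. The notation is assumed for this summary: $\mathbf{u}_i$ is the centroid displacement of particle $i$, $\boldsymbol{\omega}_i$ its angular velocity, $m_i$ and $\mathbf{I}_i$ its mass and inertia tensor, $\mathbf{F}_i$ and $\mathbf{T}_i$ the resultant force and moment, $\mathbf{F}_i^{ext}$ and $\mathbf{T}_i^{ext}$ the external loads and moments, $\mathbf{F}^{ij}$ the contact force from particle $j$, $\mathbf{r}_c^{ij}$ the vector from the centre of particle $i$ to the point of contact with particle $j$, and $n_i^c$ the number of particles in contact with particle $i$. For spheres the inertia tensor is isotropic, so no gyroscopic term appears in the rotational equation.

```latex
\begin{align*}
  m_i \, \ddot{\mathbf{u}}_i &= \mathbf{F}_i, \\
  \mathbf{I}_i \, \dot{\boldsymbol{\omega}}_i &= \mathbf{T}_i, \\
  \mathbf{F}_i &= \mathbf{F}_i^{ext} + \sum_{j=1}^{n_i^c} \mathbf{F}^{ij}, \\
  \mathbf{T}_i &= \mathbf{T}_i^{ext} + \sum_{j=1}^{n_i^c} \mathbf{r}_c^{ij} \times \mathbf{F}^{ij}, \\
  \mathbf{F}^{ij} &= -\mathbf{F}^{ji}.
\end{align*}
```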

3.2.2 Contact characteristics

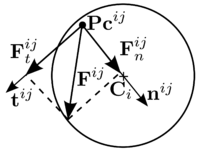

The contact force can be decomposed into the normal and tangential components at the point of contact . The local reference frame in the point of contact is defined by normal and tangential unit vectors, as it is shown in Figure 20.

|

| Figure 20: Decomposition of the contact force into normal and tangential components. |

The unit normal vector is defined along the line connecting the centres of the two particles. Its direction is towards the centre of particle .

|

|

(3.8) |

The indentation or inter-penetration is calculated from the distance between the centres of the particles and their radius (Figure 21).

|

| Figure 21: Indentation or inter-penetration . |

Vectors from the centre of particles to the point of contact and depend on the contact model. Therefore, the position of the point of contact is influenced by the contact model used.

The relative velocity between both contacting particles at the point of contact is determined by

|

|

(3.9) |

which can be decomposed in the local reference frame as:

|

The tangential unit vector can be obtained from the tangential component of the relative velocity between the particles and .

|

|

(3.12) |

Now the contact force between the two interacting spheres and can be decomposed into its normal and tangential components, as it can be seen in Figure 20 and eq. 3.13.

|

|

(3.13) |

The forces and are obtained from the contact constitutive model.
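The contact characteristics introduced in this subsection (unit normal, indentation, relative velocity at the contact point and its normal and tangential parts) can be computed as in the following Python sketch. Several choices are assumptions made for the example and may differ from the conventions of this work: the normal points from particle i to particle j, the contact point is placed in the middle of the overlap on the line between centres, and the relative velocity is that of particle j with respect to particle i. All function names are hypothetical.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, s):
    return tuple(s * x for x in a)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def contact_kinematics(ci, cj, ri, rj, vi, vj, wi, wj):
    """Return (normal, indentation, v_normal, v_tangential, tangent)."""
    d = sub(cj, ci)
    dist = math.sqrt(dot(d, d))
    n = tuple(x / dist for x in d)        # unit normal, assumed to point from i to j
    delta = ri + rj - dist                # indentation, positive when overlapping
    # Assumed contact point: middle of the overlap, on the line between centres.
    r_ci = scale(n, ri - 0.5 * delta)
    r_cj = scale(n, -(rj - 0.5 * delta))
    # Velocity of j relative to i at the contact point (translation + rotation).
    v_rel = sub(add(vj, cross(wj, r_cj)), add(vi, cross(wi, r_ci)))
    v_n = scale(n, dot(v_rel, n))
    v_t = sub(v_rel, v_n)
    vt_norm = math.sqrt(dot(v_t, v_t))
    # The tangent is undefined when there is no tangential relative velocity.
    t = scale(v_t, 1.0 / vt_norm) if vt_norm > 0.0 else (0.0, 0.0, 0.0)
    return n, delta, v_n, v_t, t


# Hypothetical state: two unit spheres, the second approaching and sliding.
zero = (0.0, 0.0, 0.0)
n, delta, v_n, v_t, t = contact_kinematics(
    zero, (1.8, 0.0, 0.0), 1.0, 1.0, zero, (-1.0, 0.5, 0.0), zero, zero)
print(n, delta, v_n, v_t, t)
```

With the normal and tangential unit vectors available, the contact force is assembled from the two scalar components returned by the constitutive model.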

3.2.3 Contact constitutive models

Contact between granular material particles is, in general, a complex and highly non-linear problem, which, in the DEM framework, is simplified. For spherical particles, the constitutive contact models used depend on a few parameters such as the particles relative velocity, inter-penetration, radius and material properties. To take into account energy losses, some damping parameters need to be defined.

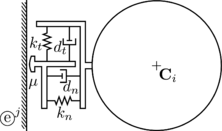

The main parameters used in standard constitutive models in the DEM are shown in Figure 22.

![Standard DEM contact interface [Onate2013].](/wd/images/thumb/5/55/Draft_Samper_552093892-test-Ch3Fig7.png/300px-Draft_Samper_552093892-test-Ch3Fig7.png)

|

| Figure 22: Standard DEM contact interface [Onate2013]. |

Those parameters are:

- : normal contact stiffness.

- : tangential contact stiffness.

- : normal local damping coefficient at the contact interface.

- : tangential local damping coefficient at the contact interface.

- : friction coefficient.

The first contact model, presented by Cundall and Strack [16], was the linear spring-dashpot model. It uses, for both the normal and the tangential directions, a linear elastic stiffness device and a damper to introduce viscous energy dissipation. Among the commonly used contact constitutive models, this is the simplest one.

Other contact models treat the relationship between force and displacement as non-linear. The most popular non-linear contact models come from the Hertz-Mindlin and Deresiewicz theory. Hertz [62] proposed the relationship between normal force and normal displacement. Deresiewicz and Mindlin [63] proposed a general tangential force model in which the force-displacement relationship depends on the whole loading history as well as on the instantaneous rate of change of the normal and tangential force or displacement.

Other contact models can be found in the literature, but the model used in this work is the Hertz-Mindlin model simplified by Thornton et al. [64], due to its balance between simplicity and accuracy [15].

3.2.3.1 Normal contact force calculation within the Hertz theory

Within the Hertzian theory, the normal contact force $F_n$ is decomposed into the elastic part $F_{ne}$ and the damping contact force $F_{nd}$:

$$F_n = F_{ne} + F_{nd} \tag{3.14}$$

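The elastic part of the normal compressive contact force, $F_{ne}$, is calculated from the normal contact stiffness $K_n$ and the indentation $\delta_n$ as:

$$F_{ne} = \frac{2}{3} K_n\,\delta_n \tag{3.15}$$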

while the contact damping force $F_{nd}$ is assumed to be of viscous type, proportional to the rate of indentation $\dot{\delta}_n$, and given by:

$$F_{nd} = c_n\,\dot{\delta}_n \tag{3.16}$$

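The damping coefficient $c_n$ is taken as a fraction $\xi$ of the critical damping $c_{crit}$, which depends on the equivalent mass of the particles $m_{eq}$ and on the contact stiffness $K_n$:

$$c_n = \xi\,c_{crit} = 2\,\xi\sqrt{m_{eq} K_n} \tag{3.17}$$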

The fraction $\xi$ is related to the coefficient of restitution $e$, a material property that defines the kinetic energy that remains after a collision between two objects. It can also be defined as the ratio of the speeds after and before an impact:

$$e = \frac{|v_{after}|}{|v_{before}|} \tag{3.18}$$

Taking this into account, $e$ can be expressed as a function of $\xi$ through the following expression [64]:

|

|

(3.19) |

However, as the coefficient of restitution $e$ is a material parameter and $\xi$ is the parameter used in the DEM contact force evaluation, it is more convenient to express $\xi$ as a function of $e$. The solution can be accurately fitted by a curve of the form

|

|

(3.20) |

where

|

|

(3.21) |

Thornton et al. [64] give the appropriate values of these parameters for the linear and the Hertzian contact models.
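The split of the normal force into an elastic Hertzian part and a viscous part can be illustrated with a short sketch. It assumes the classical Hertz force for two elastic spheres, $\frac{4}{3} E_{eq} \sqrt{R_{eq}}\, \delta_n^{3/2}$, written here through a secant stiffness `k_n`, and a damping coefficient equal to a fraction `xi` of the critical damping $2\sqrt{m_{eq} k_n}$. The function name, the argument names and the clamping of the total force to non-negative values are assumptions made for this example.

```python
import math

def hertz_normal_force(delta_n, delta_n_dot, r_eq, e_eq, m_eq, xi):
    """Normal contact force: Hertzian elastic part plus viscous damping."""
    if delta_n <= 0.0:
        return 0.0  # spheres are not touching
    # Secant stiffness such that (2/3) * k_n * delta_n equals the Hertz force.
    k_n = 2.0 * e_eq * math.sqrt(r_eq * delta_n)
    f_elastic = (2.0 / 3.0) * k_n * delta_n
    # Viscous part: fraction xi of the critical damping 2 * sqrt(m * k).
    c_n = 2.0 * xi * math.sqrt(m_eq * k_n)
    f_damping = c_n * delta_n_dot
    # Assumption: no tensile (attractive) normal force is allowed.
    return max(f_elastic + f_damping, 0.0)
```

With `xi = 0` the function reduces to the purely elastic Hertz force; a positive indentation rate (approaching particles) increases the force, while a negative one reduces it.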

3.2.3.2 Tangential contact force calculation within the Deresiewicz and Mindlin theory

The elastic tangential force $F_{te}^{n+1}$ at time step $n+1$ depends on the previous elastic tangential force $F_{te}^{n}$ at time step $n$. The tangential stiffness $K_t$ differs depending on whether the normal force is increasing (loading phase) or decreasing (unloading phase). In the loading phase, the relationship between the elastic tangential force and the relative tangential displacement is defined through a regularised Coulomb model. In the unloading phase, the elastic tangential force must be reduced because the contact area shrinks, which implies that the previous tangential force can no longer be supported.

$$F_{te}^{n+1} = \begin{cases} F_{te}^{n} + K_t^{n+1}\,\Delta s & \text{if } \Delta F_n \geq 0 \text{ (loading)} \\[4pt] F_{te}^{n}\,\dfrac{K_t^{n+1}}{K_t^{n}} + K_t^{n+1}\,\Delta s & \text{if } \Delta F_n < 0 \text{ (unloading)} \end{cases} \tag{3.22}$$

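The increment of tangential displacement $\Delta s$ is computed by integrating over the time step $\Delta t$ the relative displacement of the point of contact in the local frame, due to the translation and rotation of the particles:

$$\Delta s = v_{rel,t}\,\Delta t \tag{3.23}$$

where $v_{rel,t}$ is the tangential component of the relative velocity at the point of contact.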

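For the calculation of the tangential contact force $F_t$, not only the elastic contact force $F_{te}$ but also the damping contact force $F_{td}$ should be considered. The damping coefficient $c_t$ is, as for the normal direction, a fraction $\xi$ of the critical damping, now evaluated with the tangential stiffness $K_t$ and the equivalent mass $m_{eq}$:

$$c_t = 2\,\xi\sqrt{m_{eq} K_t} \tag{3.24}$$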

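After calculating the elastic and damping tangential forces at time step $n+1$, it has to be ensured that the total tangential force does not exceed the Coulomb friction limit, given by the friction coefficient $\mu$ and the normal force $F_n$:

$$F_t^{n+1} = F_{te}^{n+1} + F_{td}^{n+1} \tag{3.25}$$

$$\left|F_t^{n+1}\right| \leq \mu\,F_n^{n+1} \tag{3.26}$$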
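The Coulomb check described above can be sketched as follows. This is a generic scalar illustration, not the exact update used in the code discussed in this work: the function name `cap_tangential_force` and the returned `sliding` flag are hypothetical, and the force is simply rescaled to the friction limit when it is exceeded.

```python
import math

def cap_tangential_force(f_t_elastic, f_t_damping, f_n, mu):
    """Return (tangential force, sliding flag) after the Coulomb check."""
    f_t = f_t_elastic + f_t_damping
    limit = mu * abs(f_n)              # Coulomb friction limit
    if abs(f_t) > limit:
        # Sliding: keep the direction, cap the magnitude at the limit.
        return math.copysign(limit, f_t), True
    return f_t, False                  # sticking: force is admissible
```

A trial force of 9 against a limit of 5 is cut back to 5 and flagged as sliding, whereas a trial force of 2.5 is returned unchanged.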

3.2.3.3 Contact parameters of the Hertz-Mindlin and Deresiewicz theory

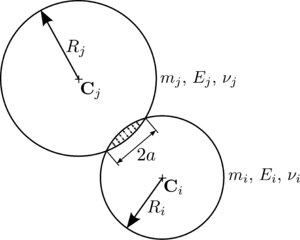

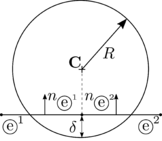

Up to this point, forces have been expressed as a function of the contact parameters. However, when the numerical model is applied to a real case, those contact parameters must be computed from the material properties and the geometry of the particles. For the calculation of the contact stiffness, the Hertz theory considers the curvature of the contacting surfaces [65]. When two particles with radii $r_i$ and $r_j$ are in contact, the contact area is a circle of radius $a$, as shown in Figure 23.

Figure 23: Hertz contact model scheme.

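This leads to the definition of the normal and tangential contact stiffnesses $K_n$ and $K_t$ [66], written in terms of the equivalent Young's modulus $E_{eq}$, shear modulus $G_{eq}$ and radius $R_{eq}$ of the contacting pair and of the indentation $\delta_n$:

$$K_n = 2\,E_{eq}\sqrt{R_{eq}\,\delta_n}, \qquad K_t = 8\,G_{eq}\sqrt{R_{eq}\,\delta_n}$$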

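Meanwhile, the damping parameters are defined from the damping fraction $\xi$, the equivalent mass $m_{eq}$ and the contact stiffnesses $K_n$ and $K_t$ as:

$$c_n = 2\,\xi\sqrt{m_{eq} K_n}, \qquad c_t = 2\,\xi\sqrt{m_{eq} K_t}$$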

Considering the contact between the two spheres $i$ and $j$ from Figure 23, if their materials have different Young's moduli ($E_i$, $E_j$), Poisson's ratios ($\nu_i$, $\nu_j$), radii ($r_i$, $r_j$) and damping coefficients ($\xi_i$, $\xi_j$), the equivalent contact properties are computed as:

|

The value of the shear modulus $G$ for each particle can be obtained from its Young's modulus $E$ and its Poisson's ratio $\nu$:

$$G = \frac{E}{2\,(1+\nu)} \tag{3.30}$$
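As an illustration of how contact parameters follow from material properties and geometry, the sketch below uses the textbook Hertz-Mindlin expressions for two elastic spheres: equivalent radius and moduli, contact radius $a = \sqrt{R_{eq}\,\delta_n}$, and stiffnesses $2 E_{eq} a$ and $8 G_{eq} a$. These standard formulas are assumed here for the example and the function names are hypothetical; the equivalent damping property is left out because its definition cannot be inferred from the text.

```python
import math

def shear_modulus(young, poisson):
    """Shear modulus of an isotropic elastic material."""
    return young / (2.0 * (1.0 + poisson))

def equivalent_properties(r_i, r_j, e_i, e_j, nu_i, nu_j):
    """Return (R_eq, E_eq, G_eq) for a pair of contacting spheres."""
    r_eq = r_i * r_j / (r_i + r_j)
    e_eq = 1.0 / ((1.0 - nu_i ** 2) / e_i + (1.0 - nu_j ** 2) / e_j)
    g_i = shear_modulus(e_i, nu_i)
    g_j = shear_modulus(e_j, nu_j)
    g_eq = 1.0 / ((2.0 - nu_i) / g_i + (2.0 - nu_j) / g_j)
    return r_eq, e_eq, g_eq

def hertz_mindlin_stiffness(delta_n, r_eq, e_eq, g_eq):
    """Return (K_n, K_t) for a given indentation delta_n > 0."""
    a = math.sqrt(r_eq * delta_n)      # radius of the circular contact area
    return 2.0 * e_eq * a, 8.0 * g_eq * a
```

Both stiffnesses grow with the square root of the indentation, which is what makes the Hertzian contact law non-linear.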

3.3 Time integration

As introduced in Section 1.1, equations 3.2 and 3.3 are integrated in time using an explicit scheme, in which the information at the previous step suffices to predict the solution at the next step. Explicit integration schemes require the time step to be below a certain limit in order to remain numerically stable. This critical time step depends on the material properties, the geometry of the particles and the constitutive model [67].
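To give an idea of the orders of magnitude involved, the sketch below uses the classical stability bound of the central difference scheme for an undamped linear mass-spring system, $\Delta t_{cr} = 2\sqrt{m/k}$. This is a simplifying assumption made for illustration and not necessarily the criterion of [67]; the function names and the `safety_factor` argument are hypothetical.

```python
import math

def critical_time_step(mass, stiffness):
    """Stability limit 2/omega of an undamped linear mass-spring system."""
    return 2.0 * math.sqrt(mass / stiffness)

def stable_time_step(masses, stiffnesses, safety_factor=0.1):
    """Conservative step: lightest particle, stiffest contact, scaled down."""
    dt_cr = critical_time_step(min(masses), max(stiffnesses))
    return safety_factor * dt_cr
```

Because the bound scales with $\sqrt{m/k}$, small and stiff particles are the ones that dictate the time step of the whole simulation.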

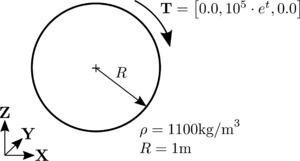

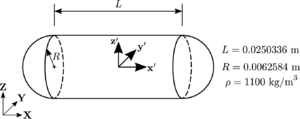

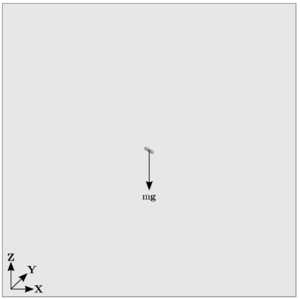

Several integration schemes of different order exist. In this work, low-order (first and second) integration schemes are used because, with such small computational time steps, higher-order methods would not increase the accuracy significantly.

3.3.1 Explicit integration methods

The explicit methods described below are derived from a Taylor series approximation of the equations that describe the problem (the second-order differential equations of motion 3.2 and 3.3).

$$f(t + \Delta t) = f(t) + \dot{f}(t)\,\Delta t + \frac{1}{2}\,\ddot{f}(t)\,\Delta t^2 + \dots \tag{3.31}$$

It should be mentioned that the time integration of equation 3.3 is only applicable to spherical particles, because the inertia tensor of a sphere is independent of its orientation. Furthermore, because of the symmetry of the sphere, the moment of inertia about any diameter, for a sphere of mass $m$ and radius $r$, is

$$I = \frac{2}{5}\,m\,r^2 \tag{3.32}$$

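and the angular acceleration $\dot{\boldsymbol{\omega}}$ can be directly computed from the torque $\mathbf{T}$ acting on the particle

$$\dot{\boldsymbol{\omega}} = \frac{\mathbf{T}}{I} \tag{3.33}$$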
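Since the moment of inertia of a sphere is a scalar, the angular acceleration follows from a component-wise division. A minimal sketch, with hypothetical function names:

```python
def sphere_inertia(mass, radius):
    """Moment of inertia of a solid sphere about any diameter."""
    return 0.4 * mass * radius ** 2

def angular_acceleration(torque, mass, radius):
    """Angular acceleration vector produced by a torque on a solid sphere."""
    inertia = sphere_inertia(mass, radius)
    return tuple(t / inertia for t in torque)
```

For a non-spherical body the inertia is a tensor that changes with orientation in the global frame, so this shortcut no longer applies.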

Since the orientation of a spherical particle is not a key parameter, the following explicit integration schemes are acceptable for calculating rotation increments. However, the weaknesses of these schemes are highlighted in Section 5.3.1.

3.3.1.1 Forward Euler scheme

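The forward Euler scheme is a first-order approximation of the displacements and velocities.

$$\dot{\mathbf{u}}^{n+1} = \dot{\mathbf{u}}^{n} + \ddot{\mathbf{u}}^{n}\,\Delta t, \qquad \mathbf{u}^{n+1} = \mathbf{u}^{n} + \dot{\mathbf{u}}^{n}\,\Delta t$$

Regarding the rotational motion, the angular velocity is also approximated using a first-order approach

$$\boldsymbol{\omega}^{n+1} = \boldsymbol{\omega}^{n} + \dot{\boldsymbol{\omega}}^{n}\,\Delta t \tag{3.36}$$

as is the vector of incremental rotation

$$\Delta\boldsymbol{\theta} = \boldsymbol{\omega}^{n}\,\Delta t \tag{3.37}$$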

3.3.1.2 Symplectic Euler scheme

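The Symplectic Euler scheme is a modification of the previous method which uses a backward difference approximation for the derivative of the position and for the increment of rotation, so that both are advanced with the velocities already updated to step $n+1$.

$$\dot{\mathbf{u}}^{n+1} = \dot{\mathbf{u}}^{n} + \ddot{\mathbf{u}}^{n}\,\Delta t, \qquad \mathbf{u}^{n+1} = \mathbf{u}^{n} + \dot{\mathbf{u}}^{n+1}\,\Delta t$$

$$\boldsymbol{\omega}^{n+1} = \boldsymbol{\omega}^{n} + \dot{\boldsymbol{\omega}}^{n}\,\Delta t, \qquad \Delta\boldsymbol{\theta} = \boldsymbol{\omega}^{n+1}\,\Delta t$$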

3.3.1.3 Taylor scheme

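The Taylor scheme applies a first-order integrator for the linear and angular velocities and a second-order integrator for the position and the rotation increment.

$$\dot{\mathbf{u}}^{n+1} = \dot{\mathbf{u}}^{n} + \ddot{\mathbf{u}}^{n}\,\Delta t, \qquad \mathbf{u}^{n+1} = \mathbf{u}^{n} + \dot{\mathbf{u}}^{n}\,\Delta t + \frac{1}{2}\,\ddot{\mathbf{u}}^{n}\,\Delta t^2$$

$$\boldsymbol{\omega}^{n+1} = \boldsymbol{\omega}^{n} + \dot{\boldsymbol{\omega}}^{n}\,\Delta t, \qquad \Delta\boldsymbol{\theta} = \boldsymbol{\omega}^{n}\,\Delta t + \frac{1}{2}\,\dot{\boldsymbol{\omega}}^{n}\,\Delta t^2$$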
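The three one-step schemes described so far differ only in which values are used to advance the position. The sketch below applies them to a one-dimensional undamped unit oscillator (acceleration $-u$), a test problem chosen for this example and not taken from the benchmarks cited in this work; the function names are hypothetical.

```python
def integrate(scheme, u, v, accel, dt, n_steps):
    """Advance position u and velocity v with the selected explicit scheme."""
    for _ in range(n_steps):
        a = accel(u)                       # acceleration at the current step
        if scheme == "forward_euler":
            u, v = u + v * dt, v + a * dt  # both updates use old values
        elif scheme == "symplectic_euler":
            v = v + a * dt
            u = u + v * dt                 # position uses the new velocity
        elif scheme == "taylor":
            u, v = u + v * dt + 0.5 * a * dt * dt, v + a * dt
        else:
            raise ValueError(scheme)
    return u, v

def energy(u, v):
    """Total energy of a unit-mass, unit-stiffness oscillator."""
    return 0.5 * u * u + 0.5 * v * v

def energy_ratio(scheme, dt=0.01, n_steps=10000):
    """Final energy divided by initial energy, starting from u=1, v=0."""
    u, v = integrate(scheme, 1.0, 0.0, lambda x: -x, dt, n_steps)
    return energy(u, v) / energy(1.0, 0.0)
```

On this problem the forward Euler and Taylor schemes steadily gain energy, while the Symplectic Euler scheme keeps it close to its initial value, which hints at why the choice of integrator matters for long simulations.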

3.3.1.4 Velocity Verlet scheme

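This algorithm is also known as the Central Differences algorithm [68]. In this approach, the approximate velocity at the intermediate instant $t^{n+1/2}$ is computed first and then used to calculate the displacement over the time step.

$$\dot{\mathbf{u}}^{n+1/2} = \dot{\mathbf{u}}^{n} + \frac{1}{2}\,\ddot{\mathbf{u}}^{n}\,\Delta t, \qquad \mathbf{u}^{n+1} = \mathbf{u}^{n} + \dot{\mathbf{u}}^{n+1/2}\,\Delta t$$

The velocity and the displacement are then used to estimate the force $\mathbf{F}$ in the following time step, and with it the acceleration of the particle of mass $m$.

$$\ddot{\mathbf{u}}^{n+1} = \frac{\mathbf{F}^{n+1}\left(\mathbf{u}^{n+1}, \dot{\mathbf{u}}^{n+1/2}\right)}{m} \tag{3.48}$$

Finally, the velocity is updated.

$$\dot{\mathbf{u}}^{n+1} = \dot{\mathbf{u}}^{n+1/2} + \frac{1}{2}\,\ddot{\mathbf{u}}^{n+1}\,\Delta t$$

For the rotational motion, the procedure is the same as for the translational motion.

$$\boldsymbol{\omega}^{n+1/2} = \boldsymbol{\omega}^{n} + \frac{1}{2}\,\dot{\boldsymbol{\omega}}^{n}\,\Delta t, \qquad \Delta\boldsymbol{\theta} = \boldsymbol{\omega}^{n+1/2}\,\Delta t$$

The torque $\mathbf{T}$ in the following time step is estimated, and with it the angular acceleration of the particle with moment of inertia $I$.

$$\dot{\boldsymbol{\omega}}^{n+1} = \frac{\mathbf{T}^{n+1}}{I} \tag{3.53}$$

Finally, the angular velocity is updated.

$$\boldsymbol{\omega}^{n+1} = \boldsymbol{\omega}^{n+1/2} + \frac{1}{2}\,\dot{\boldsymbol{\omega}}^{n+1}\,\Delta t$$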
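The sequence of half-step velocity, displacement, force evaluation and final velocity update can be sketched for a single translational degree of freedom as follows. The function name and the `accel(u, v)` callback, which stands for the force evaluation divided by the mass, are hypothetical.

```python
def velocity_verlet(u, v, accel, dt, n_steps):
    """Integrate one degree of freedom with the Velocity Verlet scheme."""
    a = accel(u, v)                  # acceleration at the initial state
    for _ in range(n_steps):
        v_half = v + 0.5 * a * dt    # velocity at the intermediate instant
        u = u + v_half * dt          # displacement over the full step
        a = accel(u, v_half)         # new forces from updated state
        v = v_half + 0.5 * a * dt    # complete the velocity update
    return u, v
```

Applied to an undamped unit oscillator, `velocity_verlet(1.0, 0.0, lambda u, v: -u, 0.01, 10000)` stays close to the analytical solution $\cos t$ and keeps the energy nearly constant, at the cost of a single force evaluation per step.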

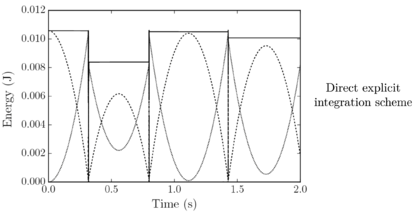

3.3.2 Accuracy, stability and computational cost

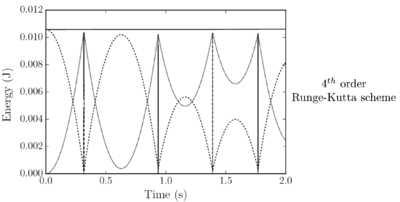

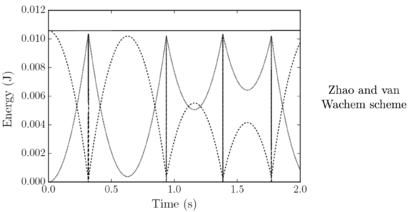

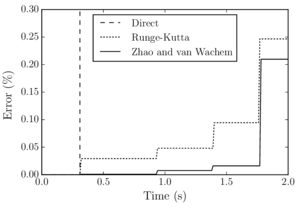

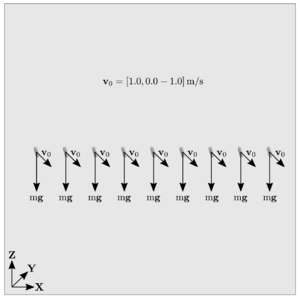

Santasusana [15] developed different benchmark cases to evaluate which scheme is the best for the translational motion. That study concluded that the Symplectic Euler and Velocity Verlet schemes approximate the indentation accurately, while the Velocity Verlet scheme is superior to the others regarding the velocity. The numerical stability tests showed that the Velocity Verlet scheme, being a second-order scheme, is the only one with an acceptable performance at the limit of the critical time step.

Concerning the computational cost, the difference in computational time between the quickest scheme (Forward Euler) and the slowest (Velocity Verlet) was found to be lower than 3%.

It was therefore concluded that the best performance is obtained with the Velocity Verlet scheme, which is the scheme used in the calculations throughout this work.

Regarding the integration of the rotational motion, these schemes can be assumed accurate enough for calculating rotation increments of spherical particles in most of the cases. However, if the orientation of the particle needs to be tracked, the use of specific schemes would be recommended. Those numerical schemes are presented in Section 5.3.1.

4 Interaction of spherical particles with rigid boundaries

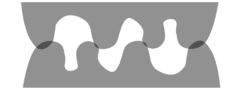

Up to this point, the basic features of the DEM with spherical particles have been explained. However, the numerical code becomes considerably more adaptable if the contact between planar boundaries and DEs can also be computed. In the case of railway ballast, the boundaries are the soil, the sleeper or, when laboratory tests are represented, the walls of the corresponding device.

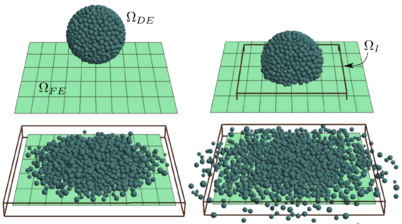

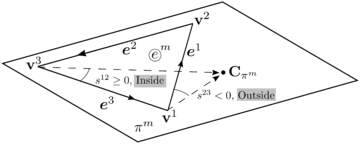

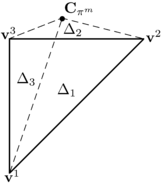

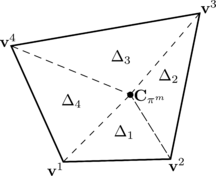

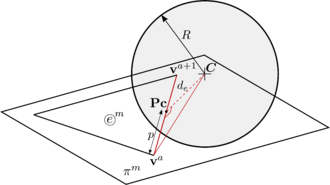

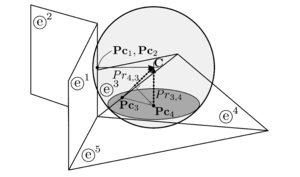

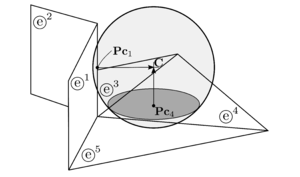

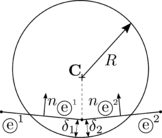

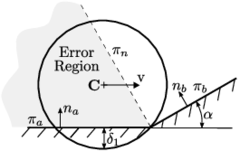

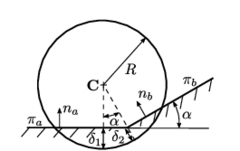

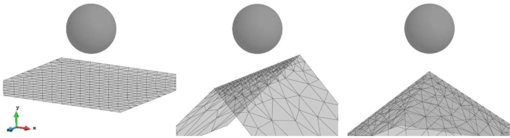

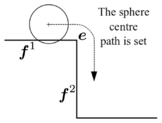

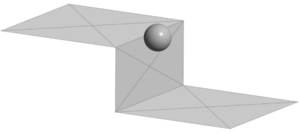

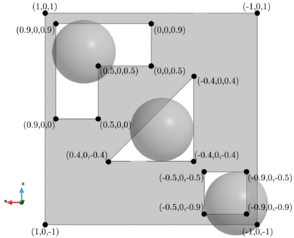

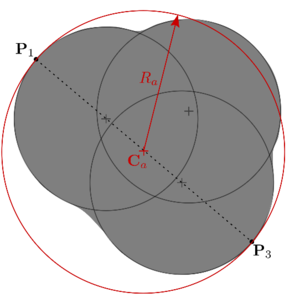

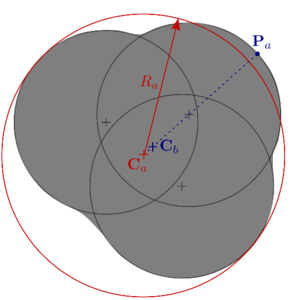

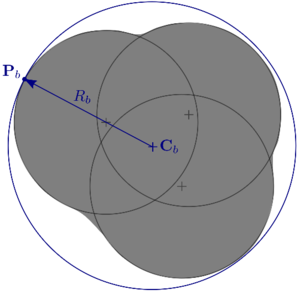

Regarding the characterisation of railway ballast, most of the deformation occurs in the layer of granular material and only a small deformation occurs in the boundaries interacting with it. Thus, a new methodology for the treatment of the contact interaction between spherical DEs and rigid boundaries is developed. The parts of the rigid body are defined by surfaces meshed with a Finite Element-like discretisation. The detection and calculation of contacts between those DEs and the discretised boundaries is not straightforward and, in recent years, it has been addressed with different approaches.

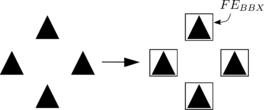

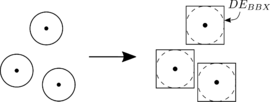

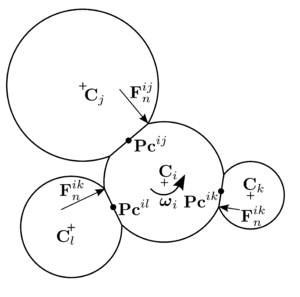

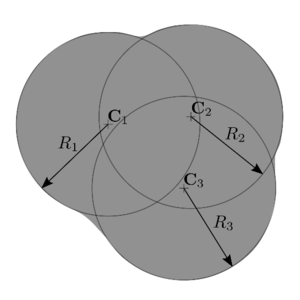

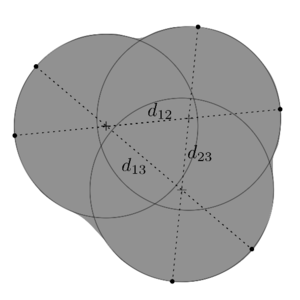

The algorithm presented in this chapter considers the contact of DEs with the geometric primitives of a Finite Element (FE) mesh (facets, edges or vertices). To do so, the original hierarchical method presented by Horner in 2001 [61] is extended with a new insight, leading to a robust, fast and accurate 3D contact algorithm which is fully parallelisable. The implementation of the method is designed primarily for triangles and quadrilaterals. If the boundaries are discretised with other types of geometry, the method can easily be extended to higher-order planar convex polygons.

In the following sections, a review of the state of the art of the existing methods for modelling the contact between DEs and rigid boundaries is presented. Next, the proposed strategy for the DE/FE contact search is described. Finally, some validation analyses are shown, together with examples of performance in critical situations (where most of the methods in the literature would fail).

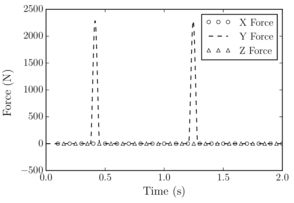

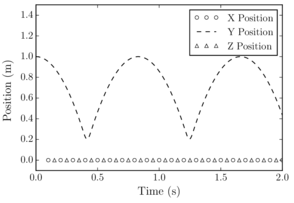

Most of the ideas presented in this chapter are based on the published article "The double hierarchy method. A parallel 3D contact method for the interaction of spherical particles with rigid FE boundaries using the DEM" [69].

4.1 State of the art

Particle-solid interaction problems have been addressed with different approaches. Among the simplest ones is the glued-sphere approach [70], which approximates any complex geometry (i.e. a rigid body or boundary surface) by a collection of spherical particles, retaining the simplicity of particle-to-particle contact interaction. This approach, however, is geometrically inaccurate and computationally intensive due to the introduction of an excessive number of particles. Another simple approach (used in some numerical codes, e.g., ABAQUS) is to define the boundaries as analytical surfaces. This approach is computationally inexpensive, but it can only be applied in certain specific scenarios, where the use of infinite surfaces does not disturb the calculation. A more complex approach, which combines accuracy and versatility, is to resolve the contact of particles (typically spheres) with a finite element boundary mesh. These methods take into account the possibility of contact with the primitives of the FE mesh surface, i.e., facet, edge or vertex contact.
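The analytical-surface approach is easy to picture for the simplest case, an infinite plane. The sketch below is a generic illustration and is not taken from any of the cited codes: the plane is described by a point and a normal pointing towards the side where the particles live, and the function name is hypothetical.

```python
import math

def sphere_plane_contact(centre, radius, plane_point, plane_normal):
    """Return (indentation, contact point) or None if there is no contact."""
    length = math.sqrt(sum(c * c for c in plane_normal))
    n = tuple(c / length for c in plane_normal)      # unit plane normal
    # Signed distance from the plane to the sphere centre along the normal.
    distance = sum((c - p) * k for c, p, k in zip(centre, plane_point, n))
    indentation = radius - distance
    if indentation <= 0.0:
        return None
    # Projection of the sphere centre onto the plane.
    contact_point = tuple(c - distance * k for c, k in zip(centre, n))
    return indentation, contact_point
```

The check costs a single dot product per particle, which explains why this approach is inexpensive, but the plane has no edges, so it cannot represent a boundary of finite extent.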

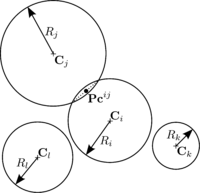

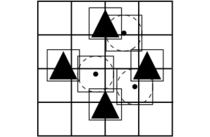

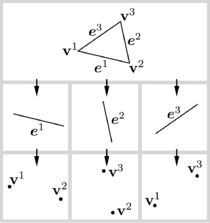

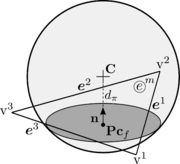

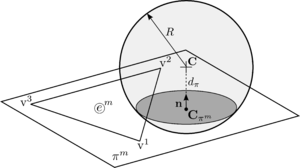

Horner et al. [61] and Kremmer et al. [71] developed the first hierarchical resolution algorithms for contact problems between spherical particles and triangular elements, while Zang et al. [72] proposed similar approaches accounting for quadrilateral facets. Dang et al. [73] upgraded the method introducing a numerical correction to improve smoothness and stability. Su et al. [74] developed a complex algorithm involving polygonal facets under the name of RIGID method which includes an elimination procedure to resolve the contact in different non-smooth contact situations. This approach, however, does not consider the cases when a spherical particle might be in contact with the entities of different surfaces at the same time (multiple contacts) leading to an inaccurate contact interaction. The upgraded RIGID-II method presented later by Su et al. [75] and also the method proposed by Hu et al. [76] account for the multiple contact situations, but they have a complex elimination procedure with many different contact scenarios to distinguish, which is difficult to code in practice. Recently, Chen et al. [77] presented a very simple and accurate algorithm which covers many situations. Their elimination procedure, however, requires a special database which can not be computed in parallel.

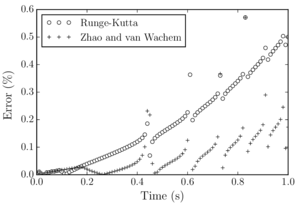

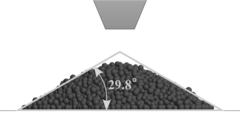

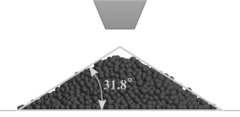

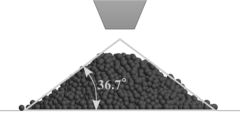

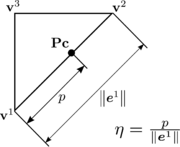

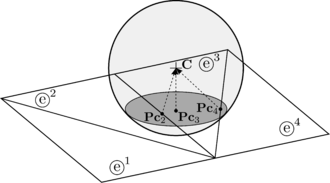

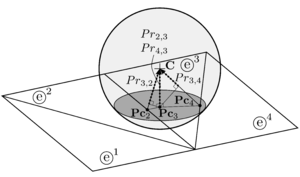

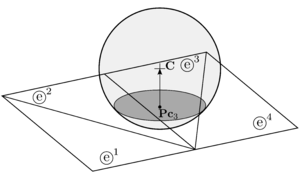

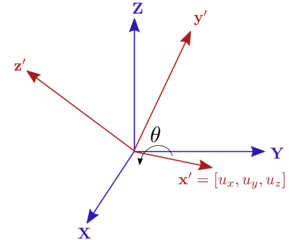

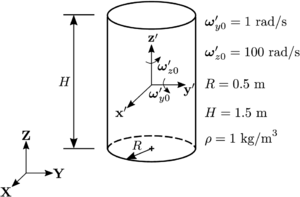

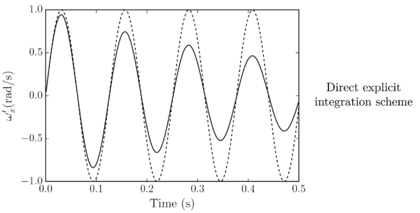

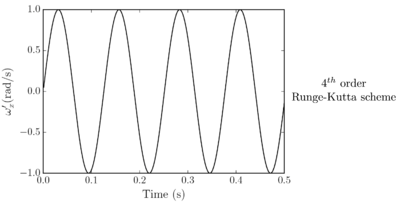

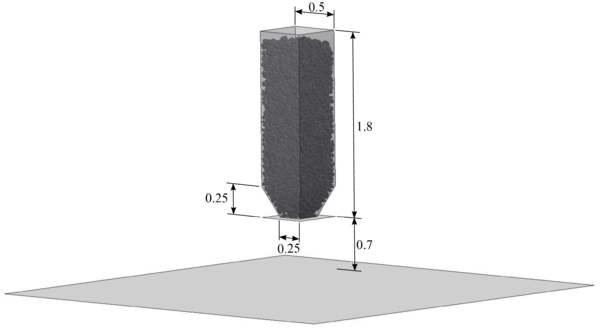

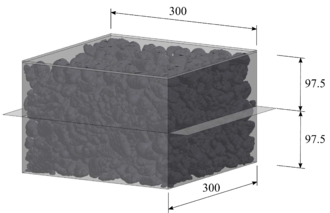

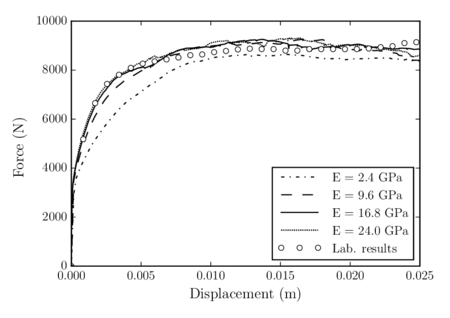

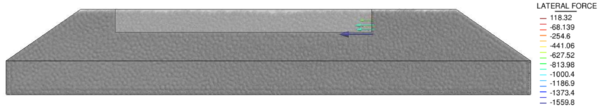

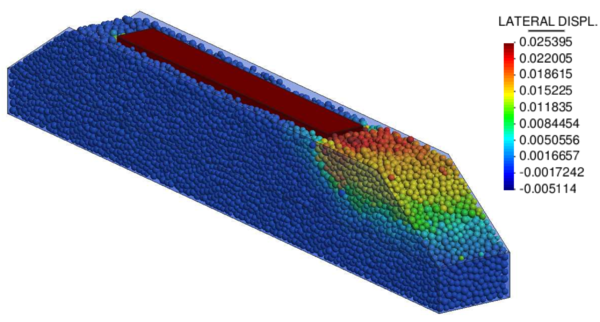

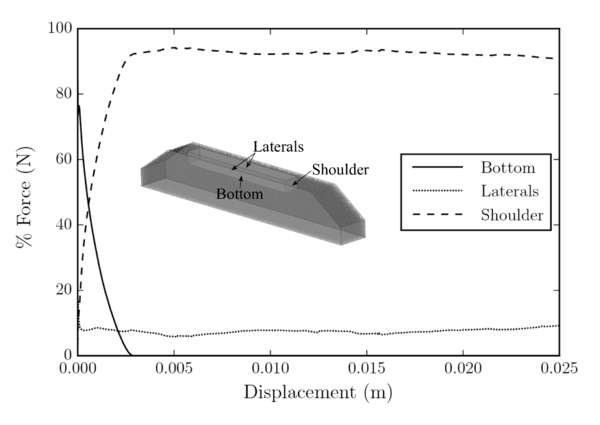

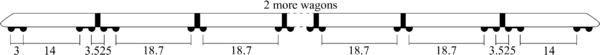

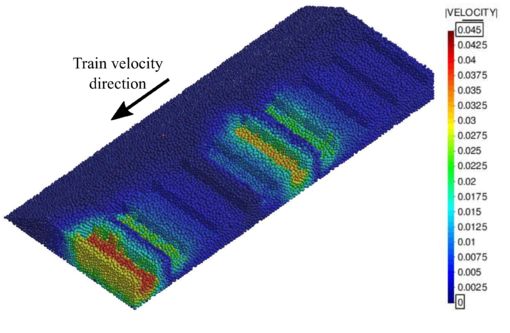

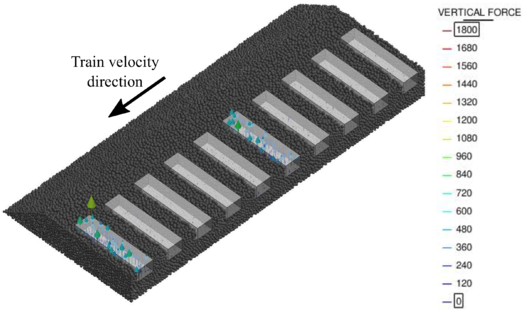

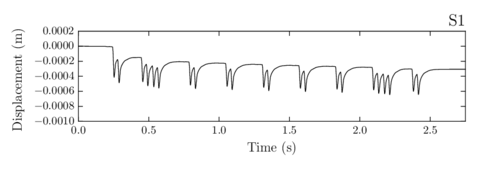

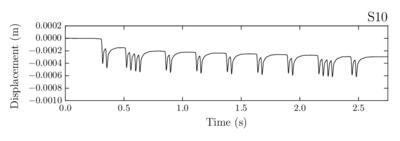

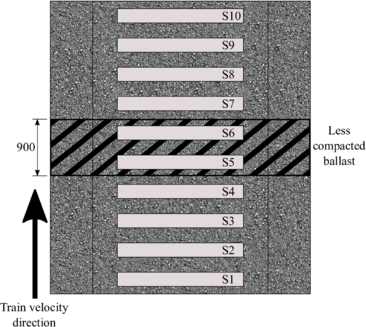

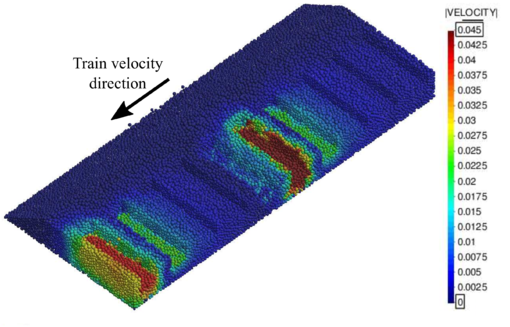

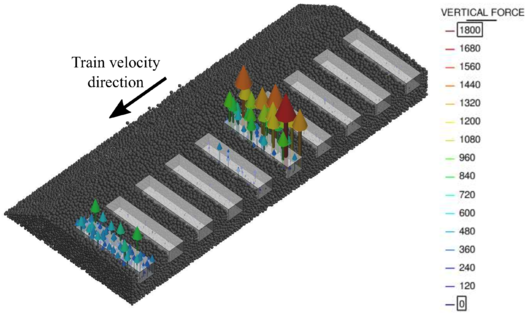

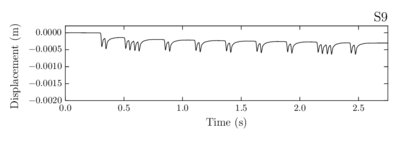

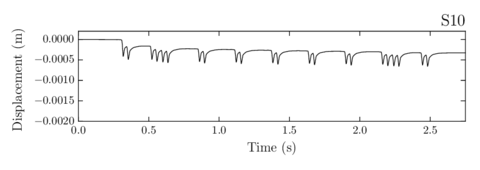

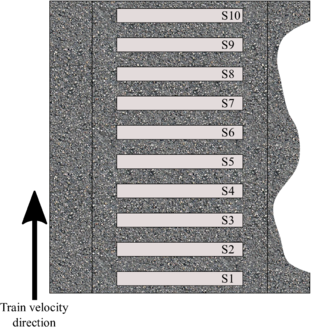

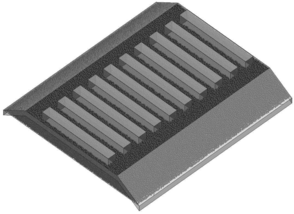

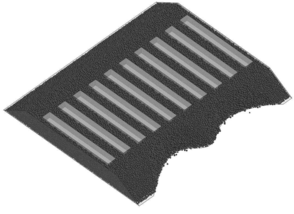

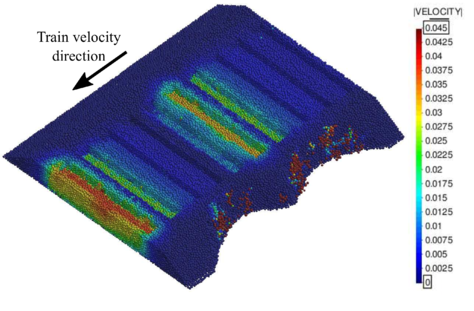

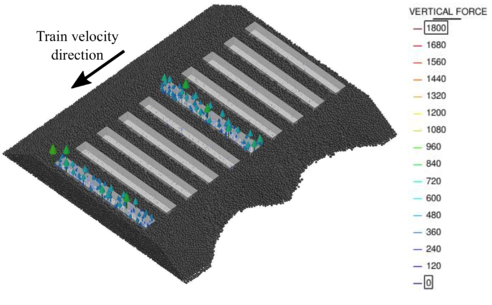

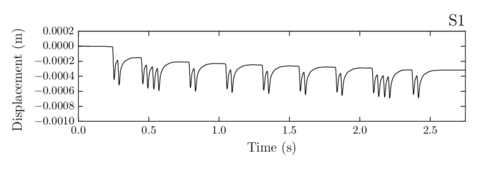

Therefore, the proposed Double Hierarchy method consists of a simple contact algorithm based on the FE boundary approach. The method is specially designed to resolve efficiently the intersection of spheres with triangles and planar quadrilaterals, but it can also work with any other higher-order planar convex polygon. A two-layer hierarchy is applied, upgrading the classical hierarchy method presented by Horner [61]: a hierarchy on contact type followed by a hierarchy on distance. The first one classifies the type of contact (facet, edge or vertex) for every contacting neighbour in a hierarchical way, while the distance-based hierarchy determines which of the contacts found are valid or relevant and which ones have to be removed.
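To make the notion of contact type concrete, the sketch below classifies the contact of a sphere with a single triangle as facet, edge or vertex using a standard closest-point-on-triangle test. It only illustrates the first idea (classification by contact type) for one element; it is not the Double Hierarchy algorithm itself, it does not include the distance-based elimination between neighbouring elements, and all function names are hypothetical.

```python
import math

def sub(a, b): return tuple(x - y for x, y in zip(a, b))
def add(a, b): return tuple(x + y for x, y in zip(a, b))
def scale(a, s): return tuple(s * x for x in a)
def dot(a, b): return sum(x * y for x, y in zip(a, b))

def closest_point_on_triangle(p, a, b, c):
    """Return (closest point to p on triangle abc, feature type)."""
    ab, ac, ap = sub(b, a), sub(c, a), sub(p, a)
    d1, d2 = dot(ab, ap), dot(ac, ap)
    if d1 <= 0.0 and d2 <= 0.0:
        return a, "vertex"
    bp = sub(p, b)
    d3, d4 = dot(ab, bp), dot(ac, bp)
    if d3 >= 0.0 and d4 <= d3:
        return b, "vertex"
    vc = d1 * d4 - d3 * d2
    if vc <= 0.0 and d1 >= 0.0 and d3 <= 0.0:
        return add(a, scale(ab, d1 / (d1 - d3))), "edge"        # edge ab
    cp = sub(p, c)
    d5, d6 = dot(ab, cp), dot(ac, cp)
    if d6 >= 0.0 and d5 <= d6:
        return c, "vertex"
    vb = d5 * d2 - d1 * d6
    if vb <= 0.0 and d2 >= 0.0 and d6 <= 0.0:
        return add(a, scale(ac, d2 / (d2 - d6))), "edge"        # edge ac
    va = d3 * d6 - d5 * d4
    if va <= 0.0 and (d4 - d3) >= 0.0 and (d5 - d6) >= 0.0:
        w = (d4 - d3) / ((d4 - d3) + (d5 - d6))
        return add(b, scale(sub(c, b), w)), "edge"              # edge bc
    denom = 1.0 / (va + vb + vc)
    v, w = vb * denom, vc * denom
    return add(a, add(scale(ab, v), scale(ac, w))), "facet"     # interior

def sphere_triangle_contact(centre, radius, a, b, c):
    """Return (feature type, indentation) or None if there is no contact."""
    q, feature = closest_point_on_triangle(centre, a, b, c)
    d = sub(centre, q)
    dist = math.sqrt(dot(d, d))
    if dist >= radius:
        return None
    return feature, radius - dist
```

In a mesh, the same sphere generally touches several neighbouring elements at once, so a per-element test like this one returns redundant facet, edge and vertex contacts; deciding which of them to keep is the role of the second, distance-based layer of the method.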