m (Giuliotoscani moved page Draft Toscani 720756083 to Review 161341611808) |

(Tag: Visual edit) |

||

| Line 1: | Line 1: | ||

| − | ==Chilling Out Data Chaos: Leveraging Cold Chain Principles and the Triple Helix Framework== | + | =='''Chilling Out Data Chaos: Leveraging Cold Chain Principles and the Triple Helix Framework'''== |

| − | ==Giulio Toscani== | + | ====Giulio Toscani==== |

| − | ABSTRACT As big data increasingly influences decision-making, ensuring data consistency and reliability has become essential. This paper explores how the cold chain’s singular focus on temperature can serve as a model for building unified, high-quality, and sustainable data systems. Despite the primary emphasis on machine learning of literature, this grounded in theory research, investigates how big data quality impacts technological execution and decision-making across industries. Drawing on 65 expert interviews, the study’s final discussion highlights the potential of the Triple Helix model—collaboration between government, industry, and society—as a framework for creating unified data systems. The cold chain, with its focus on temperature as the sole variable, exemplifies how such collaboration can enhance data reliability. Key challenges identified include data governance, operational inconsistencies, and barriers to collaboration. The paper proposes solutions such as professional training and the development of collaborative infrastructures to improve data dependability and support sustainable decision-making across industries. | + | ABSTRACT |

| + | |||

| + | As big data increasingly influences decision-making, ensuring data consistency and reliability has become essential. This paper explores how the cold chain’s singular focus on temperature can serve as a model for building unified, high-quality, and sustainable data systems. Although the literature places its primary emphasis on machine learning, this grounded-theory research investigates how big data quality impacts technological execution and decision-making across industries. Drawing on 65 expert interviews, the study’s final discussion highlights the potential of the Triple Helix model (collaboration between government, industry, and society) as a framework for creating unified data systems. The cold chain, with its focus on temperature as the sole variable, exemplifies how such collaboration can enhance data reliability. Key challenges identified include data governance, operational inconsistencies, and barriers to collaboration. The paper proposes solutions such as professional training and the development of collaborative infrastructures to improve data dependability and support sustainable decision-making across industries. | ||

INDEX TERMS Outcome, Data expert, Big Data, Value deterioration, Cold Chain | INDEX TERMS Outcome, Data expert, Big Data, Value deterioration, Cold Chain | ||

| − | =1. INTRODUCTION= | + | ===1. INTRODUCTION=== |

In recent times, the proliferation of digital technologies has led to a surge in big data accumulation, sparking interest among professionals and scholars. Organizations leverage big data for insights and a competitive edge, but its size and complexity present challenges (Fan, Fang & Han, 2014). Concerns include data quality, emphasizing the role of subjective choices by researchers (Naeem et al., 2022). This research contrasts human design with unbroken technological execution in the cold-chain sector, analyzing value deterioration over time (Singh et al., 2018) and its impact on sustainability (Farrukh & Holgado, 2020). Miller and Mork's (2013) data value-chain model guides the study, examining decision-making processes from a value-rational approach. The focus is on how data experts navigate decision-making processes and behaviors within this context (Snihur & Zott, 2020). More research is needed on how Data experts' thinking patterns and behaviors relate to data deterioration, aiming to provide insights for optimal data use (Rahwan et al., 2019). | In recent times, the proliferation of digital technologies has led to a surge in big data accumulation, sparking interest among professionals and scholars. Organizations leverage big data for insights and a competitive edge, but its size and complexity present challenges (Fan, Fang & Han, 2014). Concerns include data quality, emphasizing the role of subjective choices by researchers (Naeem et al., 2022). This research contrasts human design with unbroken technological execution in the cold-chain sector, analyzing value deterioration over time (Singh et al., 2018) and its impact on sustainability (Farrukh & Holgado, 2020). Miller and Mork's (2013) data value-chain model guides the study, examining decision-making processes from a value-rational approach. The focus is on how data experts navigate decision-making processes and behaviors within this context (Snihur & Zott, 2020). 
More research is needed on how Data experts' thinking patterns and behaviors relate to data deterioration, aiming to provide insights for optimal data use (Rahwan et al., 2019). | ||

| Line 17: | Line 19: | ||

RQ: How do Data experts' decision-making patterns and behaviors influence data value? | RQ: How do Data experts' decision-making patterns and behaviors influence data value? | ||

| − | =2. LITERATURE REVIEW = | + | ===2. LITERATURE REVIEW === |

In the present era, the widespread adoption and advancement of digital technologies have greatly amplified the accumulation of big data, extensive volumes of data valued for their efficacy in enhancing organizational performance (Al-Dmour et al., 2023). Firms utilizing AI exhibit distinctive traits: a unique operational framework prioritizing data, integration of AI into core processes, real-time decision-making through experimentation, and granular forecasting. They proactively learn from customer feedback via real-time experiments, enhancing offerings through continual data evaluation (Iansiti & Lakhani, 2020). Big data has accordingly attracted extensive attention and scholarly curiosity from experts and researchers across diverse academic fields (Abbasi, Sarker, & Chiang, 2016). The variability of human conditions can undermine the outcome of human decisions; a machine learning decision-making tool would be less prone to such variation, but still affected by human biases (Obermeyer & Lee, 2017; Allen & Choudury, 2022). However, the findings indicate that companies have implemented big data analytics applications only moderately and unevenly, owing to inadequacies and disparities in their organizational and technological capabilities (Al-Dmour et al., 2023). Big data poses challenges due to its high dimensionality and large sample size. These challenges include noise accumulation, spurious correlations, and incidental homogeneity caused by high dimensionality. Additionally, the combination of high dimensionality and large sample size leads to issues like heavy computational costs and algorithmic instability. Furthermore, the aggregation of massive samples from multiple sources at different time points using various technologies adds complexity to the analysis (Fan, Fang & Han, 2014). 
This is important because when classification algorithms use human-generated input data that suffer from human biases (Sayogo et al., 2014), the predictions they generate may exacerbate the errors stemming from such biases and increase technical debt (Park, Jang & Lee, 2018). In the presence of variability in the bias-induced error, the impacts of bias can be mitigated, but not eliminated, even if the algorithmic design is adjusted to account for the bias (Ahsen et al., 2017). The moderation of resource capital influences the collection and storage of consumer activity records as Big Data, the extraction of insights from Big Data, and the utilization of insights to enhance dynamic/adaptive capability. Inadequate organizational alignment and member education on proactive utilization of insights can hinder effective utilization of consumer insights and impede a firm's adaptive capability (Erevelles, Fukawa, & Swayne, 2016). Bias-conscious algorithms have the potential to greatly enhance anticipated results, although the extent of improvement relies heavily on the discriminatory capacity of the information, encompassing accurate long-term strategizing. However, despite awareness of big data's advantages, top-level management often fails to prioritize it; this ranks as the second most important factor contributing to unsuccessful data outcomes, with scarcity of financial resources as the primary cause (Ahsen et al., 2017; Al-Dmour et al., 2023). Miller and Mork (2013) recommended a collaborative partnership for data collection from diverse stakeholders, aiming to optimize service delivery and quality decisions. They proposed streamlining data management activities to benefit all stakeholders and adopting a portfolio-management approach to invest in people, processes, and technology, thus maximizing the value of integrated data and improving organizational performance. 
| In the present era, the widespread adoption and advancement of digital technologies have greatly amplified the accumulation of big data, extensive volumes of data valued for their efficacy in enhancing organizational performance (Al-Dmour et al., 2023). Firms utilizing AI exhibit distinctive traits: a unique operational framework prioritizing data, integration of AI into core processes, real-time decision-making through experimentation, and granular forecasting. They proactively learn from customer feedback via real-time experiments, enhancing offerings through continual data evaluation (Iansiti & Lakhani, 2020). Big data has accordingly attracted extensive attention and scholarly curiosity from experts and researchers across diverse academic fields (Abbasi, Sarker, & Chiang, 2016). The variability of human conditions can undermine the outcome of human decisions; a machine learning decision-making tool would be less prone to such variation, but still affected by human biases (Obermeyer & Lee, 2017; Allen & Choudury, 2022). However, the findings indicate that companies have implemented big data analytics applications only moderately and unevenly, owing to inadequacies and disparities in their organizational and technological capabilities (Al-Dmour et al., 2023). Big data poses challenges due to its high dimensionality and large sample size. These challenges include noise accumulation, spurious correlations, and incidental homogeneity caused by high dimensionality. Additionally, the combination of high dimensionality and large sample size leads to issues like heavy computational costs and algorithmic instability. Furthermore, the aggregation of massive samples from multiple sources at different time points using various technologies adds complexity to the analysis (Fan, Fang & Han, 2014). 
This is important because when classification algorithms use human-generated input data that suffer from human biases (Sayogo et al., 2014), the predictions they generate may exacerbate the errors stemming from such biases and increase technical debt (Park, Jang & Lee, 2018). In the presence of variability in the bias-induced error, the impacts of bias can be mitigated, but not eliminated, even if the algorithmic design is adjusted to account for the bias (Ahsen et al., 2017). The moderation of resource capital influences the collection and storage of consumer activity records as Big Data, the extraction of insights from Big Data, and the utilization of insights to enhance dynamic/adaptive capability. Inadequate organizational alignment and member education on proactive utilization of insights can hinder effective utilization of consumer insights and impede a firm's adaptive capability (Erevelles, Fukawa, & Swayne, 2016). Bias-conscious algorithms have the potential to greatly enhance anticipated results, although the extent of improvement relies heavily on the discriminatory capacity of the information, encompassing accurate long-term strategizing. However, despite awareness of big data's advantages, top-level management often fails to prioritize it; this ranks as the second most important factor contributing to unsuccessful data outcomes, with scarcity of financial resources as the primary cause (Ahsen et al., 2017; Al-Dmour et al., 2023). Miller and Mork (2013) recommended a collaborative partnership for data collection from diverse stakeholders, aiming to optimize service delivery and quality decisions. They proposed streamlining data management activities to benefit all stakeholders and adopting a portfolio-management approach to invest in people, processes, and technology, thus maximizing the value of integrated data and improving organizational performance. | ||

| Line 25: | Line 27: | ||

Data science endeavors confront obstacles pertaining to data accessibility, data integrity, and dependence on external repositories, culminating in escalated intricacy. In response to these issues, one proposed resolution has been to embrace interconnectivity (Gunther et al., 2017), which refers to the ability to integrate data from heterogeneous big data repositories. Evidently, the advent of sophisticated technologies is progressively empowering users to assimilate disparate data sources and extract valuable insights from their combination. Hence, this scholarly investigation endeavors to bridge the lacuna in contemporary data research, which predominantly centers on the domain of Big Data. It does so by pivoting the attention towards a comprehensive end-to-end data cold-chain paradigm. | Data science endeavors confront obstacles pertaining to data accessibility, data integrity, and dependence on external repositories, culminating in escalated intricacy. In response to these issues, one proposed resolution has been to embrace interconnectivity (Gunther et al., 2017), which refers to the ability to integrate data from heterogeneous big data repositories. Evidently, the advent of sophisticated technologies is progressively empowering users to assimilate disparate data sources and extract valuable insights from their combination. Hence, this scholarly investigation endeavors to bridge the lacuna in contemporary data research, which predominantly centers on the domain of Big Data. It does so by pivoting the attention towards a comprehensive end-to-end data cold-chain paradigm. | ||

| − | =3. METHODOLOGY= | + | ===3. METHODOLOGY=== |

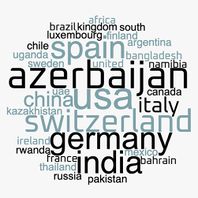

The grounded theory method (Corbin and Strauss, 2015) facilitates a rigorous analysis of data, exploring the interplay between good data and big data. Interviews with 65 Data experts from 21 countries across various fields aimed to understand their relation to data value deterioration. The broad geographical scope was strategically chosen for diverse insights, maintaining proximity to unorthodox paradigms (Bamberger, 2018). Respondents were contacted via Linkedin.com, resulting in a 5% response rate from invite to interview. The interviews were conducted in English through Zoom, recorded, transcribed by otter.ai, and analyzed using Atlas.ti. | The grounded theory method (Corbin and Strauss, 2015) facilitates a rigorous analysis of data, exploring the interplay between good data and big data. Interviews with 65 Data experts from 21 countries across various fields aimed to understand their relation to data value deterioration. The broad geographical scope was strategically chosen for diverse insights, maintaining proximity to unorthodox paradigms (Bamberger, 2018). Respondents were contacted via Linkedin.com, resulting in a 5% response rate from invite to interview. The interviews were conducted in English through Zoom, recorded, transcribed by otter.ai, and analyzed using Atlas.ti. | ||

| Line 33: | Line 35: | ||

Semi-structured interviews covered respondents' backgrounds, the data cold-chain, thinking patterns, and behaviors in data deployment. Open-ended "grand tour" queries and exploratory inquiries explored their experiences, learning trajectories, skill sets, challenges, and decision-making processes. The iterative refinement of the interview protocol accommodated emerging thematic elements, ensuring a comprehensive understanding of data experts' experiences. The sampling process continued until data saturation was achieved, indicating no new information emerged from supplementary responses. | Semi-structured interviews covered respondents' backgrounds, the data cold-chain, thinking patterns, and behaviors in data deployment. Open-ended "grand tour" queries and exploratory inquiries explored their experiences, learning trajectories, skill sets, challenges, and decision-making processes. The iterative refinement of the interview protocol accommodated emerging thematic elements, ensuring a comprehensive understanding of data experts' experiences. The sampling process continued until data saturation was achieved, indicating no new information emerged from supplementary responses. | ||

| − | =4. DATA ANALYSIS= | + | ===4. DATA ANALYSIS=== |

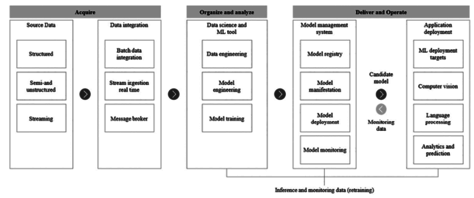

We established a theoretical foundation in innovation and technology management literature, guiding interviews with 65 diverse data experts (refer to figures 1, 2, and 3). | We established a theoretical foundation in innovation and technology management literature, guiding interviews with 65 diverse data experts (refer to figures 1, 2, and 3). | ||

==----== | ==----== | ||

| − | + | Insert figure 1, 2 and 3 here | |

| − | + | ||

==--- == | ==--- == | ||

| Line 49: | Line 50: | ||

The qualitative data analysis process involved open coding, memo writing, axial coding, constant comparison, and selective coding. Nine distinct categories, such as "data experts alignment," emerged from the analysis, representing discrete units of information. The foundational concept of alignment underwent iterative development during the 39th interview, involving continuous comparative analysis, additional sampling, and participant validation. The concept's robust utility and explanatory effectiveness were affirmed through evaluation among participants at later stages. | The qualitative data analysis process involved open coding, memo writing, axial coding, constant comparison, and selective coding. Nine distinct categories, such as "data experts alignment," emerged from the analysis, representing discrete units of information. The foundational concept of alignment underwent iterative development during the 39th interview, involving continuous comparative analysis, additional sampling, and participant validation. The concept's robust utility and explanatory effectiveness were affirmed through evaluation among participants at later stages. | ||

| − | =5. FINDINGS= | + | ===5. FINDINGS=== |

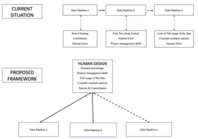

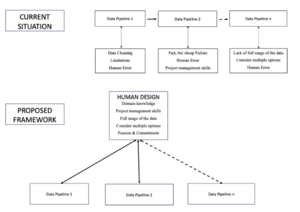

In the following discourse, we delineate the cognitive processes and behavioral tendencies exhibited by Data experts in relation to the various methodologies employed within the domain of data management. Subsequently, we expound upon the foundational characteristics underpinning these approaches, categorizing them into nine distinct classes. The present categorizations, encompassing both inadvertent occurrences such as human error and essential components like project management, can be ascribed to the prevailing characteristics of the extant data pipeline within the context of the prevailing data structure. In contrast, within the framework expounded upon in this investigation, it is conceivable that a centralized human-driven design could serve as the governing entity, exercising command over all facets inherent to the data pipeline, predicated upon a set of requisite attributes, specifically: Consider multiple options, Project management skills, Passion & Commitment, Domain knowledge & Full usage of data. (Fig. 4). The findings of this study underscore the imperative for a standardized and cohesive data collection-to-response data pipeline framework. Moreover, it accentuates the indispensability of human involvement within the data processing continuum. The discernible implication is the requirement for an initial stratum of automated processing, wherein each discrete stage should be methodically designed through augmentation methodologies. | In the following discourse, we delineate the cognitive processes and behavioral tendencies exhibited by Data experts in relation to the various methodologies employed within the domain of data management. Subsequently, we expound upon the foundational characteristics underpinning these approaches, categorizing them into nine distinct classes. 
The present categorizations, encompassing both inadvertent occurrences such as human error and essential components like project management, can be ascribed to the prevailing characteristics of the extant data pipeline within the context of the prevailing data structure. In contrast, within the framework expounded upon in this investigation, it is conceivable that a centralized human-driven design could serve as the governing entity, exercising command over all facets inherent to the data pipeline, predicated upon a set of requisite attributes, specifically: Consider multiple options, Project management skills, Passion & Commitment, Domain knowledge & Full usage of data. (Fig. 4). The findings of this study underscore the imperative for a standardized and cohesive data collection-to-response data pipeline framework. Moreover, it accentuates the indispensability of human involvement within the data processing continuum. The discernible implication is the requirement for an initial stratum of automated processing, wherein each discrete stage should be methodically designed through augmentation methodologies. | ||

| + | -----INSERT FIGURE 4 HERE | ||

----- | ----- | ||

| − | = | + | ===Data experts approach to data=== |

| − | + | ||

| − | + | ||

| − | + | ||

| − | ==Data experts approach to data== | + | |

The approach engendered by the cognitive patterns and behaviors of data experts may give rise to challenging scenarios and offer insights into the potential optimization of data value workflows, as posited by Bamberger (2018). To illustrate, the significance of expertise as a constraining factor is exemplified in situations where professionals are recruited despite possessing limited skills within a specific domain, based on their perceived long-term potential for delivering valuable contributions, as discussed by Snihur and Zott (2020). The introduction of a novel phenomenon into conventional software development life cycles can be discerned through behavioral inquiries, wherein, in the absence of traditional control mechanisms, collectives assume self-organizing roles, adapting their knowledge-sharing processes to enhance their capabilities in the context of deliberation and resolution, as elucidated by Kane et al. (2014). In the context of this study, nine features emerged from the comparative analysis to elucidate the characteristics of value deterioration: | The approach engendered by the cognitive patterns and behaviors of data experts may give rise to challenging scenarios and offer insights into the potential optimization of data value workflows, as posited by Bamberger (2018). To illustrate, the significance of expertise as a constraining factor is exemplified in situations where professionals are recruited despite possessing limited skills within a specific domain, based on their perceived long-term potential for delivering valuable contributions, as discussed by Snihur and Zott (2020). 
The introduction of a novel phenomenon into conventional software development life cycles can be discerned through behavioral inquiries, wherein, in the absence of traditional control mechanisms, collectives assume self-organizing roles, adapting their knowledge-sharing processes to enhance their capabilities in the context of deliberation and resolution, as elucidated by Kane et al. (2014). In the context of this study, nine features emerged from the comparative analysis to elucidate the characteristics of value deterioration: | ||

:1. Data Cleaning Limitations | :1. Data Cleaning Limitations | ||

| − | |||

:2. Lack of full usage of the data | :2. Lack of full usage of the data | ||

| − | |||

:3. Human Error | :3. Human Error | ||

| − | |||

:4. Fast, but cheap Failure | :4. Fast, but cheap Failure | ||

| − | |||

:5. Time Constraints | :5. Time Constraints | ||

| − | |||

:6. Consider multiple options | :6. Consider multiple options | ||

| − | |||

:7. Project management skills | :7. Project management skills | ||

| − | + | :8. Passion & Commitment | |

| − | + | :9. Domain knowledge | |

| − | :8. Passion & | + | |

| − | + | ||

| − | + | ||

In the ensuing discourse, we expound upon the intricate particulars encompassing each of the nine delineated categories, culminating with the central category of Data experts’ alignment. | In the ensuing discourse, we expound upon the intricate particulars encompassing each of the nine delineated categories, culminating with the central category of Data experts’ alignment. | ||

| − | ==DATA CLEANING LIMITATIONS== | + | ===DATA CLEANING LIMITATIONS=== |

The main insight from the interview is that the quality of a data model is only as good as the quality of the data used. The participant emphasizes the "garbage in, garbage out" principle, underscoring the importance of obtaining clean, noise-free data to prevent wasting time on extensive data cleaning. Consulting experts at every stage is critical to ensure the data's appropriateness for the task, which optimizes model performance and reduces inefficiencies. While data cleaning is essential for optimization, it is not the ultimate goal of big data analysis but rather a necessary tool to ensure accuracy. However, real-world datasets are often small, dirty, and even biased, which poses significant challenges in data science. These imperfections can act as limiting factors that influence or constrain model outcomes. As a result, data scientists must balance the task of refining datasets with the broader objective of deriving meaningful insights from imperfect data. This approach not only enhances workflow efficiency but also mitigates the risks associated with poor-quality data, ensuring more reliable decision-making. As per the following informants’ quotes: | The main insight from the interview is that the quality of a data model is only as good as the quality of the data used. The participant emphasizes the "garbage in, garbage out" principle, underscoring the importance of obtaining clean, noise-free data to prevent wasting time on extensive data cleaning. Consulting experts at every stage is critical to ensure the data's appropriateness for the task, which optimizes model performance and reduces inefficiencies. While data cleaning is essential for optimization, it is not the ultimate goal of big data analysis but rather a necessary tool to ensure accuracy. However, real-world datasets are often small, dirty, and even biased, which poses significant challenges in data science. 
These imperfections can act as limiting factors that influence or constrain model outcomes. As a result, data scientists must balance the task of refining datasets with the broader objective of deriving meaningful insights from imperfect data. This approach not only enhances workflow efficiency but also mitigates the risks associated with poor-quality data, ensuring more reliable decision-making. As per the following informants’ quotes: | ||

| Line 103: | Line 92: | ||

''#62: Time constraints in big companies limit data experts' ability to innovate. '' | ''#62: Time constraints in big companies limit data experts' ability to innovate. '' | ||

| − | ==LACK OF FULL USAGE OF THE DATA== | + | ===LACK OF FULL USAGE OF THE DATA=== |

There seem to be three important aspects of data management in machine learning. First, optimization is essential for effectively handling large datasets, as it directly impacts model performance. Second, strategies such as unbiased sampling methods are implemented to manage data more efficiently, helping to overcome memory limitations and reduce loading times. Lastly, privacy concerns pose significant challenges, limiting the full utilization of data while underscoring the importance of protecting client information. | There seem to be three important aspects of data management in machine learning. First, optimization is essential for effectively handling large datasets, as it directly impacts model performance. Second, strategies such as unbiased sampling methods are implemented to manage data more efficiently, helping to overcome memory limitations and reduce loading times. Lastly, privacy concerns pose significant challenges, limiting the full utilization of data while underscoring the importance of protecting client information. | ||
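The interviews do not name a specific sampling technique. As one possible illustration of an unbiased sampling method that works within memory limits, the sketch below uses reservoir sampling (Algorithm R), which draws a uniform random sample of fixed size from a stream without loading the whole dataset. The function name `reservoir_sample` and its parameters are hypothetical and chosen only for this example.

```python
import random
from typing import Iterable, List, Optional, TypeVar

T = TypeVar("T")


def reservoir_sample(stream: Iterable[T], k: int, seed: Optional[int] = None) -> List[T]:
    """Return a uniform random sample of up to k items from a stream.

    Illustrative sketch (Algorithm R); not a method reported by the informants.
    Only k items are held in memory at any time.
    """
    rng = random.Random(seed)
    reservoir: List[T] = []
    for i, item in enumerate(stream):
        if i < k:
            # Fill the reservoir with the first k items.
            reservoir.append(item)
        else:
            # Pick a slot in [0, i]; the new item replaces a kept item
            # with probability k / (i + 1).
            j = rng.randint(0, i)
            if j < k:
                reservoir[j] = item
    return reservoir
```

For example, `reservoir_sample(open("events.csv"), 10_000)` would keep 10,000 lines of a hypothetical file in memory regardless of the file's size. Privacy constraints of the kind the respondents describe would still need to be handled separately.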

| Line 121: | Line 110: | ||

''#61 In my role I train analysts for independent handling of basic statistics, aiming to shift their mindset toward utilizing machine learning and predictive modeling more frequently. I guide them on incorporating this valuable knowledge into their work. In a past banking job, a failure to fetch all database data affected numerous banking customers, highlighting the impact of incomplete tasks.'' | ''#61 In my role I train analysts for independent handling of basic statistics, aiming to shift their mindset toward utilizing machine learning and predictive modeling more frequently. I guide them on incorporating this valuable knowledge into their work. In a past banking job, a failure to fetch all database data affected numerous banking customers, highlighting the impact of incomplete tasks.'' | ||

| − | ==HUMAN ERROR== | + | ===HUMAN ERROR=== |

Respondents exhibit meticulousness in their procedural approach, diligently posing critical inquiries, especially in the domains of data retrieval and coding. This conscientiousness enables the discernment of a fundamental error. Furthermore, the statements underscore a disparity between the initial theoretical promise of innovative ideas from another department and their practical implementation, revealing inefficacy or flaws. This accentuates the significance of practical testing and validation in the evaluation of the viability of inventive solutions. | Respondents exhibit meticulousness in their procedural approach, diligently posing critical inquiries, especially in the domains of data retrieval and coding. This conscientiousness enables the discernment of a fundamental error. Furthermore, the statements underscore a disparity between the initial theoretical promise of innovative ideas from another department and their practical implementation, revealing inefficacy or flaws. This accentuates the significance of practical testing and validation in the evaluation of the viability of inventive solutions. | ||

| Line 137: | Line 126: | ||

''#45 Human error arises when assumptions directly influence data, potentially diverging from established knowledge and unsettling clients '' | ''#45 Human error arises when assumptions directly influence data, potentially diverging from established knowledge and unsettling clients '' | ||

| − | ==FAST, BUT CHEAP FAILURE== | + | ===FAST, BUT CHEAP FAILURE=== |

The interviews highlight that fostering an experimentation culture is fundamental to success in data science. A key insight is the focus on iterative learning, where teams continuously refine their processes by applying agile methods and adopting a "learning by doing" mindset. In industrial settings, this approach is particularly important, as starting models from scratch is emphasized to ensure accurate processing and better alignment with real-world data requirements: | The interviews highlight that fostering an experimentation culture is fundamental to success in data science. A key insight is the focus on iterative learning, where teams continuously refine their processes by applying agile methods and adopting a "learning by doing" mindset. In industrial settings, this approach is particularly important, as starting models from scratch is emphasized to ensure accurate processing and better alignment with real-world data requirements: | ||

| Line 159: | Line 148: | ||

A strategic approach to data handling is also emphasized, with smaller datasets recommended for initial testing. This method saves time and ensures that data reliability is assessed early on, minimizing the risk of errors down the line. The importance of data quality, swift feedback cycles, and an ongoing willingness to learn from mistakes are underlined as critical to refining data models and processes. By consistently focusing on these elements, data science teams can better align with industrial needs, ensuring agility and adaptability. | A strategic approach to data handling is also emphasized, with smaller datasets recommended for initial testing. This method saves time and ensures that data reliability is assessed early on, minimizing the risk of errors down the line. The importance of data quality, swift feedback cycles, and an ongoing willingness to learn from mistakes are underlined as critical to refining data models and processes. By consistently focusing on these elements, data science teams can better align with industrial needs, ensuring agility and adaptability. | ||

| − | ==CONSIDER MULTIPLE OPTIONS== | + | ===CONSIDER MULTIPLE OPTIONS=== |

The qualitative interviews reveal that exploring multiple options enhances efficiency and adaptability in data collection (#61, #4). Standards for model performance are acknowledged as sufficient (#2), but bridging the gap between data science and other departments maximizes the data's potential for informed decision-making (#60). Automating tedious tasks enables a focus on more creative aspects of data science (#6), while workflow optimization ensures a continuous flow, minimizing delays (#15). The inclusion of diverse viewpoints in projects emphasizes the need for careful algorithmic application to avoid unintended consequences (#45). Together, these insights highlight the balance between automation, creativity, and cross-functional collaboration. | The qualitative interviews reveal that exploring multiple options enhances efficiency and adaptability in data collection (#61, #4). Standards for model performance are acknowledged as sufficient (#2), but bridging the gap between data science and other departments maximizes the data's potential for informed decision-making (#60). Automating tedious tasks enables a focus on more creative aspects of data science (#6), while workflow optimization ensures a continuous flow, minimizing delays (#15). The inclusion of diverse viewpoints in projects emphasizes the need for careful algorithmic application to avoid unintended consequences (#45). Together, these insights highlight the balance between automation, creativity, and cross-functional collaboration. | ||

| Line 177: | Line 166: | ||

''#45: Diverse viewpoints in data science projects highlight the importance of cautious algorithmic application.'' | ''#45: Diverse viewpoints in data science projects highlight the importance of cautious algorithmic application.'' | ||

| − | ==PROJECT MANAGEMENT SKILLS== | + | ===PROJECT MANAGEMENT SKILLS=== |

The critical role of project management expertise in data science initiatives emerges: a skilled data expert underscores the importance of knowing when to initiate processes and the relevant techniques to employ. Limited participation in business-related discussions can lead to a fragmented understanding of projects, demonstrating the need for strong project management to facilitate successful outcomes and collaboration between data science and business teams. Furthermore, collaborative decision-making not only minimizes regrets but also reduces the necessity for extensive code modifications, fostering a growth-oriented mindset. Organizations that engage in diverse meetings for planning and coordination, alongside inter-team collaborations, enhance their ability to prioritize effectively and achieve their goals. | The critical role of project management expertise in data science initiatives emerges: a skilled data expert underscores the importance of knowing when to initiate processes and the relevant techniques to employ. Limited participation in business-related discussions can lead to a fragmented understanding of projects, demonstrating the need for strong project management to facilitate successful outcomes and collaboration between data science and business teams. Furthermore, collaborative decision-making not only minimizes regrets but also reduces the necessity for extensive code modifications, fostering a growth-oriented mindset. Organizations that engage in diverse meetings for planning and coordination, alongside inter-team collaborations, enhance their ability to prioritize effectively and achieve their goals. | ||

| Line 199: | Line 188: | ||

''#35 Positive feedback is identified as crucial in motivating individuals for future projects. Overcoming challenges and persevering through difficulties can be demotivating, prompting reflection on recent efforts and accomplishments '' | ''#35 Positive feedback is identified as crucial in motivating individuals for future projects. Overcoming challenges and persevering through difficulties can be demotivating, prompting reflection on recent efforts and accomplishments '' | ||

| − | ==DOMAIN KNOWLEDGE == | + | ===DOMAIN KNOWLEDGE === |

Achieving true representativity in data science is complex because it depends heavily on individual circumstances, which makes a universal definition difficult to establish. Engaging in business-oriented discussions allows respondents to gain valuable context and explore data iteratively, facilitating the sharing of findings with stakeholders and generating new questions that drive further inquiry. | Achieving true representativity in data science is complex because it depends heavily on individual circumstances, which makes a universal definition difficult to establish. Engaging in business-oriented discussions allows respondents to gain valuable context and explore data iteratively, facilitating the sharing of findings with stakeholders and generating new questions that drive further inquiry. | ||

| Line 211: | Line 200: | ||

#45 "Shadowing" with business units spreads domain knowledge, bridging the gap between technical and business language for effective communication | #45 "Shadowing" with business units spreads domain knowledge, bridging the gap between technical and business language for effective communication | ||

| − | ==TIME CONSTRAINTS== | + | ===TIME CONSTRAINTS=== |

There is an inherent uncertainty in data science, where multiple initial spikes or proofs of concept (POCs) are acceptable because the investigation time remains unpredictable. Managing expectations regarding immediate results is essential, which underscores the importance of clear project timelines and the acquisition of necessary datasets. The use of minimum viable products (MVPs) allows for continuous feedback, helping teams navigate the challenges associated with time and resource management. By setting realistic expectations and utilizing iterative approaches, data science projects can be more effectively aligned with organizational goals, enhancing their potential for success. | There is an inherent uncertainty in data science, where multiple initial spikes or proofs of concept (POCs) are acceptable because the investigation time remains unpredictable. Managing expectations regarding immediate results is essential, which underscores the importance of clear project timelines and the acquisition of necessary datasets. The use of minimum viable products (MVPs) allows for continuous feedback, helping teams navigate the challenges associated with time and resource management. By setting realistic expectations and utilizing iterative approaches, data science projects can be more effectively aligned with organizational goals, enhancing their potential for success. | ||

| Line 220: | Line 209: | ||

<span id='_Toc370462709'></span> | <span id='_Toc370462709'></span> | ||

| − | ==DATA EXPERTS’ ALIGNMENT (CORE CATEGORY)== | + | ===DATA EXPERTS’ ALIGNMENT (CORE CATEGORY)=== |

Close collaboration between data experts and other professionals is essential for delivering effective services. While data experts often prioritize insights over software quality, it’s crucial to ensure alignment across the entire organization. Careful research and planning can prevent testing issues and errors, resulting in significant time savings and improved accuracy. A lack of alignment poses a major challenge, as data experts often focus on insights during tight timelines, mirroring the mindset of other roles like data engineers and QA specialists. This alignment transcends mere process coordination; it requires recognizing each individual action as part of a cohesive "cold" chain. To enhance internal processes in data science, active engagement and the suggestion of improvement ideas are necessary, alongside dedicated time for data exploration and analysis. Thorough planning is vital to avoid testing issues and minimize the need for extensive model training. By leveraging smaller, high-quality datasets and establishing robust testing protocols, organizations can save time and boost accuracy. Moreover, maintaining alignment within an external ecosystem of data experts demands effective leadership that acknowledges individual strengths and identifies collaboration needs, ensuring teams work together towards common goals and optimal results. | Close collaboration between data experts and other professionals is essential for delivering effective services. While data experts often prioritize insights over software quality, it’s crucial to ensure alignment across the entire organization. Careful research and planning can prevent testing issues and errors, resulting in significant time savings and improved accuracy. A lack of alignment poses a major challenge, as data experts often focus on insights during tight timelines, mirroring the mindset of other roles like data engineers and QA specialists. 
This alignment transcends mere process coordination; it requires recognizing each individual action as part of a cohesive "cold" chain. To enhance internal processes in data science, active engagement and the suggestion of improvement ideas are necessary, alongside dedicated time for data exploration and analysis. Thorough planning is vital to avoid testing issues and minimize the need for extensive model training. By leveraging smaller, high-quality datasets and establishing robust testing protocols, organizations can save time and boost accuracy. Moreover, maintaining alignment within an external ecosystem of data experts demands effective leadership that acknowledges individual strengths and identifies collaboration needs, ensuring teams work together towards common goals and optimal results. | ||

| Line 235: | Line 224: | ||

#35 In an academic context, single-handedly managing the entire project involving data collection, workflow creation, and dashboard design may lead to issues if there's a lack of alignment or synchronization with project objectives | #35 In an academic context, single-handedly managing the entire project involving data collection, workflow creation, and dashboard design may lead to issues if there's a lack of alignment or synchronization with project objectives | ||

| + | Value deterioration occurs due to a lack of congruence but can be addressed through a cohesive human framework and uninterrupted technological progression. | ||

| − | == | + | === 6. DISCUSSION === |

| − | + | ||

| − | =6. DISCUSSION= | + | |

| − | + | ||

This discussion begins by exploring the question, "How do data experts’ decision-making patterns and behaviors influence data value?" The study validates the socio-technical approach (Markus & Topi, 2015), which posits that successful data science projects depend not only on technical excellence but also on effective communication skills and collaboration across teams. This perspective aligns with the Triple Helix model, which stresses the collaborative interplay between government, industry, and society, including academia. The paper critiques the conventional focus on expediency and coding-centric practices, as these may hinder long-term data value, as noted by Nadkarni & Prügl (2021). | This discussion begins by exploring the question, "How do data experts’ decision-making patterns and behaviors influence data value?" The study validates the socio-technical approach (Markus & Topi, 2015), which posits that successful data science projects depend not only on technical excellence but also on effective communication skills and collaboration across teams. This perspective aligns with the Triple Helix model, which stresses the collaborative interplay between government, industry, and society, including academia. The paper critiques the conventional focus on expediency and coding-centric practices, as these may hinder long-term data value, as noted by Nadkarni & Prügl (2021). | ||

| Line 246: | Line 233: | ||

In terms of challenges, the research identifies that data science projects often face demotivation due to the lack of a supportive atmosphere. In the Triple Helix context, this is echoed in the struggles municipalities and organizations face when attempting to build cohesive, large-scale data ecosystems. Examples from Metro Boston and New York City illustrate the difficulties in achieving uniformity across geographical and organizational boundaries (Kitchin & Moore-Cherry, 2021; OpenData, 2024). However, efforts like cross-sectional data initiatives and public-private partnerships show promise in bridging these gaps. This study’s emphasis on human engagement, workflow optimization, and clear communication is crucial for success, as seen in successful urban data ecosystems that utilize similar frameworks (Woods, Bunnell & Kong, 2023). The integration of human elements and social interactions is vital to project success, as illustrated by Singh et al. (2018) in the cold chain sector, which demonstrates how a singular data metric can minimize value deterioration. This focus on aligning human input with technical outputs aligns with the Triple Helix literature’s emphasis on structured collaboration, professional development, and ethical data practices. In particular, this study calls for corporate education initiatives that foster a data-sharing culture, essential for maintaining data value and adaptability. Finally, the Triple Helix model offers a pathway to overcoming fragmented data systems by advocating for collaboration infrastructures and public-private partnerships. Lessons from global smart city projects—like Singapore’s emphasis on platformization and stakeholder engagement—show how integrating data across sectors can lead to innovation and sustainability (Woods, Bunnell & Kong, 2023; Zhu & Chen, 2022). 
This research proposes that, much like the cold chain’s reliance on temperature as a unifying metric, the broader data science community must adopt standardized metrics and collaborative strategies to sustain data ecosystems that are both reliable and effective. | In terms of challenges, the research identifies that data science projects often face demotivation due to the lack of a supportive atmosphere. In the Triple Helix context, this is echoed in the struggles municipalities and organizations face when attempting to build cohesive, large-scale data ecosystems. Examples from Metro Boston and New York City illustrate the difficulties in achieving uniformity across geographical and organizational boundaries (Kitchin & Moore-Cherry, 2021; OpenData, 2024). However, efforts like cross-sectional data initiatives and public-private partnerships show promise in bridging these gaps. This study’s emphasis on human engagement, workflow optimization, and clear communication is crucial for success, as seen in successful urban data ecosystems that utilize similar frameworks (Woods, Bunnell & Kong, 2023). The integration of human elements and social interactions is vital to project success, as illustrated by Singh et al. (2018) in the cold chain sector, which demonstrates how a singular data metric can minimize value deterioration. This focus on aligning human input with technical outputs aligns with the Triple Helix literature’s emphasis on structured collaboration, professional development, and ethical data practices. In particular, this study calls for corporate education initiatives that foster a data-sharing culture, essential for maintaining data value and adaptability. Finally, the Triple Helix model offers a pathway to overcoming fragmented data systems by advocating for collaboration infrastructures and public-private partnerships. 
Lessons from global smart city projects—like Singapore’s emphasis on platformization and stakeholder engagement—show how integrating data across sectors can lead to innovation and sustainability (Woods, Bunnell & Kong, 2023; Zhu & Chen, 2022). This research proposes that, much like the cold chain’s reliance on temperature as a unifying metric, the broader data science community must adopt standardized metrics and collaborative strategies to sustain data ecosystems that are both reliable and effective. | ||

| − | =7. THEORETICAL CONTRIBUTIONS= | + | ===7. THEORETICAL CONTRIBUTIONS=== |

The theoretical implications of this research extend the understanding of data science project management by integrating insights from the cold chain industry and the Triple Helix model, emphasizing the role of human collaboration in maintaining data value. This study reframes data science projects not only as technical endeavors but as socio-technical systems where cross-sectoral collaboration is essential. The cold chain serves as an analogy, demonstrating how a unified variable, like temperature, ensures data consistency and prevents deterioration. Similarly, the research advocates for the standardization of data metrics and processes across data ecosystems, a key principle in Triple Helix literature, which emphasizes collaboration between government, industry, and society for sustainable data practices. A key contribution of this study lies in its recognition of data experts' decision-making patterns, positioning them as central to the data creation and curation process, much like the standardization processes that optimized human expertise during the industrial revolution. Challenges such as data availability, quality, and reliability, particularly in under-digitalized contexts, are identified as critical factors that can impede the success of data projects (Schweinsberg et al., 2021). These issues reflect broader systemic challenges found in data ecosystems that lack the cohesive frameworks advocated by the Triple Helix model. | The theoretical implications of this research extend the understanding of data science project management by integrating insights from the cold chain industry and the Triple Helix model, emphasizing the role of human collaboration in maintaining data value. This study reframes data science projects not only as technical endeavors but as socio-technical systems where cross-sectoral collaboration is essential. 
The cold chain serves as an analogy, demonstrating how a unified variable, like temperature, ensures data consistency and prevents deterioration. Similarly, the research advocates for the standardization of data metrics and processes across data ecosystems, a key principle in Triple Helix literature, which emphasizes collaboration between government, industry, and society for sustainable data practices. A key contribution of this study lies in its recognition of data experts' decision-making patterns, positioning them as central to the data creation and curation process, much like the standardization processes that optimized human expertise during the industrial revolution. Challenges such as data availability, quality, and reliability, particularly in under-digitalized contexts, are identified as critical factors that can impede the success of data projects (Schweinsberg et al., 2021). These issues reflect broader systemic challenges found in data ecosystems that lack the cohesive frameworks advocated by the Triple Helix model. | ||

| Line 254: | Line 241: | ||

In conclusion, this research advances the theoretical discourse on data science management by emphasizing the need for standardized practices, human-centered design, and collaborative frameworks to optimize the potential of data experts. It suggests that adopting a unified, standardized approach—similar to the cold chain’s temperature focus—can mitigate the risks of data deterioration and enhance the overall success of data-driven initiatives. Through the lens of the Triple Helix model, this study calls for a deeper integration of cross-sectoral partnerships and knowledge-sharing mechanisms to sustain robust data ecosystems. | In conclusion, this research advances the theoretical discourse on data science management by emphasizing the need for standardized practices, human-centered design, and collaborative frameworks to optimize the potential of data experts. It suggests that adopting a unified, standardized approach—similar to the cold chain’s temperature focus—can mitigate the risks of data deterioration and enhance the overall success of data-driven initiatives. Through the lens of the Triple Helix model, this study calls for a deeper integration of cross-sectoral partnerships and knowledge-sharing mechanisms to sustain robust data ecosystems. | ||

| − | =8. PRACTICAL IMPLICATION= | + | ===8. PRACTICAL IMPLICATION=== |

The research offers valuable practical contributions to the field of data science, particularly by highlighting the importance of understanding data creation processes to prevent value deterioration. Drawing on insights from the cold chain industry, where a singular variable like temperature ensures data consistency, the study emphasizes the need for organizations and researchers to adopt standardized metrics across data workflows. This approach, akin to a "data cold chain," can help align data experts and maintain quality throughout the data lifecycle. Furthermore, integrating the Triple Helix model of collaboration between government, industry, and society proves essential for addressing data accessibility challenges. By fostering interconnectivity and partnership, diverse data sources can be integrated more effectively, yielding better insights and enhancing the decision-making process. The emphasis on sustaining motivation within data science teams underscores the importance of creating a supportive atmosphere—one that prioritizes human-centric design, open communication, and interdisciplinary collaboration. These elements are essential for overcoming the common barriers to success in data-driven projects. The research also stresses the importance of building a data-sharing culture, where data is seamlessly integrated into decision-making processes. Implementing standardized metrics and creating collaborative infrastructures, as seen in successful Triple Helix partnerships, is critical for preserving data value and preventing its deterioration. In conclusion, this study highlights that fostering collaboration, promoting a data-driven culture, and adopting human-centric approaches are key to optimizing data workflows. These strategies not only enable innovation but also help organizations overcome the complex challenges that arise in data science endeavors. 
Establishing a cohesive data "cold chain" within these frameworks ensures a comprehensive, end-to-end approach to managing data. | The research offers valuable practical contributions to the field of data science, particularly by highlighting the importance of understanding data creation processes to prevent value deterioration. Drawing on insights from the cold chain industry, where a singular variable like temperature ensures data consistency, the study emphasizes the need for organizations and researchers to adopt standardized metrics across data workflows. This approach, akin to a "data cold chain," can help align data experts and maintain quality throughout the data lifecycle. Furthermore, integrating the Triple Helix model of collaboration between government, industry, and society proves essential for addressing data accessibility challenges. By fostering interconnectivity and partnership, diverse data sources can be integrated more effectively, yielding better insights and enhancing the decision-making process. The emphasis on sustaining motivation within data science teams underscores the importance of creating a supportive atmosphere—one that prioritizes human-centric design, open communication, and interdisciplinary collaboration. These elements are essential for overcoming the common barriers to success in data-driven projects. The research also stresses the importance of building a data-sharing culture, where data is seamlessly integrated into decision-making processes. Implementing standardized metrics and creating collaborative infrastructures, as seen in successful Triple Helix partnerships, is critical for preserving data value and preventing its deterioration. In conclusion, this study highlights that fostering collaboration, promoting a data-driven culture, and adopting human-centric approaches are key to optimizing data workflows. 
These strategies not only enable innovation but also help organizations overcome the complex challenges that arise in data science endeavors. Establishing a cohesive data "cold chain" within these frameworks ensures a comprehensive, end-to-end approach to managing data. | ||

| − | =9. LIMITATIONS = | + | ===9. LIMITATIONS === |

The findings and conclusions of this study are contextually bound to data science projects, particularly in the realm of socio-technical collaborations and data management practices. Extrapolation to other domains or industries should be done cautiously, as unique contextual factors may influence outcomes differently. Furthermore, the data collected for this study may exhibit sampling bias, potentially limiting the generalizability of the results. The study's reliance on specific industries, organizational sizes, and geographical locations may restrict the broader applicability of the findings. While the study adopts a grounded theory approach, it does not draw on other methodological instruments such as statistical analyses and visualization techniques, so it may not comprehensively capture all nuances and dimensions of data value preservation. Alternative methodologies and measures could yield different insights. The grounded theory framework proposed is limited to a specific timeframe, and technological advancements or shifts in organizational practices occurring after the study's conclusion might not be adequately represented, potentially affecting the relevance of the conclusions over time. | The findings and conclusions of this study are contextually bound to data science projects, particularly in the realm of socio-technical collaborations and data management practices. Extrapolation to other domains or industries should be done cautiously, as unique contextual factors may influence outcomes differently. Furthermore, the data collected for this study may exhibit sampling bias, potentially limiting the generalizability of the results. The study's reliance on specific industries, organizational sizes, and geographical locations may restrict the broader applicability of the findings. While the study adopts a grounded theory approach, it does not draw on other methodological instruments such as statistical analyses and visualization techniques, so it may not comprehensively capture all nuances and dimensions of data value preservation. Alternative methodologies and measures could yield different insights. The grounded theory framework proposed is limited to a specific timeframe, and technological advancements or shifts in organizational practices occurring after the study's conclusion might not be adequately represented, potentially affecting the relevance of the conclusions over time. | ||

| − | =10. REFERENCES= | + | ===10. REFERENCES=== |

Abbasi, A., Sarker, S., & Chiang, R. H. (2016). Big data research in information systems: Toward an inclusive research agenda. Journal of the Association for Information Systems, 17(2), 3. | Abbasi, A., Sarker, S., & Chiang, R. H. (2016). Big data research in information systems: Toward an inclusive research agenda. Journal of the Association for Information Systems, 17(2), 3. | ||

| Line 396: | Line 383: | ||

3. [[Image:Draft_Toscani_720756083-image3.jpeg|198px]] | 3. [[Image:Draft_Toscani_720756083-image3.jpeg|198px]] | ||

| − | '''Figure | + | '''Figure 3 Respondents’ Country''' |

4. [[Image:Draft_Toscani_720756083-image4.png|198px]] | 4. [[Image:Draft_Toscani_720756083-image4.png|198px]] | ||

'''Figure 4 Framework''' | '''Figure 4 Framework''' | ||

| − | + | [[File:Image 4.png|left|thumb]] | |

| − | [[ | + | |


| − | ==APPENDIX== | + | ===APPENDIX=== |

5. [[Image:Draft_Toscani_720756083-image6.png|600px]] | 5. [[Image:Draft_Toscani_720756083-image6.png|600px]] | ||


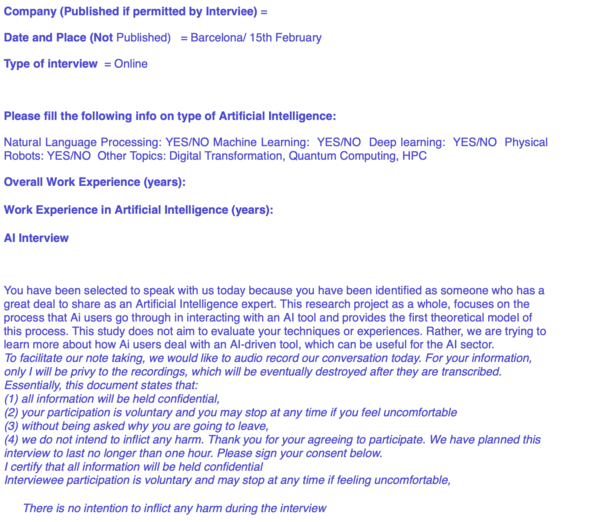

| − | + | Appendix Figure 1 Interviewee’s Consent | |

Revision as of 16:11, 20 January 2025

Chilling Out Data Chaos: Leveraging Cold Chain Principles and the Triple Helix Framework

Giulio Toscani

ABSTRACT

As big data increasingly influences decision-making, ensuring data consistency and reliability has become essential. This paper explores how the cold chain’s singular focus on temperature can serve as a model for building unified, high-quality, and sustainable data systems. Although the literature primarily emphasizes machine learning, this grounded theory research investigates how big data quality impacts technological execution and decision-making across industries. Drawing on 65 expert interviews, the study’s final discussion highlights the potential of the Triple Helix model (collaboration between government, industry, and society) as a framework for creating unified data systems. The cold chain, with its focus on temperature as the sole variable, exemplifies how such collaboration can enhance data reliability. Key challenges identified include data governance, operational inconsistencies, and barriers to collaboration. The paper proposes solutions such as professional training and the development of collaborative infrastructures to improve data dependability and support sustainable decision-making across industries.

INDEX TERMS Outcome, Data expert, Big Data, Value deterioration, Cold Chain

1. INTRODUCTION

In recent times, the proliferation of digital technologies has led to a surge in big data accumulation, sparking interest among professionals and scholars. Organizations leverage big data for insights and a competitive edge, but its size and complexity present challenges (Fan, Fang & Han, 2014). Concerns include data quality, where subjective choices by researchers play a role (Naeem et al., 2022). This research contrasts human design with unbroken technological execution in the cold-chain sector, analyzing value deterioration over time (Singh et al., 2018) and its impact on sustainability (Farrukh & Holgado, 2020). Miller and Mork's (2013) data value-chain model guides the study, examining decision-making processes from a value-rational approach. The focus is on how data experts navigate decision-making processes and behaviors within this context (Snihur & Zott, 2020). More research is needed on how data experts' thinking patterns and behaviors relate to data deterioration, with the aim of providing insights for optimal data use (Rahwan et al., 2019).

The paper aims to help organizations understand how data use may drive or impede data projects by exploring data experts' decision-making patterns and behaviors. The research question is:

RQ: How do Data experts' decision-making patterns and behaviors influence data value?

2. LITERATURE REVIEW

In the present era, the widespread adoption and advancement of digital technologies have greatly increased the accumulation of big data, that is, extensive volumes of data valued for their capacity to enhance organizational performance (Al-Dmour et al., 2023). Firms utilizing AI exhibit distinctive traits: a unique operational framework prioritizing data, integration of AI into core processes, real-time decision-making through experimentation, and granular forecasting. They proactively learn from customer feedback via real-time experiments, enhancing offerings through continual data evaluation (Iansiti & Lakhani, 2020). This potential explains the extensive attention and scholarly curiosity that big data has attracted from experts and researchers across diverse academic fields (Abbasi, Sarker, & Chiang, 2016). The variability of human conditions undermines the outcome of human decisions; a machine-learning decision-making tool would be less prone to such variation, but would still be affected by human biases (Obermeyer & Lee, 2017; Allen & Choudury, 2022). However, the findings indicate that companies have implemented big data analytics applications only moderately and unevenly, owing to inadequacies and disparities in their organizational and technological capabilities (Al-Dmour et al., 2023). Big data poses challenges due to its high dimensionality and large sample size. These challenges include noise accumulation, spurious correlations, and incidental homogeneity caused by high dimensionality. Additionally, the combination of high dimensionality and large sample size leads to issues like heavy computational costs and algorithmic instability. Furthermore, the aggregation of massive samples from multiple sources at different time points using various technologies adds complexity to the analysis (Fan, Fang and Han, 2014).
This is important because when classification algorithms use human-generated input data that suffer from human biases (Sayogo et al., 2014), the predictions they generate may exacerbate the errors stemming from such biases and increase technical debt (Park, Jang & Lee, 2018). When the bias-induced error varies, the impact of bias can be mitigated, but not eliminated, even if the algorithmic design is adjusted to account for the bias (Ahsen et al., 2017). The moderation of resource capital influences the collection and storage of consumer activity records as Big Data, the extraction of insights from Big Data, and the utilization of insights to enhance dynamic/adaptive capability. Inadequate organizational alignment and member education on proactive utilization of insights can hinder effective utilization of consumer insights and impede a firm's adaptive capability (Erevelles, Fukawa, & Swayne, 2016). Such obstacles may hinder the effective utilization of data. Bias-conscious algorithms have the potential to greatly enhance anticipated results, including accurate long-term strategizing, although the extent of improvement relies heavily on the discriminatory capacity of the information. However, despite awareness of big data's advantages, top-level management fails to prioritize it; this ranks as the second factor contributing to unsuccessful data outcomes, with a scarcity of financial resources as the primary cause (Ahsen et al., 2017; Al-Dmour et al., 2023). Miller and Mork (2013) recommended a collaborative partnership for data collection from diverse stakeholders, aiming to optimize service delivery and quality decisions. They proposed streamlining data management activities to benefit all stakeholders and adopting a portfolio-management approach to invest in people, processes, and technology, thus maximizing the value of integrated data and improving organizational performance.

Furthermore, despite significant advancements in safeguarding healthcare data in the digital age and ongoing efforts by researchers to enhance security measures, vulnerabilities remain: attackers can compromise even highly secure and sensitive information stored on cloud servers and potentially modify it for personal gain (Gupta, Gaurav, & Panigrahi, 2023). The study conducted by Lebovitz et al. (2022) substantiates the costly nature of big data outcomes, prompting a focused examination of where companies direct investments and which key factors they prioritize to achieve superior data quality. With this research, we sought to shed light on how uncovering latent consumer insights significantly enhances adaptive capacity, enabling firms to anticipate future trends and behaviors, as exemplified by Amazon's anticipatory shipping and Target's pregnancy detection. This underscores the importance of an ignorance-based perspective and inductive reasoning for eliciting novel inquiries that can uncover hidden consumer insights beyond existing knowledge (Erevelles, Fukawa, & Swayne, 2016). When considering the human-led brokerage process in an algorithm, we examined the collective long-term output versus the single decision-maker's short-term brokering in her or his own favour (Waanderburg et al., 2022). This study highlights the absence of a cohesive framework for data collection and sharing among organizations, resulting in security vulnerabilities exploited by attackers. Consequently, the establishment of a resilient and standardized protocol for processing business-to-business healthcare data becomes imperative (Gupta, Gaurav, & Panigrahi, 2023). Our collaboration with Data experts makes it possible to analyze in detail the procedural aspects of data generation, as opposed to its utilization.
Our focus is not on how chefs prepare dishes, but rather on the techniques chemists employ to formulate the constituent elements that chefs subsequently manipulate. At the same time, we seek to demystify the exalted status often accorded to technology and to assert the primacy of human agency by advocating decision-making processes rooted in human cognition. However, it is imperative to consider mechanisms whereby collaborative efforts between the public and private sectors are leveraged to foster innovation by facilitating platformization processes (Woods, Bunnel & Kong, 2023). The analysis of these extensive datasets is crucial for assessing both firm-specific and systematic risks. It requires professionals well-versed in advanced statistical techniques applicable to portfolio management, securities regulation, proprietary trading, financial consulting, and risk management (Fan, Han and Liu, 2014). The primary focus of this paper is not individual skills in isolation; rather, it concerns a comprehensive capability that surpasses the confines of human expertise. From an academic standpoint, analysis of the data cold-chain process makes evident that numerous obstacles arise from the lack of uniform practices across diverse domains, leading to the deterioration of data value. Indeed, it is unlikely that a single comprehensive workflow can be portrayed for the entire data lifecycle, from the initial acquisition phase to the subsequent stages of data processing and storage (Belcore et al., 2021). To attain this objective, it is imperative to engage key stakeholders and implement a techno-bureaucratic framework that combines strategies targeting urban social, economic, and environmental complexities and prioritizes citizen engagement and ecological stewardship (Palumbo et al., 2021).
Therefore, while it would be reductionist to equate the complexity inherent in data metrics with temperature, the single metric that characterizes the cold-chain, establishing a reduced set of collectively agreed-upon metrics offers noteworthy advantages, as previously underscored by Fu et al. (2022) through their formulation of objective performance benchmarks. This would be similar to how the internet operates seamlessly today: a remarkable illustration of the advantageous outcomes of consensus among various stakeholders, including government, industry, and academia, who collaborated as partners in the progressive advancement and implementation of the internet (Leiner et al., 2009). Similarly, the Open Container Initiative (OCI), introduced under the auspices of the Linux Foundation in mid-2015, seeks to establish open standards for container runtime and configuration. Given the pervasive adoption of containerization, OCI swiftly gained the support of more than 30 corporations and entities, including prominent providers of cloud computing and application frameworks (Fu et al., 2016). Furthermore, bureaucratic structures uphold fundamental principles while dynamically influencing and responding to their surroundings through lower-level agents, crafting regulations amid societal shifts (Lekkas & Souitaris, 2023).

Data science endeavors confront obstacles pertaining to data accessibility, data integrity, and dependence on external repositories, culminating in escalated intricacy. In response to these issues, one proposed resolution has been to embrace interconnectivity (Gunther et al., 2017), which refers to the ability to integrate data from heterogeneous big data repositories. Evidently, the advent of sophisticated technologies is progressively empowering users to assimilate disparate data sources and extract valuable insights from their combination. Hence, this scholarly investigation endeavors to bridge the lacuna in contemporary data research, which predominantly centers on the domain of Big Data. It does so by pivoting the attention towards a comprehensive end-to-end data cold-chain paradigm.

3. METHODOLOGY

The grounded theory method (Corbin and Strauss, 2015) facilitates a rigorous analysis of data, exploring the interplay between good data and big data. Interviews with 65 Data experts from 21 countries across various fields aimed to understand their relation to data value deterioration. The broad geographical scope was strategically chosen for diverse insights, maintaining proximity to unorthodox paradigms (Bamberger, 2018). Respondents were contacted via Linkedin.com, resulting in a 5% response rate from invite to interview. The interviews were conducted in English through Zoom, recorded, transcribed by otter.ai, and analyzed using Atlas.ti.

The primary theoretical construct derived from interviews is the "data cold-chain," emphasizing coherence in inputs and processes for data tasks (Von Krogh, 2018). An iterative approach to data analysis led to the identification and categorization of distinct features within data technology. Decision-making processes of executives and managers in creating data systems were explored through recorded interviews, averaging 30–45 minutes in length. A four-step, grounded, iterative analysis process (Corbin & Strauss, 2015) involved open coding, analytical filters, axial coding, and selective coding. The resulting conceptual model illustrates Data experts' thinking patterns and behaviors regarding data value.

Semi-structured interviews covered respondents' backgrounds, the data cold-chain, thinking patterns, and behaviors in data deployment. Open-ended "grand tour" queries and exploratory inquiries explored their experiences, learning trajectories, skill sets, challenges, and decision-making processes. The iterative refinement of the interview protocol accommodated emerging thematic elements, ensuring a comprehensive understanding of data experts' experiences. The sampling process continued until data saturation was achieved, indicating no new information emerged from supplementary responses.
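
As an illustration only, the saturation stopping rule described above can be sketched in a few lines of Python. This is not the procedure used in the study, which reports no numeric criterion: the function name `interviews_until_saturation`, the `patience` threshold, and the example code labels are all hypothetical, and saturation is assumed here to mean a run of consecutive interviews that add no previously unseen code.

```python
from typing import Iterable, Set


def interviews_until_saturation(coded_interviews: Iterable[Set[str]],
                                patience: int = 3) -> int:
    """Return how many interviews were processed when saturation was reached.

    Assumption (not from the study): saturation means `patience` consecutive
    interviews that contribute no code that has not been seen before.
    If that never happens, the total number of interviews is returned.
    """
    seen: Set[str] = set()
    quiet_streak = 0  # consecutive interviews that added no new code
    count = 0
    for codes in coded_interviews:
        count += 1
        new_codes = codes - seen      # codes first observed in this interview
        seen |= codes
        quiet_streak = 0 if new_codes else quiet_streak + 1
        if quiet_streak >= patience:  # stop sampling: nothing new is emerging
            break
    return count


# Hypothetical code labels, for illustration only.
example = [
    {"alignment", "governance"},
    {"governance", "training"},
    {"alignment"},
    {"training"},
    {"governance"},
    {"bias"},
]
stopped_at = interviews_until_saturation(example, patience=3)
```

In this toy input the third, fourth, and fifth interviews add nothing new, so sampling would stop after the fifth. In practice the judgment of saturation is qualitative, and a counter of this kind can only support, not replace, the researcher's assessment.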

4. DATA ANALYSIS

We established a theoretical foundation in the innovation and technology management literature, which guided interviews with 65 diverse data experts (see Figures 1, 2, and 3).

----

Insert Figures 1, 2, and 3 here

----

The iterative coding process, facilitated by Atlas.ti software, involved rigorous scrutiny of interview transcripts and memos, organizing respondent comments into thematic categories aligned with preliminary codes. We drew insights from relevant literature, such as works by Chen (2012), Jean, Burke et al. (2012), and Ghahramani (2015), to interpret emerging concepts and strategies related to algorithmic biases.

Engaging data experts in comprehensive inquiries, our research aimed to understand their data interactions, perspectives on data strategy, awareness of evolving dynamics, enhancements in team dynamics, and identification of those benefiting from strategic choices. Although unable to undertake secondary observations and field visits, our interviews provided profound insights into human impact on data, contributing significantly to the literature. The expert process model we present enriches the understanding of human influence in data technology and underscores variations in individuals' approaches to data work.

The qualitative data analysis process involved open coding, memo writing, axial coding, constant comparison, and selective coding. Nine distinct categories, such as "data experts alignment," emerged from the analysis, representing discrete units of information. The foundational concept of alignment underwent iterative development during the 39th interview, involving continuous comparative analysis, additional sampling, and participant validation. The concept's robust utility and explanatory effectiveness were affirmed through evaluation among participants at later stages.

5. FINDINGS